My memory is gone after binary file write operation on Linux RT

01-11-2019 12:24 AM

Hi,

I'm trying to log some data on my Linux RT target. However, I notice that as I perform more and more file write operations, the free physical memory available on the target keeps decreasing. I read something similar in the "Memory Leak or Not in TDMS" thread, but I'm not sure whether it is the same thing. I want to know whether this is expected behavior or a bug, and whether there is a way to get my memory back after file write operations. Please refer to SimplifiedFileGenerateMemoryLeak.vi in the attached zip file.

My steps to observe the issue: (Note that I've tried it on cRIO-9033 and sbRIO-9607.)

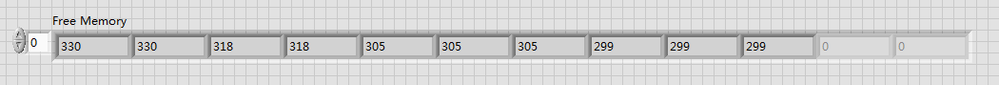

1. Run the VI. Check the array values, which reflect the free memory left.

Use WinSCP to check that five dat files with size 6400KB are generated in a sub-folder under /home/lvuser.

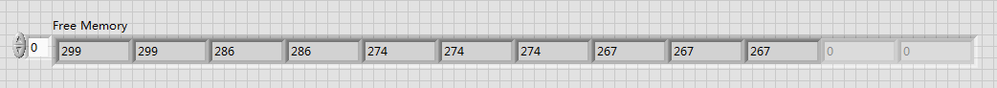

2. Don’t do anything else and run the VI again. Check the array values. Note that the first value is the same as, or close to, the last value in step 1.

Note: You can repeat this several times and should see similar behavior.

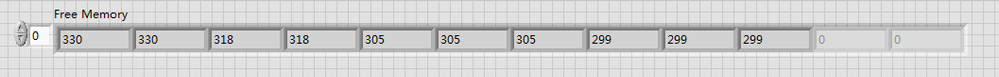

3. Now delete the generated files through WinSCP and run the VI again. You should get results similar to those in step 1.

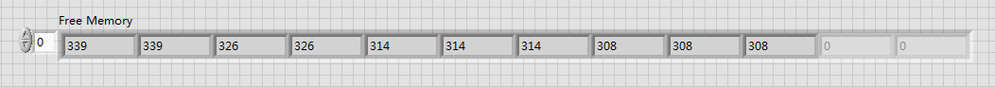

4. I tried one more step: after step 1, I rebooted the target and ran the VI again after the restart. The result is shown below.

Thanks,

Richtian

Tags: RT Linux Memory

01-11-2019 11:20 AM

Not sure if it will help your memory issue, but you do not need that FOR loop in GenerateBinaryFile.vi. I have seen some weird things when it comes to memory leaks, so removing it is worth trying.

01-11-2019 10:04 PM

Thanks for your suggestion, but this isn't practical in my case. The files I attached here are simplified. In my real application, each file operation writes something built from cached data. Of course, I could always build up the content in memory first and then write it to the file in one call, as you suggested. But I'm not sure which approach is better or recommended. Any further comments?
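To make the two options concrete outside LabVIEW, here is a minimal Python sketch. The function names and the chunk data are hypothetical and only illustrate the pattern: one variant writes each chunk as it is produced, the other assembles everything in memory and writes once. It does not settle which is better on the RT target; it only shows that both produce the same file, and that the second trades extra RAM for fewer write calls.

```python
import os
import tempfile


def write_in_chunks(path, chunks):
    """Write each chunk as soon as it is available (one write call per chunk)."""
    with open(path, "wb") as f:
        for chunk in chunks:
            f.write(chunk)


def write_at_once(path, chunks):
    """Assemble the whole payload in memory first, then write it in one call."""
    data = b"".join(chunks)  # needs enough RAM to hold the full payload
    with open(path, "wb") as f:
        f.write(data)


# Hypothetical stand-in for data built from a cache: 64 chunks of 1 KiB each.
chunks = [bytes([i % 256]) * 1024 for i in range(64)]

with tempfile.TemporaryDirectory() as tmp:
    path_a = os.path.join(tmp, "chunked.dat")
    path_b = os.path.join(tmp, "single.dat")
    write_in_chunks(path_a, chunks)
    write_at_once(path_b, chunks)
    with open(path_a, "rb") as fa, open(path_b, "rb") as fb:
        same_content = fa.read() == fb.read()
    size_a = os.path.getsize(path_a)
```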

01-12-2019 07:15 AM - edited 01-12-2019 07:20 AM

First, your use of the term "memory leak" is possibly not correct in a technical sense. I'm not saying that this couldn't be one, but LabVIEW uses dynamic memory allocation that may not always reuse a memory buffer right away after it was marked for deletion. LabVIEW does not allocate and free memory blocks all the way down to the OS memory manager every time; instead it maintains its own list of memory blocks that it has used and can reuse. And since an array needs to be one contiguous memory block, it can very well be that by the time LabVIEW needs your memory block for the audio array again, the allocation from the previous execution has been fragmented by other, smaller memory requests, so that there is no longer a contiguous block of the necessary size in the cache and LabVIEW has to request a new block from the OS. This is not a memory leak in itself: LabVIEW still knows about the allocated memory and will reuse it whenever possible, but it's fragmented and can't be reused for the next big allocation.

That said, I'm a little sceptical about your use of the System Session to retrieve the free memory. I have seen some weird situations where executing the property node seems to allocate a new refnum every time without properly releasing the previous one. And even if the previous refnum is released, it still makes fragmentation of your memory much more likely.
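The fragmentation effect described here can be illustrated with a toy model in Python. This is not LabVIEW's actual memory manager; the pool size, the first-fit policy, and all function names are assumptions chosen for illustration. A large block is allocated and released, small allocations then land inside the released region, and afterwards the pool holds enough free blocks in total but no contiguous run large enough for the big request.

```python
def largest_free_run(blocks):
    """Return the length of the longest run of free (False) blocks."""
    best = run = 0
    for used in blocks:
        run = 0 if used else run + 1
        best = max(best, run)
    return best


def allocate(blocks, size):
    """First-fit: mark `size` contiguous free blocks as used.

    Returns the start index, or None if no contiguous run is large enough.
    """
    run = 0
    for i, used in enumerate(blocks):
        run = 0 if used else run + 1
        if run == size:
            start = i - size + 1
            blocks[start:i + 1] = [True] * size
            return start
    return None


def release(blocks, start, size):
    """Mark `size` blocks starting at `start` as free again."""
    blocks[start:start + size] = [False] * size


pool = [False] * 10            # toy pool of 10 equal-sized blocks, all free

big = allocate(pool, 8)        # the large array takes blocks 0..7
release(pool, big, 8)          # ...and is marked reusable afterwards

a = allocate(pool, 2)          # small requests land in the released region
b = allocate(pool, 2)
c = allocate(pool, 2)
release(pool, a, 2)            # two of them are released again,
release(pool, c, 2)            # but `b` stays in the middle

total_free = pool.count(False)     # 8 blocks are free in total
longest = largest_free_run(pool)   # but the longest contiguous run is 6
retry = allocate(pool, 8)          # the big request no longer fits: None
```

In this model a failed `allocate` corresponds to the allocator having to request a fresh block from the OS even though the pooled memory is still tracked.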

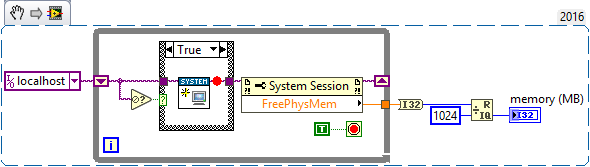

So can you try with this VI instead for querying the memory? It will also tend to speed up the execution of the FreePhysMem node as it won't need to lookup the refnum each time based on the name.

01-13-2019 07:41 PM

Hey, Rolf

Thanks for your suggestion. I tried it and found no difference.

I agree with you that this is probably not a memory leak in LabVIEW. After checking with several Linux guys, I suspect that this is expected behavior given how memory management works on Linux. I'm posting it here because I want to get insights on this topic from more experienced LabVIEW experts. I just want to make sure that my RT application can run stably even with these phenomena.
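One way to check the Linux explanation outside LabVIEW is to compare the fields in `/proc/meminfo` before and after the file writes. On Linux, recently written file data stays in the page cache, which lowers `MemFree` while the memory remains reclaimable; `MemAvailable` is the kernel's own estimate of what can still be handed to applications. The sketch below is a minimal Python parser for that file. The helper names and the sample numbers are hypothetical, and it is an assumption that the free physical memory value read in the VI tracks `MemFree` rather than `MemAvailable`.

```python
from pathlib import Path


def parse_meminfo(text):
    """Parse /proc/meminfo-style text into a dict of integer values (kB)."""
    info = {}
    for line in text.splitlines():
        key, sep, rest = line.partition(":")
        fields = rest.split()
        if sep and fields and fields[0].isdigit():
            info[key.strip()] = int(fields[0])
    return info


def summarize(info):
    """Pick out free memory, page cache plus buffers, and MemAvailable (kB)."""
    return {
        "free_kb": info.get("MemFree", 0),
        "cached_kb": info.get("Cached", 0) + info.get("Buffers", 0),
        "available_kb": info.get("MemAvailable"),  # None on very old kernels
    }


# Illustrative numbers only, not measurements from the cRIO or sbRIO.
SAMPLE = """MemTotal:        2048000 kB
MemFree:          500000 kB
MemAvailable:    1500000 kB
Buffers:           20000 kB
Cached:          1000000 kB
"""

if __name__ == "__main__":
    path = Path("/proc/meminfo")
    # Fall back to the sample text when not running on Linux.
    text = path.read_text() if path.exists() else SAMPLE
    print(summarize(parse_meminfo(text)))
```

If `cached_kb` grows by roughly the size of the written files while `available_kb` stays nearly constant, the drop in free memory is page cache rather than a leak. That would also be consistent with the free memory returning after the files are deleted in step 3 and after the reboot in step 4.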

Thanks,

Richtian