Lowercase to Uppercase and Uppercase to Lowercase

12-13-2018 11:38 AM

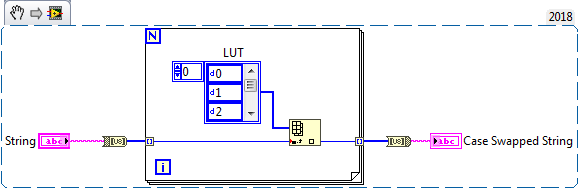

To quote Monty Python, "And now for something completely different": how about using a lookup table (LUT) with the uppercase and lowercase entries swapped?
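
Since the block diagram doesn't translate to text, here is a rough sketch of the LUT idea in Python rather than LabVIEW. The names `build_swap_table`, `SWAP_TABLE` and `swap_case_lut` are made up for illustration, and it assumes plain ASCII input:

```python
def build_swap_table() -> bytes:
    """Build a 256-entry table that maps each byte to its case-swapped value."""
    table = bytearray(range(256))  # start from the identity mapping
    for upper in range(ord("A"), ord("Z") + 1):
        lower = upper + 32  # in ASCII, lowercase sits 32 above uppercase
        table[upper] = lower
        table[lower] = upper
    return bytes(table)


# Built once; every later conversion is just one lookup per byte.
SWAP_TABLE = build_swap_table()


def swap_case_lut(data: bytes) -> bytes:
    """Swap ASCII case by indexing each byte into the prebuilt table."""
    return data.translate(SWAP_TABLE)
```

Calling `swap_case_lut(b"Hello, World 123")` gives `b"hELLO, wORLD 123"`; digits and punctuation map to themselves because their table entries were never changed.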

12-13-2018 11:40 AM

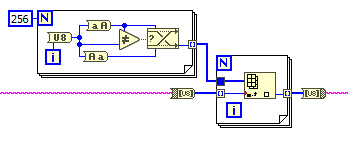

In Range and Coerce is slightly slower (by about 20%) than Is Equal? to (4 OR 5), so you could gain a teeny amount of speed there. They're both blazingly fast though: running 100,000,000 numbers through either of them took about a quarter of a second.
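
For readers without the snippet, a range-check version could look roughly like this in Python. It is only a sketch of the general approach, not the VI from the image: the function names are hypothetical, and flipping bit 5 with XOR `0x20` is my assumption about how the case gets swapped once the range test passes.

```python
def swap_case_byte(b: int) -> int:
    """Swap the case of one ASCII byte; leave every other value untouched."""
    # Range test, roughly what In Range and Coerce would do for each alphabet.
    if 0x41 <= b <= 0x5A or 0x61 <= b <= 0x7A:  # 'A'..'Z' or 'a'..'z'
        return b ^ 0x20  # bit 5 is the only difference between the two cases
    return b


def swap_case_by_range(data: bytes) -> bytes:
    """Apply the per-byte range check to a whole byte string."""
    return bytes(swap_case_byte(b) for b in data)
```

The neighbours of the letter ranges (`@`, `[`, `` ` ``, `{`) are the values worth testing, since an off-by-one in either limit would change them.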

12-13-2018 11:45 AM

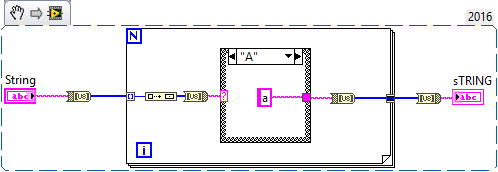

Or something even more differenter:

😉

12-13-2018 11:48 AM

@BertMcMahan wrote:

Or something even more differenter:

😉

Why have the Build Array and Byte Array To String? Just wire the numeric to the case selector terminal. It won't be as readable, since a numeric case selector has no ASCII display mode, but it does have hex.

12-13-2018 12:06 PM

Even if you want the string for readability, the Rube Goldberg combo (Build Array into a single-element U8 array... Byte Array To String) could be replaced by a simple Type Cast.

12-13-2018 12:17 PM

Yes, a LUT would be the way to go in deployed code. That's what I usually do.

(... But unless you want to create the LUT by hand, you still need one of our algorithms once :D)

12-13-2018 12:31 PM

@altenbach wrote:

(It is a little-known fact that many string functions work perfectly on U8)

I did not know that! I want to change my answer to this 😛

12-13-2018 01:38 PM

@paul_cardinale wrote:

OK. But I'm usually more concerned with speed than with size and this is about 30 times faster than this:

True, but the LUT is still 4x faster. You can even create it on the fly and it will be constant folded without any speed penalty. 😄

12-13-2018 01:43 PM - edited 12-13-2018 01:44 PM

@Hooovahh wrote:

@BertMcMahan wrote:

Or something even more differenter:

😉

Why have the Build Array and Byte Array To String? Just wire the numeric to the case selector terminal. It won't be as readable, since a numeric case selector has no ASCII display mode, but it does have hex.

I just wanted to make something intentionally Rube Goldberg; I didn't mean for it to be a particularly "good" solution 🙂

12-13-2018 03:04 PM

@altenbach wrote:

@paul_cardinale wrote:

OK. But I'm usually more concerned with speed than with size and this is about 30 times faster than this:

True, but the LUT is still 4x faster. You can even create it on the fly and it will be constant folded without any speed penalty. 😄

On a side note, the FOR loop can be parallelized, but that actually makes it slower for smaller strings. For gigantic strings, however, it is way faster than the same code with a plain FOR loop (e.g. 1k characters: 10x slower, 1M characters: ~4x faster, 10M characters: ~6-8x faster). This is on an old 16-core dual Xeon.
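
To show the shape of that trade-off in text form, here is a hedged Python sketch that splits the string into chunks and only goes parallel above a size threshold. The name `swap_case_chunked`, the `workers` default and the 1 MiB `min_chunk` cutoff are all assumptions picked for illustration, not values measured in LabVIEW. Python threads may also not give the speedups quoted above, so treat it as the structure only.

```python
from concurrent.futures import ThreadPoolExecutor

# 256-entry case-swap table: flip bit 5 for ASCII letters, identity otherwise.
SWAP_TABLE = bytes(
    b ^ 0x20 if (65 <= b <= 90 or 97 <= b <= 122) else b for b in range(256)
)


def swap_case_chunked(data: bytes, workers: int = 4, min_chunk: int = 1 << 20) -> bytes:
    """Swap ASCII case, splitting large inputs across worker threads."""
    # Small inputs: the splitting overhead outweighs any gain, so use one pass.
    if len(data) <= min_chunk or workers < 2:
        return data.translate(SWAP_TABLE)
    chunk = -(-len(data) // workers)  # ceiling division, one chunk per worker
    pieces = [data[i:i + chunk] for i in range(0, len(data), chunk)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map keeps input order, so the joined result lines up with data.
        return b"".join(pool.map(lambda p: p.translate(SWAP_TABLE), pieces))
```

Each byte is converted independently of its neighbours, which is why the work can be cut at arbitrary chunk boundaries without changing the result.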