Looking for a way to create a linked file in Windows from multiple existing files

05-01-2018 06:35 PM

So hear me out. Let's say I have two large files on disk. These files will be merged at the end of a process, but I don't want to wait for the end of the process to merge some of them for convenience of reading them. I could copy the two files, then merge them, then get rid of the merge, but I feel like that is a waste of file I/O operations, especially if the files are large. I could work with both files independently and, when I get to the end of one file, open the next. But what would be simpler would be to make a linked file, or a virtual file: a file that doesn't really exist and is just a link to a real file. But the trick is I don't want this link to be just one file; I want this linked file to concatenate with another one.

C:\Temp\1.tdms - 1GB file

C:\Temp\2.tdms - 1GB file

C:\Temp\Merged.tdms - linked file that, when opened, has the contents of 1.tdms and 2.tdms.

Is this possible? I searched around and only found references to mklink, junctions, and other ways of mapping real files to another virtual place on disk. This is part of what I want, but I'd also like to combine the files in a virtual sense. Any thoughts? As I said, I could combine them so Merged.tdms is a real file at 2GB in size, but making that copy will probably take a while. And I could write code to read from 1.tdms and then, when it gets to the end, read from 2.tdms, but that complicates functions quite a bit, especially when there is likely a 3.tdms or 4.tdms.

Unofficial Forum Rules and Guidelines

Get going with G! - LabVIEW Wiki.

16 Part Blog on Automotive CAN bus. - Hooovahh - LabVIEW Overlord

05-03-2018 03:44 AM

I really don't see how that would work. Both TDMS files have headers in them, so physically concatenating them would simply result in an invalid file. But even if both data files were "raw", I've never heard of a way to concatenate them without actually adding the data of the 2nd file to the first.

My solution would be (as always) to do the abstraction in a class. So I'd make a parent class "data file reader". Maybe it would have a child "single file data reader" and a child "multiple file data reader". All the magic would happen in the classes, so the entire program would not need to know if it's looking at a single file or not. The multiple file class could contain multiple single file objects.
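A minimal sketch of that class layout, in Python rather than LabVIEW. The class and method names (`DataFileReader`, `SingleFileReader`, `MultiFileReader`, `sample_count`, `read`) are made up for illustration, and the single-file reader holds an in-memory list instead of doing real file I/O:

```python
from abc import ABC, abstractmethod
from typing import List, Sequence


class DataFileReader(ABC):
    """Parent class: the rest of the program only talks to this interface."""

    @abstractmethod
    def sample_count(self) -> int:
        ...

    @abstractmethod
    def read(self, start: int, count: int) -> List[float]:
        ...


class SingleFileReader(DataFileReader):
    """Stand-in for one data file (an in-memory list replaces real file access)."""

    def __init__(self, samples: Sequence[float]) -> None:
        self._samples = list(samples)

    def sample_count(self) -> int:
        return len(self._samples)

    def read(self, start: int, count: int) -> List[float]:
        return self._samples[start:start + count]


class MultiFileReader(DataFileReader):
    """Holds several readers and presents them as one continuous stream."""

    def __init__(self, readers: Sequence[DataFileReader]) -> None:
        self._readers = list(readers)

    def sample_count(self) -> int:
        return sum(r.sample_count() for r in self._readers)

    def read(self, start: int, count: int) -> List[float]:
        # Assumes start and count are non-negative.
        out: List[float] = []
        for reader in self._readers:
            if count <= 0:
                break
            n = reader.sample_count()
            if start >= n:
                start -= n  # requested range begins in a later file
                continue
            chunk = reader.read(start, count)
            out.extend(chunk)
            count -= len(chunk)
            start = 0  # any following file is read from its beginning
        return out


merged = MultiFileReader([SingleFileReader([1.0, 2.0, 3.0]),
                          SingleFileReader([4.0, 5.0])])
print(merged.sample_count())  # 5
print(merged.read(2, 2))      # [3.0, 4.0]
```

The calling code never needs to know whether it holds a single-file or a multiple-file reader, which is the point of the abstraction.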

If there is a "data file writer" class, a stream from the reader to the writer would create a single file from multiple files. Data file writer could also have two children: single file and multiple files. One for saving multiple to single, one for storage during measurement.

I've done this a lot. Not with single\multiple, but for instance with txt\binary files (or even txt\bin1\bin2). It's been very convenient to me. In your case it could be more difficult, but all complexity would be contained nicely.

05-03-2018 03:57 AM

Knowledge can be a curse, and abstractions can be a hindrance.

Yes, according to any modern file system, it should be possible to add a pointer to the end of the first file pointing to the start of the second. You are probably aware of this, hence your curiosity and correct assumption that a copy-merge is actually doing more work than absolutely (theoretically) necessary. This is of course assuming that simply appending both files makes sense logically, which with TDMS, I'm not sure is the case at all.

This is generally not exposed on the OS level, as most users would just mess up. So the abstraction from the OS point of view won't allow you an easy path to do this, and every other avenue is error-strewn. So you're in the position of knowing that it's theoretically possible (if likely nonsensical logically) but can't do it because someone has designed the abstraction in a manner which won't allow that level of fine-grained control.

There may be tools which allow you to do this somewhere, but I'd just read both files and write them.
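For reference, the plain "read both files and write them" route can be sketched as below. This is a byte-level copy only, with a hypothetical helper name (`concatenate_files`) and an arbitrary 1 MiB chunk size; whether the resulting bytes form a valid file for a given format is a separate question that this sketch does not answer.

```python
import shutil
from pathlib import Path
from typing import Iterable, Union

PathLike = Union[str, Path]


def concatenate_files(sources: Iterable[PathLike],
                      destination: PathLike,
                      chunk_size: int = 1024 * 1024) -> None:
    """Copy each source file, in order, into one destination file.

    Data is streamed in chunks so that large inputs are never held
    in memory all at once.
    """
    with open(destination, "wb") as out:
        for src in sources:
            with open(src, "rb") as f:
                shutil.copyfileobj(f, out, chunk_size)
```

Every byte is still read once and written once, so the cost grows with the total size of the inputs, which is exactly the work a virtual file would avoid.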

05-03-2018 06:53 AM

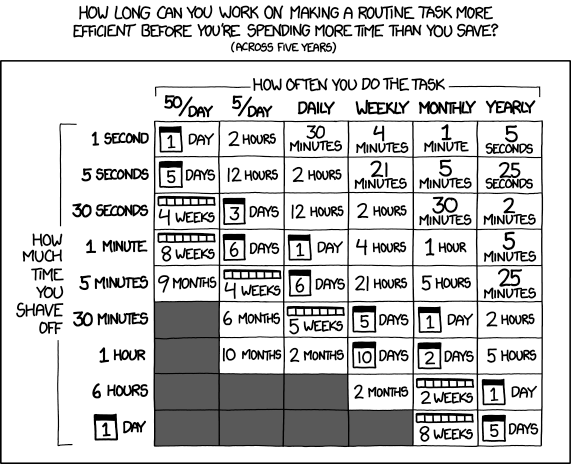

Oh man, sorry Randall. Forgot to add the proper reference to xkcd, whose webcomics I refer to on a regular basis.

Graphic taken from: https://xkcd.com/1205/

05-03-2018 07:50 AM - edited 05-03-2018 07:50 AM

Concatenating TDMS files is a feature of the file format. Since the TDMS file is written in chunks, the header information is repeated with each chunk, which is why fragmentation may occur if header information is written too often. Because of this design choice you can concatenate two TDMS files into one just by appending it and the data is read properly. Quite handy.

Yes, there are other ways of doing this, and I'm not 100% against them. I just recognize that the easiest way from a developer standpoint would be to have a tool which creates a virtual file, so that all normal TDMS reading can be done using the already existing primitives. Otherwise I need to write an abstraction layer which works on multiple files but is referenced as if it were one. For instance, I may have a channel I want to read, but there is too much data to read it all at once. So I will use the TDMS function to tell me how many values there are in the signal. If it says 100,000, maybe I load the first 10% into a table, and if the user scrolls down, I load the next 10%. With this spanning an unknown number of TDMS files, doing a query like "how many samples are there" would involve more work. Similarly, loading 10% of all samples may span an unknown number of files. Doable for sure, but a magic command line call to create a virtual spanning file would solve this issue.
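The bookkeeping for that spanning case can be sketched in Python as follows. The function name `plan_reads` and the 60,000 / 40,000 split are assumptions for illustration; the per-file sample counts would come from whatever "number of values" query the real reader offers.

```python
from bisect import bisect_right
from itertools import accumulate
from typing import List, Sequence, Tuple


def plan_reads(counts: Sequence[int], start: int,
               length: int) -> List[Tuple[int, int, int]]:
    """Map a global sample range onto per-file reads.

    counts[i] is the number of samples in file i. Returns a list of
    (file_index, local_start, local_count) tuples in file order.
    Assumes start and length are non-negative.
    """
    ends = list(accumulate(counts))      # global end index of each file
    total = ends[-1] if ends else 0
    stop = min(start + length, total)    # clamp to the available data
    plan: List[Tuple[int, int, int]] = []
    i = bisect_right(ends, start)        # first file that contains `start`
    pos = start
    while pos < stop and i < len(counts):
        file_start = ends[i] - counts[i]
        local = pos - file_start
        n = min(counts[i] - local, stop - pos)
        if n > 0:
            plan.append((i, local, n))
        pos += n
        i += 1
    return plan


# Hypothetical split: 100,000 samples stored as 60,000 + 40,000.
counts = [60_000, 40_000]
total = sum(counts)                      # the "how many samples" query
page = total // 10                       # one 10% page
print(plan_reads(counts, 5 * page, page))   # [(0, 50000, 10000)]
print(plan_reads(counts, 55_000, page))     # [(0, 55000, 5000), (1, 0, 5000)]
```

The total count is a simple sum, and a page that straddles a file boundary becomes two smaller reads, so the extra work is real but stays confined to one helper.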

05-03-2018 07:59 AM - edited 05-03-2018 08:02 AM

@Hooovahh wrote:

> Concatenating TDMS files is a feature of the file format. Since the TDMS file is written in chunks, the header information is repeated with each chunk, which is why fragmentation may occur if header information is written too often. Because of this design choice you can concatenate two TDMS files into one just by appending it and the data is read properly. Quite handy.

I'm definitely not confident that this is 100% accurate given the stated parameters of your original question.

I waded through the TDMS file specification once because I was observing some terrible speed issues which I could not understand. My understanding (limited as it is) is as follows:

The header parameters (such as file offset) are valid only within the context of a single file object. Appending two different file contexts will invalidate the pointers (expressed in bytes, not disk location) because the zero offset will have changed. Because of this, you will need to re-parse all pointers within the second TDMS file; simple appending will not work. Using a TDMS function to append to an existing file will do the pointer acrobatics for you (it will apply the old file context to the new data). Simply appending on a file system level knows nothing of the internal mumbo jumbo, and so the result will not be equivalent.

I realise this flies completely in the face of the information found at the link you provide. I'm willing to learn more. Am I perhaps confusing TDM and TDMS?

05-03-2018 08:14 AM

I'm sorry to go on about this, but I'm very interested in this idea. I instinctively thought such an operation would not yield a valid file. Seeing how I ran into performance problems with TDMS writing in the past (exponential slowdown with the number of individual packets being written), the idea of actually writing multiple files and concatenating later could help me control the exponential growth. By auto-restarting a new TDMS file every N packets instead of appending to the old one, I might be able to maintain speed as required.

BTW: Writing a TDMS file in advanced mode parses each packet from beginning to end before working out where to write the next data. This slows things down a lot. It's not a file format problem, but a code implementation issue. Splitting into several files, while not an elegant solution, could serve as a performance stop-gap.

05-03-2018 08:45 AM - edited 05-03-2018 08:46 AM

Oh yeah, merging two files together is a great way to avoid some of the issues found with TDMS. I do have code which can set up a logging module to split into a new file at given time intervals or file sizes. With this concept I will have an RT system log into a temp location and make new files as needed. When I start a new file I put the old file into a Done folder. My host OS monitors this folder and if it finds a file sends it over to the host. This way the RT doesn't just fill up with data, and when a test stops most of the data is already on the host. Then the host just combines all of these files when the test is done and generates a report. My reasoning for looking for this virtual file feature is so that I could read the partial data sent over before the test is over and the merge and report generation starts. Here is a slide from a TDMS presentation I gave where I mention this feature.

That being said I think I can come up with a decent enough solution without needing virtual files. Thanks for your help.
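The split-and-move logging scheme described above can be sketched roughly as follows, in Python rather than LabVIEW and with plain binary files instead of TDMS. The class name `RotatingLogger`, the file naming pattern, and the size-only trigger are all assumptions for illustration; the transfer to the host and the final merge are left out.

```python
import shutil
from pathlib import Path
from typing import Optional, BinaryIO


class RotatingLogger:
    """Write to a temp folder; move each finished file to a Done folder."""

    def __init__(self, temp_dir: str, done_dir: str,
                 max_bytes: int, stem: str = "log") -> None:
        self.temp_dir = Path(temp_dir)
        self.done_dir = Path(done_dir)
        self.max_bytes = max_bytes
        self.stem = stem
        self._index = 0
        self._written = 0
        self._path: Optional[Path] = None
        self._file: Optional[BinaryIO] = None

    def _open_next(self) -> None:
        self._index += 1
        self._path = self.temp_dir / f"{self.stem}_{self._index:04d}.bin"
        self._file = open(self._path, "wb")
        self._written = 0

    def _finish_current(self) -> None:
        # Close first, then move, so a watcher never sees a partial file.
        self._file.close()
        shutil.move(str(self._path), str(self.done_dir))
        self._file = None
        self._path = None

    def write(self, data: bytes) -> None:
        if self._file is None:
            self._open_next()
        self._file.write(data)
        self._written += len(data)
        if self._written >= self.max_bytes:
            self._finish_current()  # next write starts a new file

    def close(self) -> None:
        if self._file is not None:
            self._finish_current()
```

Because a file only appears in the Done folder after it has been closed, whatever process watches that folder can treat every file it finds as complete.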
