Logging large LabVIEW Objects.... What would you do?

12-06-2016 07:26 AM

Hello all,

I'm working on an application that gets a lot of input from different sources: 30 channels of ultrasound and 30 optical tracking markers. These inputs are tied together, along with some variables that help the post-processing of the data, in an LVOOP object. Going LVOOP has helped a lot during development because the data is nested in the hierarchy. But now it comes to logging, I'm a bit at a loss, and I hope you can help me.

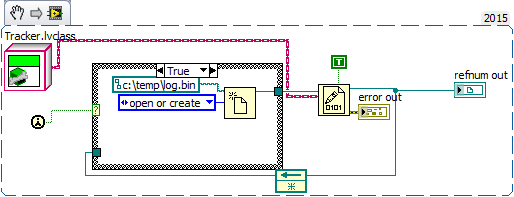

I did a small test to see how much data I'm gathering, and it boils down to ~5GByte per minute of logging. This is how I do a very crude logging action, which is of course dead ugly, but useful to get the rough picture:

Now I have run into a problem: I cannot read the data back to check whether what I wrote makes sense at all. "Read from Binary File" takes the number of bytes as an input argument, and I don't know the byte size of the object. Even if I did, the "cast" operator does not take LabVIEW objects as input arguments.

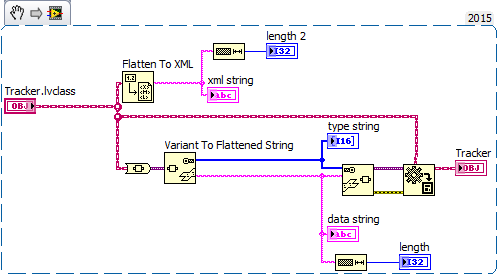

Another approach I thought of was to cast the object to a variant, flatten that to string, and write the strings to file. That would lead to even more data, but I have not tried it yet. The process to go from object to string and back is depicted below:

I would log the 'data string', because I can read those back with "read from file".
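The "unknown byte size" problem described above is commonly handled by prefixing each record with its length, so the reader never needs to know the size in advance. The Python sketch below illustrates that generic framing idea only; it is not LabVIEW's actual on-disk format, and the function names `write_record` and `read_records` are hypothetical.

```python
import io
import struct


def write_record(stream, payload: bytes) -> None:
    """Write one record as a 4-byte big-endian length followed by the payload."""
    stream.write(struct.pack(">I", len(payload)))
    stream.write(payload)


def read_records(stream):
    """Yield payloads one by one until the stream is exhausted."""
    while True:
        header = stream.read(4)
        if len(header) < 4:
            return  # clean end of file (or a dangling partial header)
        (size,) = struct.unpack(">I", header)
        payload = stream.read(size)
        if len(payload) < size:
            raise ValueError("truncated record")
        yield payload


# Round trip through an in-memory buffer standing in for the log file.
buf = io.BytesIO()
for blob in (b"first acquisition", b"", b"third, longer acquisition"):
    write_record(buf, blob)
buf.seek(0)
records = list(read_records(buf))
```

The cost is four extra bytes per record, which is negligible next to multi-megabyte acquisitions.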

In initial tests I used TDMS, but then the data was not as nested as it is now, and we only sampled once every second where we're now doing acquisitions at 20Hz.

My question is: how do you deal with stuff like this? How do you log large, complex datasets? We only need to record 1-10 minutes (experimental data), I'd be interested in what solutions you have come up with.

12-06-2016 07:38 AM

Don't try to save the LVOOP object. Instead, create a DataSave method in the class and use it to save the data in a format more suitable for your testing purposes. Binary would now be feasible, since you would know the size of the data stream directly.

Certified LabVIEW Developer.
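As a rough illustration of the "save method in the class" idea, here is a Python sketch rather than LabVIEW code. The record layout (one timestamp, 30 ultrasound channels of `SAMPLES` int16 values, 30 markers with x/y/z as float32), the value of `SAMPLES`, and the names `Acquisition`, `save` and `load` are all assumptions made for the example; only the 30/30 channel counts come from the thread.

```python
import io
import struct
from dataclasses import dataclass

N_CHANNELS = 30  # ultrasound channels (from the thread)
N_MARKERS = 30   # optical tracking markers (from the thread)
SAMPLES = 4      # hypothetical samples per ultrasound channel

# Assumed layout, little-endian, no padding:
# float64 timestamp, int16 ultrasound samples, float32 marker x/y/z.
RECORD = struct.Struct(f"<d{N_CHANNELS * SAMPLES}h{N_MARKERS * 3}f")


@dataclass
class Acquisition:
    timestamp: float
    ultrasound: list  # flat list of N_CHANNELS * SAMPLES ints
    markers: list     # flat list of N_MARKERS * 3 floats

    def save(self, stream) -> None:
        """Write only the raw data, as one fixed-size record."""
        stream.write(RECORD.pack(self.timestamp, *self.ultrasound, *self.markers))

    @classmethod
    def load(cls, stream):
        """Read one fixed-size record, or return None at end of file."""
        raw = stream.read(RECORD.size)
        if len(raw) < RECORD.size:
            return None
        values = RECORD.unpack(raw)
        n = N_CHANNELS * SAMPLES
        return cls(values[0], list(values[1:1 + n]), list(values[1 + n:]))


acq = Acquisition(
    timestamp=1.25,
    ultrasound=list(range(N_CHANNELS * SAMPLES)),
    markers=[0.5 * i for i in range(N_MARKERS * 3)],
)
buf = io.BytesIO()
acq.save(buf)
buf.seek(0)
restored = Acquisition.load(buf)
```

Because every record has the same known size (`RECORD.size`), the reader knows exactly how many bytes to request, which is the point made in the post above.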

12-06-2016 07:39 AM

83 Mbytes/second to disk?

That is an impressive disk subsystem.

I have not recently tried to write and read an instance of a LVOOP class to and from disk (LV 8.2 was maybe the last time I used that feature), but it looks like you can still just wire the LVOOP class wire to "Read from Binary File" to read the class instance from file. Have you tried that?

Otherwise...

For demanding logging needs I make sure the data is in the most condensed and concise form, so it can be written to and read from disk as quickly as possible. While raw binary files are traditionally the fastest, TDMS has features that support streaming to disk.

I do not know if any of the above helps, but that is all I have to offer this morning before the coffee starts to work.

Ben

12-06-2016 03:34 PM

As Ben points out, you just need to tell "Read from Binary File" what the Object class is; you don't need to know the number of bytes.

12-07-2016 02:49 PM

@drjdpowell wrote:

As Ben points out, you just need to tell "Read from Binary File" what the Object class is; you don't need to know the number of bytes.

Not that it is a good idea for the purpose of this thread, but the "read object from file" option has a rather interesting built-in feature: LVOOP can track edits of the object's private data definition and can read object data from a file written before the private data was changed. Built-in backward compatibility.

Ben

12-07-2016 03:37 PM

I would also take into account the convenience of data analysis after the log is done. How are you going to find your way through those GBs of data to process them? I guess you have some markers to find events; as it stands, you would need to scan through all the data to find the required information.

I would separate the big arrays of the 30 ultrasound channels from the other data and stream them into a file with a predefined size ("Set file size.vi" or "Tdms reserve file size" before writing data). Then, if a second "events" file tells me that something I need happened at second 23.51, I can calculate the data file offset and read only a small part of the data.
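The offset calculation can be sketched as follows in Python. It assumes fixed-size records written at a constant rate; the 20 Hz rate is taken from the thread, while `RECORD_SIZE`, `record_offset` and `read_window` are hypothetical names and values chosen for the example.

```python
import io
import struct

RATE_HZ = 20      # acquisition rate mentioned in the thread
RECORD_SIZE = 16  # hypothetical: two float64 values per record


def record_offset(t_seconds, rate_hz=RATE_HZ, record_size=RECORD_SIZE):
    """Byte offset of the record that covers time t_seconds."""
    # The small epsilon guards against float round-off just below an integer.
    index = int(t_seconds * rate_hz + 1e-9)
    return index * record_size


def read_window(stream, t_start, t_end):
    """Read only the records between t_start (inclusive) and t_end (exclusive)."""
    start = record_offset(t_start)
    end = record_offset(t_end)
    stream.seek(start)
    return stream.read(end - start)


# Stand-in data file: 600 records (30 s at 20 Hz), each holding (time, index).
buf = io.BytesIO()
for i in range(600):
    buf.write(struct.pack("<dd", i / RATE_HZ, float(i)))

# An event at second 23.51: fetch the 0.1 s of data starting there.
chunk = read_window(buf, 23.51, 23.61)
first = struct.unpack("<dd", chunk[:RECORD_SIZE])
```

Only two records are read here instead of the whole file, which is what makes this layout attractive for multi-gigabyte logs.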

Also, how much extra data (channel names, temporary variables, etc.) does the class store with _every_ write? Is 80 MB/s an estimate of the data size, or the real write rate as shown?

+100 to Mythilt: method in class, writing data in compact format

12-08-2016 04:00 AM

Hello Alexander,

We want to do some real-time processing (motion tracking) on this data, but we cannot do it in real time yet. That's why I write everything to file for now. In the end we'll just process all the input data and extract the motion features, and maybe in a few years we'll know exactly what the minimum data needed is, or computers will have become so fast that I do not need to worry anymore.

For now, this is a research project where I've gone to great lengths to get all my precious data, and by investigating several ways of post-processing we'll learn what data we really need. Until that time I'll have very large log files without clear 'events' that I'm interested in.

I'll reply to the rest of you after my next meeting 🙂

12-08-2016 04:41 AM

Why would you cast it to variant and then flatten to string?

Those strings aren't intelligible. They're casting so you can send strings around and get different data types from point A to point B. It's like saying "I'm going to cast to variant and then cast again to a less efficient variant."

If you're casting, only do one or the other. If your transmission method requires strings, use that. If not, use variants. Some quick benchmarking should show you the variants tend to outperform the strings. But, variants aren't as useful if you're going to do something like send them across a VISA connection, as an example.

12-08-2016 02:36 PM

I'm not sure I understand what you mean by "nested" data, but in any case, you mention what I think is your best solution - write to a TDMS file. Especially if you are simply logging raw data for later processing, and <100 "channels" at 20Hz is not very much really (I'm not sure how you get 5GB/minute from that).
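For reference, the figures quoted in the thread can be turned into a per-acquisition size with some quick arithmetic. This Python sketch assumes decimal gigabytes (1 GB = 10^9 bytes) and that the ~5 GB/minute figure from the original post is accurate; nothing else is assumed about the data layout.

```python
BYTES_PER_MINUTE = 5e9  # ~5 GB per minute, as reported (decimal GB assumed)
RATE_HZ = 20            # acquisitions per second, as reported

bytes_per_second = BYTES_PER_MINUTE / 60
bytes_per_acquisition = bytes_per_second / RATE_HZ
ten_minute_log = BYTES_PER_MINUTE * 10

print(f"{bytes_per_second / 1e6:.1f} MB/s")                    # 83.3 MB/s
print(f"{bytes_per_acquisition / 1e6:.2f} MB per acquisition")  # 4.17 MB per acquisition
print(f"{ten_minute_log / 1e9:.0f} GB for a 10-minute run")     # 50 GB for a 10-minute run
```

So each 20 Hz acquisition would have to carry roughly 4 MB, which suggests each "channel" holds a sizeable array rather than a single value, or that the object carries significant extra data with every write.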

12-14-2016 05:02 AM

As others have said - don't try to write the class object directly to a file. Take the data you need out of the class with a 'to <string/binary/array> data' method and then write that data to file (e.g. binary, TDMS, CSV etc.). As others have mentioned, objects contain additional metadata, which adds overhead.