LabVIEW 2012 has a longer execution time than LabVIEW 2011 when writing strings on XP SP3

07-29-2014 06:57 AM - edited 07-29-2014 07:03 AM

@ELI2011 wrote:

I still want to know why LabVIEW 2012 differs from LabVIEW 2011 here: writing a large string to a front panel indicator in a For Loop takes a long time to execute.

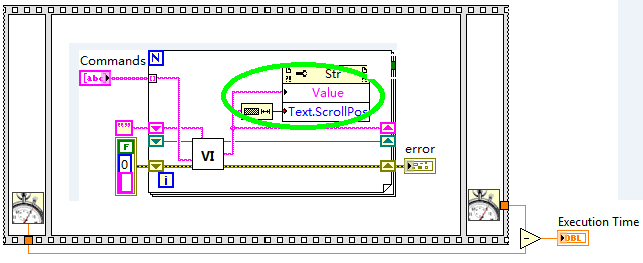

Judging by the picture you included, your code is not accurately computing execution time anyway... So what basis do you have to conclude this?

Are you seeing noticeable lag/delay on the front panel output?

Going off of the timing calculations you are currently trying to do is just silly. You don't show us where you initialize the "start time". You also have the timing calculations in a separate parallel loop for some reason, and on top of that, you are mindlessly overwriting the timing calculations over and over during each iteration. This will eat up a ton of CPU time and will slow down your entire VI execution.

EDIT:

Per altenbach's suggestions, try something like this: (*Note: This was poorly drawn in Microsoft Paint, but it should get the idea across, I hope.)

If someone helped you out, please select their post as the solution and/or give them Kudos!

07-29-2014 08:35 AM

Also, with 2012, it works fine if I change the OS language from Chinese XP to English.

So, can we focus on this difference in behavior between LabVIEW 2011 and 2012 on the Chinese XP OS?

"I think therefore I am"

07-29-2014 08:40 AM - edited 07-29-2014 08:41 AM

@ELI2011 wrote:

My concern is that the same source code works fine with LabVIEW 2011 on Chinese XP, but its execution time doubles after upgrading to LabVIEW 2012.

Also, with 2012, it works fine if I change the OS language from Chinese XP to English.

So, can we focus on this difference in behavior between LabVIEW 2011 and 2012 on the Chinese XP OS?

There are probably a good number of changes between LabVIEW 2011 and LabVIEW 2012. For instance, here is a list: http://zone.ni.com/reference/en-XX/help/371361J-01/lvupgrade/labview_features/

But that is irrelevant either way. You have provided absolutely no proof that the code runs any slower on LabVIEW 2012. Your current VI, as-is, does NOT calculate execution time. Please tell me how you determined that it runs slower.

If you actually calculate valid execution times like we demonstrated, then maybe we can suggest reasons why (if at all) it runs slower.

If someone helped you out, please select their post as the solution and/or give them Kudos!

07-29-2014 09:53 AM

Here is how the execution time is calculated: the test program is a state machine (While Loop + Case Structure), and the Case Structure contains the following steps: "Initialize" -> "Testing" -> "Error Handle" -> "Database" -> "Complete".

In the Initialize step, it records the start time as shown in the figure below (I do not have the source code on this laptop, so I am giving a sample here; I hope it makes sense):

Then, in the Testing step (shown in the figure below), it subtracts "start time" from "current time" to get "testing time", which is updated every 10 ms.

After all commands have finished executing, the "startup" trigger becomes false, which stops the time calculation loop. We then get the final test time.

This LabVIEW 2012 code works fine on English XP and Win 7, where the execution time is about 145 seconds, but on Chinese XP the execution time is about 300 seconds.

When the same code runs with LabVIEW 2011 on Chinese XP, the execution time is 145 seconds.

I asked the NI application engineers in Shanghai about this. They think it may be a bug, but they can't identify the root cause, which is why I am asking here.

I highly appreciate your response!

"I think therefore I am"

07-29-2014 10:08 AM - edited 07-29-2014 10:11 AM

It could very well be a bug, and unfortunately, as I don't have Chinese versions of LabVIEW I cannot attempt to replicate this.

The point I was trying to make is that your code is NON DETERMINISTIC and the timing calculations are INACCURATE. Granted, the behind-the-scenes of LabVIEW isn't always what it seems, but usually parallel loops execute in different threads. As a result, many things could happen:

1. The top loop executes first and finishes before the second loop even gets a chance to run (since you flag the "timer" loop to stop when the above loop does). This would result in the fastest execution.

2. The bottom loop could execute a bunch of times before the top loop even gets a chance to run. This could add a lot of time.

3. Both loops could run intermittently. This could cause lots of context switches that add time.

4. They could theoretically run at the same time depending on the cores of your CPU.

... And so on.

This could be affected by simple things such as background processes, number of cores in your CPU, number of threads different versions of LabVIEW allocate to optimize a program, etc, etc.

Those are all over-simplified, but as you can see there are many ways things could go wrong outside of LabVIEW itself (mostly due to the Windows OS). This is why altenbach's recommendation was so good. Not only does it avoid many threading issues that may arise, it also runs MUCH faster than the average case with your code. Even if both loops run simultaneously, your bottom loop will continually "count" as time progresses in your program. His computation calculates execution time more accurately and removes any repeated calculations.
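Since a LabVIEW diagram cannot be pasted as text, here is a rough Python analogy of that idea. It assumes the recommendation boils down to taking one timestamp before the work and one after it, with a single subtraction at the end, instead of recomputing the elapsed time in a parallel loop. `run_commands` and `fake_command` are hypothetical names for illustration, not anything from the original VI.

```python
import time

def run_commands(commands):
    """Run each command once and return the total elapsed time in seconds."""
    start = time.perf_counter()          # one timestamp before the work
    for command in commands:
        command()
    return time.perf_counter() - start   # one subtraction after the work

def fake_command():
    # Hypothetical stand-in for a single test command.
    time.sleep(0.01)

elapsed = run_commands([fake_command] * 5)
print(f"elapsed: {elapsed:.3f} s")
```

Nothing else runs alongside the measured code here, so the result does not depend on how a second loop happens to be scheduled.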

If someone helped you out, please select their post as the solution and/or give them Kudos!

07-29-2014 10:26 AM

I pointed out the benchmarking flaws many days ago, so I assume that this is no longer an issue.

We still don't know the typical loop rate of the code. If it is really fast, I would only set the scroll position a few times per second. It is not economical to hammer property nodes like that.
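As a text-based illustration of that throttling idea (a Python sketch, not LabVIEW code), the expensive update can be wrapped so that it is forwarded only a few times per second no matter how fast the loop spins. `ThrottledUpdater`, its parameters, and the simulated clock below are all assumptions made for the example.

```python
import time

class ThrottledUpdater:
    """Forward at most `max_per_second` calls to an expensive `update` callback."""

    def __init__(self, update, max_per_second=4.0, clock=time.monotonic):
        self._update = update
        self._min_interval = 1.0 / max_per_second
        self._clock = clock
        self._last = None                 # time of the last forwarded update

    def maybe_update(self, value):
        """Call the callback only if enough time has passed; return True if called."""
        now = self._clock()
        if self._last is None or now - self._last >= self._min_interval:
            self._update(value)
            self._last = now
            return True
        return False

# Simulated clock: 32 loop iterations, 62.5 ms apart (2 seconds in total).
ticks = iter(i * 0.0625 for i in range(32))
calls = []                                # stands in for the expensive UI update
updater = ThrottledUpdater(calls.append, max_per_second=4.0, clock=lambda: next(ticks))
for i in range(32):
    updater.maybe_update(i)
print(len(calls), "updates forwarded out of 32 iterations")
```

The loop itself still runs at full speed; only the costly call is skipped on most iterations.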

So the main difference seems to be language version. Are you displaying Chinese characters?

07-29-2014 10:28 AM

Do you have another way to record the process time accurately?

"I think therefore I am"

07-29-2014 10:41 AM

Once you add delays, the wait contributes to the loop rate.

How can you admit that the measurement is flawed, but still insist that the speeds are different? If performance really matters, you need to code this differently. Property nodes in an inner loop are expensive because they are synchronous and require thread switching.

07-29-2014 10:42 AM

It is not the Chinese characters but the string size that doubles the execution time! As I mentioned before, the program has about a hundred commands to execute, and each of them outputs some string, but three of them output a large string (about 160 kB), which makes the later commands execute very slowly. It speeds up if I remove these three commands.

I created sample code that repeatedly writes a large string to a front panel indicator on Chinese XP and English XP, and it reproduces this issue.

"I think therefore I am"

07-29-2014 11:31 AM

@ELI2011 wrote:

I created sample code that repeatedly writes a large string to a front panel indicator on Chinese XP and English XP, and it reproduces this issue.

Then please show us your latest sample code. Thanks.

You still have not given us anything quantitative. "Slowly" can have many meanings! Can you give actual benchmark times for the two scenarios?

160 kB is not large.

Could it be that just the front panel is more sluggish? Do you have overlapping elements?
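To make "benchmark times for the two scenarios" concrete, one possible shape for such a comparison is sketched below in Python (again only an analogy, since the real test is a LabVIEW VI). Each scenario is run several times and the median wall-clock time is reported. `benchmark` and `write_strings` are hypothetical helpers, and the "indicator" is just a variable that gets overwritten, so the numbers say nothing about LabVIEW itself.

```python
import statistics
import time

def benchmark(task, repeats=5):
    """Return the median wall-clock time of `task()` over `repeats` runs."""
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        task()
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)

def write_strings(size_bytes, count=100):
    # Hypothetical stand-in for writing `count` strings to an indicator.
    indicator = ""
    for _ in range(count):
        indicator = "x" * size_bytes
    return indicator

small = benchmark(lambda: write_strings(100))
large = benchmark(lambda: write_strings(160_000))
print(f"small strings: {small * 1e3:.3f} ms, large strings: {large * 1e3:.3f} ms")
```

Reporting both numbers for each OS and LabVIEW version, measured the same way, would give a quantitative basis for the comparison.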