LabVIEW 2019 "Memory is full" - The top-level VI was stopped at SelfRefNode on the block diagram

06-02-2020 02:38 PM - edited 06-02-2020 02:50 PM

Hello,

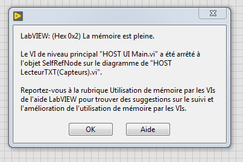

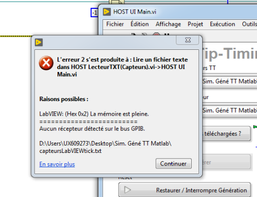

I am having a memory usage problem with my VI and I still haven't found a solution. When the error occurs, three dialog boxes appear, in this order (P.S.: sorry that they are in French; this thread shows a screenshot of the exact same error in English: https://forums.ni.com/t5/LabVIEW/The-top-level-VI-was-stopped-at-SelfRefNode-on-the-block-diagram/m-...)

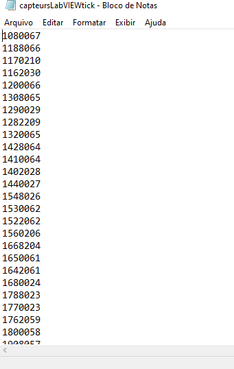

This problem (the SelfRefNode "no memory" issue) is probably caused by the size of the array being built by concatenation in the consumer loop (see the block diagram screenshot attached below). For debugging, I am reading a large .txt file (~80 million lines that are converted to U32 elements; the file is about 800 MB), and under the VI's normal operating conditions I would like to read even larger files. The .txt is just one big column of integers (screenshot of its format below).
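A rough back-of-the-envelope check with the figures from this post (800 MB of text, ~80 million values) shows what one contiguous U32 array costs. The "old plus new buffer" peak assumes the array is grown by reallocation; that is a simplifying assumption about how a concatenating tunnel manages memory, not documented LabVIEW behavior.

```python
import struct

FILE_BYTES = 800_000_000           # ~800 MB .txt file (figure from the post)
N_VALUES = 80_000_000              # ~80 million lines, one integer per line
U32_BYTES = struct.calcsize("<I")  # 4 bytes: standard-size unsigned 32-bit integer

bytes_per_line = FILE_BYTES / N_VALUES  # average, including the line terminator
u32_array_bytes = N_VALUES * U32_BYTES  # one contiguous U32 array

# Assumption: growing the array by reallocation briefly keeps the old buffer alive
# next to the new one, so near the end roughly two array-sized blocks coexist.
realloc_peak_bytes = 2 * u32_array_bytes

print(f"{bytes_per_line:.0f} B/line, U32 array: {u32_array_bytes / 1e6:.0f} MB, "
      f"peak while growing: ~{realloc_peak_bytes / 1e6:.0f} MB")
# prints "10 B/line, U32 array: 320 MB, peak while growing: ~640 MB"
```

Each of those buffers must be a single contiguous block, so an allocation can fail even when the total free memory looks sufficient.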

It seems that I get those error messages right when the While Loop has read and converted all the data and is about to exit.

Some information that might be important:

- When the VI starts, the exact final array size is not known, so I cannot wire a fixed number of iterations to preallocate the needed memory.

- As shown in the attached block diagram, I read the file as string lines (since it is a .txt file) and convert them to U32 (the required representation).

- Each line has at most 10 characters.

- All this data only needs to live in wires (I don't need to display it in indicators at all), since it will be sent to the FPGA through FIFOs.

- I read the data in a subVI (I already tried reading it in the top-level VI, but the error persists).

- With .txt files of ~250-300 MB or less, my VI runs smoothly.
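Even when the final size is unknown at startup, it can be measured cheaply before parsing: one pass counts the line terminators, then the array is allocated once and filled in a second pass. The Python sketch below shows that two-pass idea; the function names are hypothetical and it assumes one unsigned integer per line. Because a 10-character line can hold values up to 9999999999, which exceeds the U32 maximum of 4294967295, the sketch also range-checks each value.

```python
import io
from array import array

U32 = "I"            # 4-byte unsigned int on mainstream platforms (assumption)
U32_MAX = 0xFFFFFFFF

def count_lines(f, block_size=1 << 24):
    """Pass 1: count lines by scanning large binary blocks."""
    n, last = 0, b""
    while block := f.read(block_size):
        n += block.count(b"\n")
        last = block[-1:]
    if last and last != b"\n":
        n += 1  # the final line has no trailing newline
    return n

def load_preallocated(f, n):
    """Pass 2: fill a U32 array that was allocated once with n slots."""
    data = array(U32, [0]) * n
    i = 0
    for line in f:
        line = line.strip()
        if not line:
            continue  # skip blank lines
        value = int(line)
        if not 0 <= value <= U32_MAX:
            raise OverflowError(f"value #{i + 1} ({value}) does not fit in U32")
        data[i] = value
        i += 1
    del data[i:]  # drop unused slots if blank lines were counted
    return data

# Demo on an in-memory "file"; for a real file use open(path, "rb").
sample = io.BytesIO(b"12\n4294967295\r\n0\n7")
n = count_lines(sample)
sample.seek(0)
values = load_preallocated(sample, n)
print(n, values.tolist())  # 4 [12, 4294967295, 0, 7]
```

The extra pass costs one more read of the file, but the array never has to be grown or copied.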

Any ideas or questions are more than welcome 😄

Best regards,

Marra de Lima, Lucas

06-02-2020 03:35 PM

Please do not attach pictures of LabVIEW code (would you like to see a "picture" of a C++ or MATLAB program to help debug it?). Please attach the VI itself so we can examine it, see all the cases of the Case structures, and even try to run it.

Bob Schor

06-02-2020 03:55 PM

Thanks for the advice, Bob Schor! I am attaching the .vi here...

06-02-2020 04:03 PM - edited 06-02-2020 04:10 PM

I already offered some suggestions in my previous post and you partially answered.

Yes, we actually need to see your VI, not just a picture.

Your diagram is overly complicated, bouncing data between parallel loops for no reason. What were you thinking? It is very likely that your enqueue loop runs significantly faster than the dequeue loop, making the queue grow without bounds. Why can't you parse the lines in one loop? No queue needed. I am also not sure why you can't read significantly larger sections of the file at once. (Currently you are trying to fill a swimming pool using an eye dropper. :D)
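If the two-loop design is kept anyway, the unbounded growth can be capped by giving the queue a maximum size, so the producer blocks until the consumer catches up (LabVIEW's Obtain Queue function has a max queue size input for this purpose). The sketch below shows the same back-pressure idea with Python's standard `queue.Queue(maxsize=...)`; the chunking scheme and function names are illustrative assumptions, and it does not replace the simpler single-loop approach suggested above.

```python
import queue
import threading
from array import array

DONE = object()  # sentinel telling the consumer there is no more data

def producer(lines, q, chunk_lines):
    """Send lines in chunks; q.put() blocks whenever the queue is full."""
    chunk = []
    for line in lines:
        chunk.append(line)
        if len(chunk) == chunk_lines:
            q.put(chunk)
            chunk = []
    if chunk:
        q.put(chunk)
    q.put(DONE)

def consumer(q):
    """Parse each chunk into a U32 array ('I' is 4 bytes on common platforms)."""
    out = array("I")
    while (chunk := q.get()) is not DONE:
        out.extend(int(s) for s in chunk)
    return out

def run(lines, max_chunks=4, chunk_lines=10_000):
    q = queue.Queue(maxsize=max_chunks)  # at most max_chunks chunks wait in memory
    t = threading.Thread(target=producer, args=(lines, q, chunk_lines))
    t.start()
    result = consumer(q)
    t.join()
    return result

values = run((str(i) for i in range(100_000)), max_chunks=2, chunk_lines=1_000)
print(len(values), values[-1])  # 100000 99999
```

The bound only limits what waits in the queue; the output array still grows, so this addresses the first item in the list below, not the others.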

There are probably much better ways to read a formatted file with a single integer column.
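One alternative, sketched here in Python under the assumption of one unsigned integer per line, is to read the file in large binary blocks, carry the incomplete last line over to the next block, and parse each block in one go instead of line by line. In LabVIEW the analogous step might be reading many lines per Read from Text File call and converting each block with Spreadsheet String To Array; that mapping is an assumption, not a tested recipe.

```python
import io
from array import array

def read_u32_column(f, block_size=1 << 24):
    """Parse a one-integer-per-line text file from large binary blocks."""
    out = array("I")  # 'I' is a 4-byte unsigned int on common platforms
    tail = b""
    while block := f.read(block_size):
        block = tail + block
        cut = block.rfind(b"\n") + 1  # 0 if the block holds no complete line yet
        tail = block[cut:]            # incomplete last line, finished by the next block
        # bytes.split() splits on any whitespace, so "\r\n" endings are handled too.
        out.extend(map(int, block[:cut].split()))
    if tail.strip():
        out.append(int(tail))         # last line without a trailing newline
    return out

# A tiny block_size forces lines to straddle block boundaries in this demo.
demo = read_u32_column(io.BytesIO(b"10\n20\r\n300\n4000"), block_size=3)
print(demo.tolist())  # [10, 20, 300, 4000]
```

In practice the block size would be megabytes, not bytes; the output array still grows as blocks are appended.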

So you have:

- a potentially gigantic queue

- an ever-growing data structure in the concatenating tunnel

- copying a huge U32 array into the cluster after coercion to DBL (2x the size!)

- etc.

Not good!
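The cost of that coercion can be illustrated in Python: converting a U32 array to double precision allocates a second, separate buffer with 8-byte elements, so while both exist the memory used for the data roughly triples. This models a coercion copy in general terms; it does not show how LabVIEW itself schedules that copy.

```python
from array import array

values = array("I", range(1_000_000))  # U32 data ('I' is 4 bytes on common platforms)
as_dbl = array("d", values)            # coercion to DBL builds a new 8-byte-per-element buffer

u32_bytes = len(values) * values.itemsize
dbl_bytes = len(as_dbl) * as_dbl.itemsize
print(u32_bytes, dbl_bytes, u32_bytes + dbl_bytes)  # 4000000 8000000 12000000
```

Scaled to the ~80 million values in this thread, that is about 320 MB for the U32 array plus 640 MB for the DBL copy.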

06-02-2020 04:05 PM - edited 06-02-2020 04:07 PM

@lucaseduardoml wrote:

Thanks for the advice, Bob Schor! I am attaching the .vi here...

Thanks for the code. There is a missing type definition and a missing subVI. We probably also want a short file with typical data.

What is the purpose of the data once you have it? What other processing needs to be done on it?

Apparently, this is a subVI. Make sure to disable debugging and keep its front panel closed when calling it. There is no need to update indicators containing tons of data.

06-03-2020 03:52 AM

Hey altenbach, first of all, thank you for the support. I will try to answer your points separately.

"I already offered some suggestions in my previous post and you partially answered."

Could you point out exactly which suggestions I didn't answer in my post?

"Your diagram is overly complicated, bouncing data between parallel loops for no reason. What were you thinking? It is very likely that your enqueue loop runs significantly faster than the dequeue loop, making the queue grow without bounds. Why can't you parse the lines in one loop? No queue needed."

As I said before, I made a lot of modifications, and one of them was exactly this. In fact, my first attempt was quite simple and exactly what you describe: just one loop (no producer/consumer design). The latest version of the code, which I attached to the post, was just me trying to follow this NI article: https://knowledge.ni.com/KnowledgeArticleDetails?id=kA00Z0000015CQ0SAM&l=fr-FR. Like you, I also thought this approach would make things worse, since the producer/consumer design adds a buffer (the queue) and so on, but I still tried it to see and confirm the result.

"I am also not sure why you can't read significantly larger sections of the file at once. (Currently you are trying to fill a swimming pool using an eye dropper. :D)"

The constant 4 was just the last thing I tried xD. I actually tested reading sections of different sizes (with both designs I tried, the producer/consumer one and the simple loop) to see whether that could somehow get around a possible memory fragmentation problem. All in all, I already tried to fill the swimming pool with an eye dropper, a glass, a bucket, and another, smaller swimming pool XD, and I still got the problem.

"There are probably much better ways to read a formatted file with a single integer column."

Do you have any in mind?

"copying a huge U32 array into the cluster after coercion to DBL (2x the size!)"

I already tried using the "Decimal String To Number" function, which converts the string directly to U32, and also wiring a U32 default value to "Scan From String" to get a U32 output directly. The problem was still there...

06-03-2020 04:03 AM

Sorry about the missing files; I will upload them here together with a small file of typical data.

"What is the purpose of the data once you have it? What other processing needs to be done on it?"

It will then be sent to the FPGA through a FIFO (I am using a cRIO-9024 with a 9111 chassis for this project). Since the rate at which the FPGA reads the data will vary (it depends on the values in the .txt), I need all this data available on the host so I can pass it to the FPGA according to its demand. In the end, I won't do any further processing on this big array; I will just send it to the FPGA.
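Since the whole array stays on the host and is only handed to the FPGA on demand, successive slices of it can be passed without copying the data. The Python sketch below uses `memoryview` slices for that; `write_fifo` is a hypothetical stand-in for whatever host-side FIFO write call the application actually uses, and the default block size is an arbitrary assumption.

```python
from array import array

def send_in_blocks(data, write_fifo, block_elems=65_536):
    """Hand successive blocks of a U32 array to write_fifo without copying them.

    write_fifo is a hypothetical callback standing in for the real FIFO write;
    each call receives a memoryview that shares memory with `data`.
    """
    view = memoryview(data)
    for start in range(0, len(view), block_elems):
        write_fifo(view[start:start + block_elems])

# Demo with a fake FIFO that just records the length of each block.
sent = []
send_in_blocks(array("I", range(10)), lambda block: sent.append(len(block)), block_elems=4)
print(sent)  # [4, 4, 2]
```

A real FIFO write would typically block or time out when the FIFO is full, which is what paces the host to the FPGA's consumption rate.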