How to correctly set relative Waveform Chart/Graph time to show TRUE seconds?

03-02-2021 11:23 AM

Hello everyone,

I'm quite new to LabVIEW. I have read a lot of topics, watched YouTube videos, and searched examples, but I still can't find a solution, so I need your help. I am trying to plot simple numeric floating-point data in a Waveform Chart. I set the time on the X-axis to relative, but the seconds on this axis pass incorrectly: too slow or too fast, depending on my loop iteration time. I tried manually adjusting the X-axis scale multiplier to match real seconds, but I know this won't fix the problem. How can I make the time on the Waveform Chart X-axis advance correctly, independent of my code execution time, sampling rate, etc.? Sorry, I can't upload the .vi because it's one big mess. If my problem isn't clear, I could make a simple simulation .vi.

Thank you

03-02-2021 11:35 AM

Build your "simple numeric floating point" into a waveform where you have a T0 value of the current time.

Is your data regularly sampled?

You can set the T0 and dT for a particular plot of a chart through a property node.
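To make the role of T0 and dT concrete, here is a minimal sketch in Python (not LabVIEW code) of how an evenly spaced X-axis is derived from them. The function name `chart_x_values` and the 0.25 s loop time are made up for illustration; the point is only that the displayed time is `T0 + i * dT`, so it matches real seconds only when dT equals the true loop period.

```python
def chart_x_values(t0, dt, n):
    """Return the X positions assigned to n evenly spaced samples."""
    return [t0 + i * dt for i in range(n)]

# Hypothetical case: the chart assumes dT = 1 s per point,
# but the loop really produces one point every 0.25 s.
assumed = chart_x_values(0.0, 1.0, 5)   # what the axis shows
actual = chart_x_values(0.0, 0.25, 5)   # when the samples were really taken

for shown, real in zip(assumed, actual):
    print(f"axis shows {shown:.2f} s, real elapsed time {real:.2f} s")
```

With the wrong dT the axis runs four times too fast in this example; setting dT to the real loop period makes the two columns agree.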

03-02-2021 11:55 AM

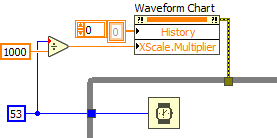

Sorry if "simple numeric floating-point data" looked funny to you. I attached an example. If you run the program, you will see that the seconds pass more slowly than real time. I don't know how to build a waveform, since its Y input is an array and my data is a single floating-point number. I also don't know the sample rate of my sensors, and I can't predict my code execution time, as it depends on the computer's CPU, memory, and other factors.

03-02-2021 01:14 PM - edited 03-02-2021 01:15 PM

If the loop rate is constant, you can do the following.

If the loop rate is variable during the run, more code is needed because charts and graphs assume constant spacing.
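One way to picture the "more code" for a variable loop rate: record the elapsed time together with every sample, and plot value against that measured time instead of relying on constant spacing. The sketch below is Python rather than LabVIEW, and `acquire_with_timestamps` and `read_sample` are hypothetical names; in LabVIEW the equivalent would probably be feeding time/value pairs to a plot that accepts explicit X values, such as an XY Graph.

```python
import time

def acquire_with_timestamps(read_sample, n, clock=time.monotonic):
    """Read n samples and pair each one with the seconds elapsed since start."""
    start = clock()
    points = []
    for _ in range(n):
        y = read_sample()
        # Measure the time when the sample arrived; no dT is assumed.
        points.append((clock() - start, y))
    return points

if __name__ == "__main__":
    import random
    # Hypothetical sensor read with an unpredictable duration.
    def read_sample():
        time.sleep(random.uniform(0.01, 0.05))
        return random.random()

    for t, y in acquire_with_timestamps(read_sample, 5):
        print(f"t = {t:.3f} s, y = {y:.3f}")
```

Because each X value is measured, the axis shows true seconds even when iterations take different amounts of time.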

If you read your sensor one point at a time (software timed), just define a sufficiently slow constant loop rate and the spacing will be constant.
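A rough sketch of such a software-timed loop, again in Python rather than LabVIEW: each iteration reads one point and then waits until the next multiple of a fixed period, so the spacing stays constant as long as the read itself finishes within the period. The names `run_fixed_rate` and `read_sample` and the 0.1 s period are assumptions for illustration only.

```python
import time

def run_fixed_rate(read_sample, n, period, clock=time.monotonic, sleep=time.sleep):
    """Read n samples, starting one read every `period` seconds."""
    start = clock()
    samples = []
    for i in range(n):
        samples.append(read_sample())
        # Wait until the next deadline measured from the start, so small
        # variations in read time do not accumulate into drift.
        deadline = start + (i + 1) * period
        remaining = deadline - clock()
        if remaining > 0:
            sleep(remaining)
    return samples

if __name__ == "__main__":
    t_begin = time.monotonic()
    data = run_fixed_rate(lambda: 42.0, n=5, period=0.1)
    print(len(data), "samples in", round(time.monotonic() - t_begin, 2), "s")
```

With a loop like this, the chart's dT can simply be set to `period`. If a read takes longer than `period`, the spacing is no longer constant, which is why the period has to be "sufficiently slow".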