I dabbled with this problem a bit, and your target rate (1080-byte inputs at 1000 packets/sec, i.e. about 1.08 million bytes/sec) should be achievable.

I first tried a brute-force method based on rotate-with-carry that processed the original data and built the 18-bit samples literally one bit at a time. It handled approximately 2.5 million input bytes/sec, more than 2x your target rate.
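For anyone not reading LabVIEW diagrams, here is a rough text-language sketch of the bit-at-a-time idea in Python. It is not a translation of the original diagram. It assumes the 18-bit samples are packed MSB-first with no padding between them and are treated as unsigned; the function name `unpack_bitwise` is hypothetical.

```python
def unpack_bitwise(data: bytes, width: int = 18) -> list[int]:
    """Unpack a packed bitstream into unsigned `width`-bit samples, one bit at a time.

    Assumption: MSB-first bit order, i.e. the first bit of each sample is the
    most significant bit of the earliest byte. Trailing bits that do not fill
    a whole sample are discarded.
    """
    samples = []
    acc = 0    # bits collected so far for the current sample
    nbits = 0  # number of bits currently held in acc
    for byte in data:
        for shift in range(7, -1, -1):  # walk this byte's bits from MSB to LSB
            acc = (acc << 1) | ((byte >> shift) & 1)
            nbits += 1
            if nbits == width:  # a full sample has been assembled
                samples.append(acc)
                acc = 0
                nbits = 0
    return samples
```

This mirrors the "rotate one bit in, count to 18" structure, which is simple but does per-bit work for every input byte.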

I then tried another approach designed to take advantage of For Loop auto-indexing. It was still pretty crude and brute-force otherwise, but it sped things up to over 20 million input bytes/sec.

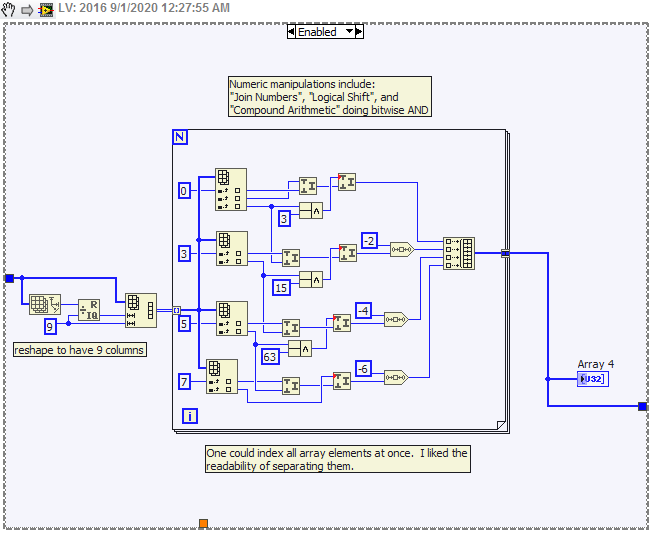

The main idea is to recognize that every set of 9 input bytes (72 bits) yields exactly 4 output samples (4 x 18 = 72 bits). So you can reshape your 1D array of 1080 bytes into a 2D array with dimensions 120 x 9, then auto-index on the rows in a For Loop. Each loop iteration operates on 9 input bytes and processes them to yield four 18-bit samples.
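A minimal Python sketch of the same row-at-a-time idea, again assuming MSB-first packing and unsigned samples (the function name `unpack_rows` is hypothetical). Because the four samples always straddle byte boundaries at the same positions within a 9-byte row, each one can be built from shifts and masks of at most three bytes, with no per-bit looping.

```python
def unpack_rows(data: bytes) -> list[int]:
    """Unpack unsigned 18-bit samples by processing one 9-byte row at a time.

    Assumptions: MSB-first packing, and len(data) is a multiple of 9
    (e.g. 1080 bytes -> 120 rows -> 480 samples).
    """
    if len(data) % 9 != 0:
        raise ValueError("input length must be a multiple of 9 bytes")
    samples = []
    # Step through the conceptual (len/9) x 9 array row by row,
    # like an auto-indexed For Loop over the reshaped 2D array.
    for r in range(0, len(data), 9):
        b0, b1, b2, b3, b4, b5, b6, b7, b8 = data[r:r + 9]
        # Sample 0: all of b0, all of b1, top 2 bits of b2
        samples.append((b0 << 10) | (b1 << 2) | (b2 >> 6))
        # Sample 1: low 6 bits of b2, all of b3, top 4 bits of b4
        samples.append(((b2 & 0x3F) << 12) | (b3 << 4) | (b4 >> 4))
        # Sample 2: low 4 bits of b4, all of b5, top 6 bits of b6
        samples.append(((b4 & 0x0F) << 14) | (b5 << 6) | (b6 >> 2))
        # Sample 3: low 2 bits of b6, all of b7, all of b8
        samples.append(((b6 & 0x03) << 16) | (b7 << 8) | b8)
    return samples
```

With a 1080-byte packet this runs 120 iterations and returns 480 samples. If the device actually sends signed samples or a different bit order, the shift/mask constants or an extra sign-extension step would need adjusting.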

Finally, I tried enabling parallelism on the For Loop, hoping for another speed boost, but it actually hurt performance. It's probably something about the way I accumulate the array of 18-bit samples; I didn't investigate any further.

There's a snippet below to illustrate. Note: I threw it together quick and crude and only briefly checked it for accuracy. I expect it can be sped up further, but I figured 20 million bytes/sec on a nearly 10-year-old desktop would serve well enough as a proof of concept, since you'll only need to handle ~1 million bytes/sec.

-Kevin P
