[FPGA] Tick Wait vs Discrete Delay not adding up

05-28-2015 11:35 AM

Foreshadow20 wrote:

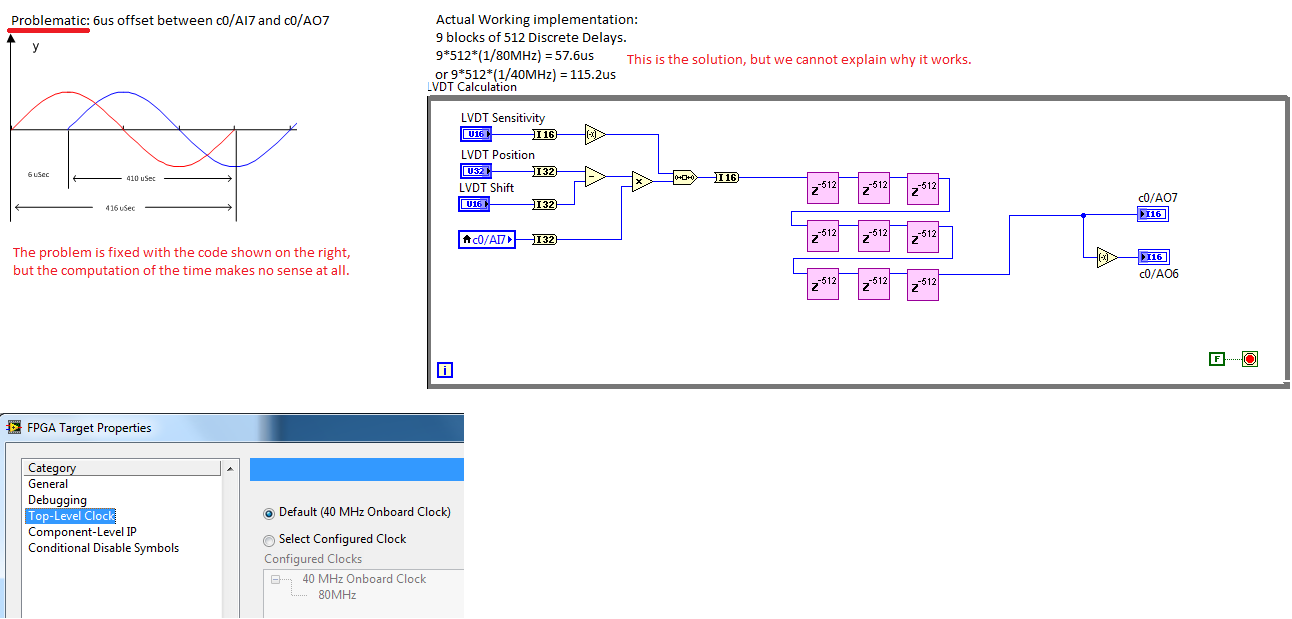

How can I know what clock rate the code is running at? I assume that it is based on the derived 80MHz clock that is shown in the project. How does 9 x 512 give approximately 410us?

The clock period is not the same as the while loop period, unless you use a single-cycle timed loop as others have suggested. When you use a normal while loop, you're allowing the compiler to determine how many clock cycles each loop cycle requires.

ToeCutter and crossrulz already explained that your while loop takes 7 cycles (of the 80 MHz clock) per iteration, which is what makes the math work out.

You are also potentially making your output signal less smooth than the input. I don't know how many clock cycles are required on your hardware to sample an analog input and to write an analog output, but if your loop runs slower than that, then you are introducing stair-steps into your signal where it holds the same output for longer than necessary. This is the problem with your "intended implementation" - it reads one sample, immediately writes that same value out, then waits 404 ticks before repeating. During that wait, no new data is read and the output stays constant.
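
To illustrate the stair-step effect described above, here is a minimal sketch in Python (not LabVIEW). It assumes an idealized loop that samples the input once every `loop_ticks` clock ticks and holds the output in between; the function name and the ramp input are made up for illustration and do not model any specific hardware.

```python
def zero_order_hold(signal, loop_ticks):
    """Return the output seen on every tick when the input is only
    re-sampled once per loop iteration (every `loop_ticks` ticks)."""
    if loop_ticks < 1:
        raise ValueError("loop_ticks must be at least 1")
    held = None
    output = []
    for tick, value in enumerate(signal):
        if tick % loop_ticks == 0:
            held = value          # loop iteration starts: read and write a new sample
        output.append(held)       # otherwise the output stays at the last value
    return output


ramp = list(range(8))             # hypothetical input that changes every tick
fast = zero_order_hold(ramp, 1)   # loop keeps up with the input
slow = zero_order_hold(ramp, 4)   # loop is 4x slower: output holds for 4 ticks
print(fast)
print(slow)
```

The slower the loop relative to the signal, the longer each output value is held, which is where the visible steps come from.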

05-28-2015 01:37 PM

You are reading in a signal from a device via ADC and then want to output a time-delayed signal where the overall delay is 6 us, right? So your FPGA is simply a programmable delay circuit in this case? Have I understood this correctly?

We want both signals to be synchronized without any offset. So the total offset of the generated sine should be 410us or so in order to be synchronized. But how is 9*512 giving us that synchronization? There's something missing in the calculations.

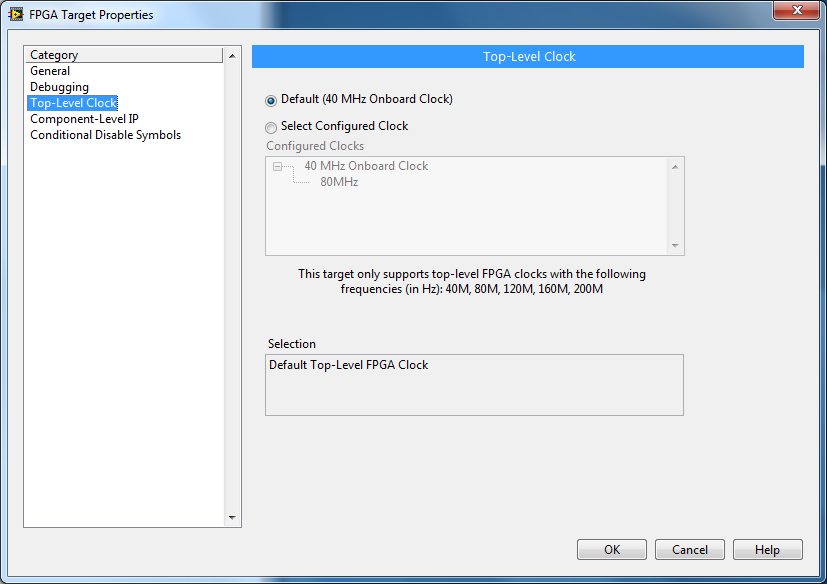

This is what I get when I check the Top-Level Clock. So everything runs off the 40MHz clock?

If this is right, then 9*512*(1/40MHz) is 115.2us, still ~300us away from the synchronized signal.

Try outputting your AI [...] Looking at the result on an oscilloscope will give you the rate.

We cannot modify the code. We want to know why it's working with the 9*512 discrete delays.

05-28-2015 01:42 PM

(9*512+7) * (1/40MHz) = 115.375us, still far from the ~410us that would be needed. Where is the 300us coming from? Did LabVIEW FPGA decide to run the loop at another clock rate?

Forget the 404 ticks, I can't modify the original post to remove it from my picture. The 404 ticks was the implementation before it got fixed with the 9*512 discrete delays.

But why is it working with only 9*512???

Thanks

05-28-2015 01:57 PM

05-28-2015 03:07 PM

@Foreshadow20 wrote:

(9*512+7) * (1/40MHz) = 115.375us, still far from the ~410us that would be needed. Where is the 300us coming from? Did LabVIEW FPGA decide to run the loop at another clock rate?

Again, as ToeCutter wrote, the correct math is 9*512 delays * 7 clock cycles/delay (not +7) = 32256 clock cycles. Each clock cycle is 1/80 MHz, so 32256/80000000 = 0.0004032 seconds, or 403.2us, which is very close to your target of 410us. The important thing to note here is that every iteration of the while loop takes 7 cycles of the 80 MHz clock. A while loop does NOT execute in a single clock cycle; that's what the single-cycle timed loop is for.
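
The arithmetic can be checked with a short script. This is a minimal sketch in plain Python (not LabVIEW code); the function and variable names are made up for illustration, and the 7 ticks per iteration and the 80 MHz clock are the figures quoted in this thread, not values read from the hardware.

```python
def delay_seconds(delay_elements, ticks_per_iteration, clock_hz):
    """Total delay when each delay element advances once per loop
    iteration and each iteration costs `ticks_per_iteration` clock ticks."""
    total_ticks = delay_elements * ticks_per_iteration
    return total_ticks / clock_hz


elements = 9 * 512                       # 4608 discrete delay elements

# While loop: 7 ticks of the 80 MHz clock per iteration (figure from the thread).
while_loop_delay = delay_seconds(elements, 7, 80e6)

# Hypothetical single-cycle timed loop: one tick per iteration.
sctl_delay = delay_seconds(elements, 1, 80e6)

# The mistaken calculation: adding 7 instead of multiplying, at 40 MHz.
mistaken_delay = (elements + 7) / 40e6

print(f"while loop:  {while_loop_delay * 1e6:.3f} us")
print(f"SCTL:        {sctl_delay * 1e6:.3f} us")
print(f"(N+7)/40MHz: {mistaken_delay * 1e6:.3f} us")
```

Multiplying by the per-iteration tick count is what matters: the delay elements only shift once per loop iteration, so the loop period, not the clock period, sets the time per element.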

05-28-2015 04:44 PM

05-28-2015 05:19 PM

Two questions. Did we ever resolve the 40 vs 80 top level clock? Can you show me how you got 7 ticks per while loop? It's clearly correct but I'd love to see which functions you're counting.

05-28-2015 05:43 PM

I'm still not sure, but even if it is counted as 40MHz, the phase still works out, since it becomes synchronised after 2 sine cycles instead of 1.

What still boggles my mind is that the loop would be counted for each discrete delay... Why are LabVIEW FPGA discrete delays so badly programmed? It must be a nightmare to delay logic gates in LabVIEW.

05-28-2015 06:06 PM

Foreshadow20 wrote:

What still boggles my mind is that the loop would be counted for each discrete delay... Why are LabVIEW FPGA discrete delays so badly programmed? It must be a nightmare to delay logic gates in LabVIEW.

Sorry, I don't understand this comment at all. The delay is simply a shift register (or a series of them) - basically a queue where each loop cycle you remove the oldest element from the front and add a new one at the end. What did you expect this to do? Even though LabVIEW makes it easy, you're still programming an FPGA (direct to hardware) with limited resources.
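
As a rough illustration of the queue behaviour described above, here is a minimal software sketch in Python. It is only a model: the class name `DiscreteDelay` and its `step` method are hypothetical and are not LabVIEW or NI API names. Each call to `step` stands for one loop iteration.

```python
from collections import deque


class DiscreteDelay:
    """Software model of a delay line: a fixed-length queue that
    advances by exactly one element per loop iteration."""

    def __init__(self, length, initial=0):
        if length < 1:
            raise ValueError("length must be at least 1")
        # maxlen makes append() drop the oldest element automatically.
        self._line = deque([initial] * length, maxlen=length)

    def step(self, sample):
        """One loop iteration: take the oldest value out, push the new one in."""
        oldest = self._line[0]
        self._line.append(sample)
        return oldest


delay = DiscreteDelay(length=3)
outputs = [delay.step(x) for x in [1, 2, 3, 4, 5, 6]]
print(outputs)  # the input reappears 3 iterations later
```

Because the queue only moves when the loop iterates, the delay in time is the queue length multiplied by the loop period, whatever that period turns out to be.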

05-28-2015 06:33 PM - edited 05-28-2015 06:34 PM

@Oligarlicky wrote:

Two questions. Did we ever resolve the 40 vs 80 top level clock? Can you show me how you got 7 ticks per while loop? It's clearly correct but I'd love to see which functions you're counting.
