FPGA LVOOP Tidbit: Wait, what?

12-01-2016 08:28 AM

I'm writing this tidbit under the assumption that others, like my past self, have trouble visualising how LVOOP could work on an FPGA target. In fact, it's a really nice partnership, and it gracefully side-steps some of the misgivings I have about the current LVOOP implementation on other targets.

I'm also going to assume the readers have some experience in LVOOP, what it is and how it works including Inheritance, public and private methods and so on. If not, the rest of the post here won't make much sense I think.

Encapsulation (private / public):

Behaves exactly as it would on desktop targets.

Dynamic loading of plug-in classes at run-time:

No problem. Nah, of course I'm kidding. This is impossible on FPGA. This, together with the full hierarchy compile, makes LVOOP on FPGA a significantly different beast than on the desktop (superior in many ways IMHO). For years I've longed for a similar compile method on the desktop.

Dynamic Dispatch:

For quite some time after I realised LVOOP worked on FPGA targets, I was pretty sure Dynamic Dispatch wouldn't work. Well it does, but a bit differently than on other targets. While FPGA code will happily execute Dynamic Dispatch code, the concrete implementation MUST be known at compile time. It takes a while to get your head around this. Every input to a node on the BD can accept only one concrete class type. If you try to wire up a Dynamic Dispatch function to the output of a "Select" node which chooses between two different related objects, the compilation will fail because the node "receiving" is being presented with two options where there can be only one.

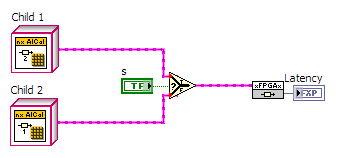

This code will not compile. The xFPGAx node can be presented with two different objects, so LV throws an error: The LabVIEW class could not be statically resolved.

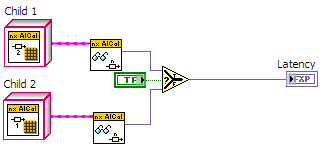

This code, however, will compile just fine. Each object has its own method and choosing between scalars is allowed.

In a similar manner, arrays with mixed object types are not a good idea on FPGA. Any array of objects will be cast to a single type and problems will ensue. If you need to group objects of different types, packing them in clusters is required. Arrays of a single object type are OK, just don't mix and match. This applies to "Index Array" as well as to autoindexing on structures, output tunnels on Case Structures and so on.
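The "one concrete class per input" rule can be modelled in a few lines of Python. This is only a toy model of the rule as described here, not how the LabVIEW FPGA compiler actually works: each wire is represented as the set of concrete classes it might carry, and the names `constant`, `select`, `dynamic_dispatch`, `Parent` and `Child` are all made up for illustration.

```python
class StaticResolutionError(Exception):
    """Raised when a wire could carry more than one concrete class."""


def constant(class_name):
    # An object constant on the diagram: exactly one concrete class.
    return frozenset({class_name})


def select(wire_a, wire_b):
    # A Select node fed with objects: the output may be either input's class.
    return wire_a | wire_b


def dynamic_dispatch(wire):
    # A dynamic dispatch node must see exactly one concrete class.
    if len(wire) != 1:
        raise StaticResolutionError(
            "class could not be statically resolved: " + ", ".join(sorted(wire))
        )
    (resolved,) = wire
    return resolved


parent = constant("Parent")
child = constant("Child")

# Dispatching on each object separately resolves fine.
resolved_separately = [dynamic_dispatch(parent), dynamic_dispatch(child)]

# Selecting between the objects first leaves two candidates on the wire.
try:
    dynamic_dispatch(select(parent, child))
    mixed_wire_ok = True
except StaticResolutionError:
    mixed_wire_ok = False
```

In this model a mixed array behaves like the `select` case: as soon as a wire can hold more than one class, any dynamic dispatch call downstream of it can no longer be resolved.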

So what benefit does this give us? Well, for me personally, I use objects to transfer data around my FPGA code. If I need a set of 50 FXP +-24,1 parameters, I create an abstract class with read and write functions and any other required methods. I can then implement concrete classes utilising FIFOs, Block RAM or Registers as required. By passing the specific version I need to my code via wire or connector pane, I can modify how my code works without changing anything in the existing code. This allows me to switch clock rates of my code without having to implement a sub-VI for each and every case. I simply wire up the "speed grade" of objects required for the pre-determined functionality and away we go.
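For readers who think more easily in text than in block diagrams, the pattern looks roughly like this in Python. It is a sketch of the structure only: the class names `ParameterStore`, `RegisterStore` and `BlockRamStore` and the helper `scale_parameter` are invented for this example, the storage is plain Python data rather than FPGA resources, and Python picks the implementation at run time whereas the FPGA compiler fixes it at compile time.

```python
from abc import ABC, abstractmethod


class ParameterStore(ABC):
    """Abstract interface: the calling code only sees read and write."""

    @abstractmethod
    def write(self, index, value):
        ...

    @abstractmethod
    def read(self, index):
        ...


class RegisterStore(ParameterStore):
    """Stand-in for a register-based implementation: one slot per parameter."""

    def __init__(self, size):
        self._slots = [0.0] * size

    def write(self, index, value):
        self._slots[index] = value

    def read(self, index):
        return self._slots[index]


class BlockRamStore(ParameterStore):
    """Stand-in for a block RAM implementation: a single addressed memory."""

    def __init__(self, size):
        self._memory = {address: 0.0 for address in range(size)}

    def write(self, index, value):
        self._memory[index] = value

    def read(self, index):
        return self._memory[index]


def scale_parameter(store, index, gain):
    # Calling code is written once against the abstract interface.
    store.write(index, store.read(index) * gain)
    return store.read(index)


# The same calling code runs unchanged with either concrete store.
results = []
for store in (RegisterStore(50), BlockRamStore(50)):
    store.write(3, 1.5)
    results.append(scale_parameter(store, 3, 2.0))
```

The point of the sketch is that `scale_parameter` never changes; only the object handed to it does.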

Tip: I define a base class for ALL my FPGA objects with a method "Latency". In this VI I wire up a constant to an indicator (Abstract = 0 latency). As I implement different specific methods, I can then override this VI and the value for latency will be constant-folded into my FPGA code. Using this method, we can perform auto-balancing of critical code path latencies without any fabric overhead (see the latency macro contained in my previous post).
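The arithmetic behind the balancing is simple enough to sketch in Python. This is not the latency macro mentioned above, just an illustration of the idea: every class reports a constant latency, each path sums the latencies of its objects, and the shorter paths are padded up to the longest one. The class names, path names and latency values are all assumptions made up for the example.

```python
class FpgaObject:
    """Base class: the abstract implementation adds no latency."""

    def latency(self):
        return 0


class RegisterParameters(FpgaObject):
    def latency(self):
        return 1  # assumed value, for illustration only


class BlockRamParameters(FpgaObject):
    def latency(self):
        return 3  # assumed value, for illustration only


def path_latency(objects):
    # Total latency of one code path is the sum over its objects.
    return sum(obj.latency() for obj in objects)


def balancing_delays(paths):
    # Extra cycles each path needs so that all paths match the longest one.
    latencies = {name: path_latency(objs) for name, objs in paths.items()}
    longest = max(latencies.values())
    return {name: longest - value for name, value in latencies.items()}


paths = {
    "setpoint": [RegisterParameters()],
    "feedback": [BlockRamParameters(), RegisterParameters()],
}
delays = balancing_delays(paths)
```

With these assumed values the "setpoint" path would need three extra cycles of delay and the "feedback" path none. Python does this sum at run time; per the tip above, on the FPGA the values are constants, so the result is folded in at compile time.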

Inheritance:

As shown above, creating child classes is not a problem, and switching out one class type for another in code is quite straightforward as long as the guidelines outlined above are obeyed.

Caveats:

1) For reasons mostly unknown to me, some objects cause the initial resource estimate to go completely mad. We regularly have code where the estimate reckons we're using 160% of the registers, only for it to be pared down to 55% during compilation. I know it has to do with some of our objects, but I've yet to narrow it down enough to make a definite statement on the precise effect.

2) You can't have an object on the FP of a top-level VI. I don't know why I'd want this particularly, but there you go.

3) Not FPGA specific, but working with lots of classes in LabVIEW can be tedious due to IDE slowdown.

4) A biggie: Dynamic Dispatch methods must be "Shared Clone", which for some reason doesn't work well with debugging in simulation mode. Whenever I try to click my way down the VI hierarchy from my top-level FPGA VI into some function in simulation mode, the Dynamic Dispatch VIs cannot be debugged, as if they are not running. Bummer.

5) Latency-balancing. It takes some brain-power to work out the relationships between the individual paths in the beginning, but the reward is code whose inner workings can be modified as required without having to repeatedly do the same mental gymnastics re-balancing the pathways. In my opinion, this saves a huge amount of time when used in the investigative stages of development.

So that's it. I've probably missed a huge amount of information on the matter. Maybe someone somewhere in a fit of desperation might find the information useful.

01-12-2017 11:10 AM

First of all, congratulations on writing this post; you have put serious thought into this subject.

Just a few comments about the caveats:

1) The way LabVIEW computes the estimated resources is very strange. So far I have not seen an estimate that says a compilation would fit in the FPGA. It is always overestimated.

2) This is odd but true.

3) The relationship between LabVIEW and OOP is fuzzy, because LabVIEW never lets go of its loaded classes.

4) Unfortunately, LabVIEW does not understand OOP on the FPGA the way we would want, so it treats the method like a regular subVI.

5) True...

Congratulations again on your post.