FGV in packed lib cause leak

Solved! 03-18-2013 04:00 AM

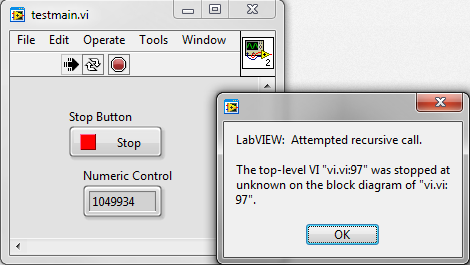

Since a update of a project from LV2009 to LV2011 there appeared a problem.

This is the message we got:

As part of the conversion we also converted the LLB in use to an LVLIBP.

We traced the problem to this LVLIBP and a dynamic call of an FGV in a VI owned by the LVLIBP.

The error is a bit misleading and strange, and it always occurs after roughly 1,040,000+ iterations.

We stripped the project down to a demonstration project.

03-19-2013 05:14 AM

You know that your demonstration project hogs the CPU as well as memory?

Since I don't have LV 2011 installed anymore and you don't provide the sources of the packed library, I cannot run your demo.

But from the code, I wouldn't expect the lvlibp to be the issue, but rather the way you are calling the whole thing.

Does your LV 2009 code, using the LLB and called in exactly the same way, return a different error? Something like "Out of Memory"?

Norbert

----------------------------------------------------------------------------------------------------

CEO: What exactly is stopping us from doing this?

Expert: Geometry

Marketing Manager: Just ignore it.

03-19-2013 05:20 AM

Thanks for your reply. We currently have new PCs with an i7, so one core at 100% doesn't bother me.

If that is a problem for anyone, they can add a wait, but then it takes longer before the problem occurs.

All source is included; the folder named "data buffer" contains the project and source files for the packed lib.

The old project in LV2009 and with the LLB doesn't return an error.

Bert

03-19-2013 05:29 AM

Bert,

the point is that your calls are configured to be reentrant and the subvi is configured to NOT share clones with previous instances. This will eat up your memory faster than anything else, depending on the content of the lvlibp-function.

So the settings of the call and the subVI seem to introduce the issue. Are you sure that your calling mechanics and reentrancy settings are really identical in LV 2009?

Norbert

03-19-2013 05:39 AM

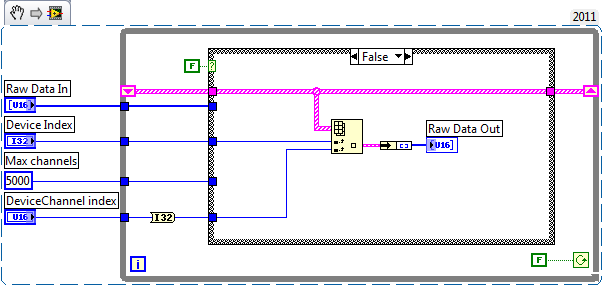

All there is in the lvlibp is this:

So I would expect the sub-VI runs once and is then removed from memory while "auto dispose ref" is true.

03-19-2013 08:40 AM - edited 03-19-2013 09:00 AM

Bert,

sorry, I hadn't seen that you already attached the code for the lvlibp in your first zip. So I rebuilt the lvlibp using LV 2012 and ran the demo.

I could reproduce the error you are seeing, also at about 1,048,500 iterations.

I find it very interesting that the number is quite close to 2^20 = 1,048,576. So I played around a little to see if it is connected to the options setting of the "Open VI Reference" function.
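As a quick sanity check on that observation, the arithmetic can be spelled out. The variable names are only illustrative, and the observed count is the approximate figure quoted above:

```python
# Approximate iteration count at which the error was reproduced (from the post).
observed_iterations = 1_048_500

# The suspected limit: 2 to the power of 20.
limit = 2 ** 20

# How far the observed count falls short of that limit.
gap = limit - observed_iterations

print(limit, gap)  # 1048576 76
```

A gap of only 76 iterations is what makes a 2^20-sized internal limit a plausible suspect, although the numbers alone do not prove it.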

And I want you to step back a little together with me and think about what you are doing, what you are EXACTLY doing (even though the error message is indeed a little misleading):

You are using "Open VI Reference" with option 0x8, which will automatically create a new clone per call. The subVI has to be configured to be reentrant for this, but it does not matter if you have chosen "share clones" or "preallocate". In fact, this option equals "preallocate".

In your untimed loop, you create a new instance of the subVI as fast as possible. Since the resources of the PC are limited (RAM, CPU cores and, last but not least, the maximum number of threads), LV will use a limited number of threads to execute the clones. So each of your threads will get many clones to execute.

Here we need some basics regarding OSes:

Current OSes most likely use a scheduler based on a multilevel feedback queue. So each thread is enqueued and executed according to several key performance parameters.

Now what happens if you have more clones than threads?

Here is my explanation (without collecting feedback from other developers):

Since more clones have to be handled than threads can be spawned, LV will create an "execution queue" for the working threads. So each clone will be enqueued for execution. I would guess there is a single queue per thread, but multiple threads could be used. So all clones are distributed between different threads.

Now you have a non-reentrant subVI (FGV) in your reentrantly called clones. This will force LV to lock all other clones until the current clone has finished executing the FGV. So essentially, you serialize execution of the clones by inserting a non-reentrant source.
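The serialization effect can be sketched outside LabVIEW. The following Python analogy is an assumption-laden illustration, not LabVIEW's implementation: the class `FunctionalGlobal` and the function `run_clones` are hypothetical names, threads stand in for clones, and a lock stands in for the non-reentrant FGV.

```python
import threading


class FunctionalGlobal:
    """Hypothetical stand-in for a non-reentrant FGV: one caller at a time."""

    def __init__(self):
        self._lock = threading.Lock()
        self._value = 0
        self._inside = 0      # callers currently inside the FGV
        self.max_inside = 0   # highest concurrency ever observed inside

    def increment(self):
        # The lock plays the role of non-reentrancy: other callers must wait.
        with self._lock:
            self._inside += 1
            self.max_inside = max(self.max_inside, self._inside)
            self._value += 1
            self._inside -= 1
            return self._value


def run_clones(count):
    """Start `count` threads ("clones") that all call the same FGV once."""
    fgv = FunctionalGlobal()
    threads = [threading.Thread(target=fgv.increment) for _ in range(count)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return fgv
```

However many threads are started, `max_inside` stays at 1: the shared resource admits one caller at a time, so the part of each clone that touches it runs strictly one after another.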

The lock mechanism eats up a short time, adding additional overhead to the execution of the current clone.

Since you still enqueue new clones, you will overflow one of the queues, which will return the error.
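A toy model of this hypothesis, with no claim about how LabVIEW's scheduler really works: if clones are spawned faster than they finish, the backlog grows by a fixed amount per time step and eventually exceeds any fixed capacity. The function name, the notion of a "tick" and the capacity parameter are all assumptions made for illustration.

```python
def ticks_until_overflow(spawn_per_tick, finish_per_tick, capacity):
    """Return the first tick at which the backlog exceeds `capacity`.

    Returns None when clones finish at least as fast as they are spawned,
    because the backlog then never grows.
    """
    if spawn_per_tick <= finish_per_tick:
        return None
    backlog = 0
    tick = 0
    while backlog <= capacity:
        tick += 1
        backlog += spawn_per_tick - finish_per_tick
    return tick


# Spawning 3 clones per tick while only 2 finish overflows a queue of 10.
print(ticks_until_overflow(3, 2, 10))  # 11
```

The model also shows why adding a wait to the caller only postpones the error under this hypothesis: a smaller surplus per tick means more ticks before the capacity is reached, but the backlog still grows as long as spawning outpaces completion.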

My main question on this is now:

Why would one ever want to have more than a very limited number of clones (<1000) running concurrently if they have to share resources???

Conclusion:

You are correct that LV doesn't seem to handle the situation in a way that points the user in a useful direction to solve the issue.

But on the other hand, this is an artificially designed situation and should never appear in a user application. At least, I cannot think of any application requiring so many clones running concurrently but sharing their resources.

hope this helps,

Norbert

Edit: Stopping after spawning about 1000 clones takes about a minute overall until all clones are finished. So I had to wait more than 30s for all remaining enqueued clones to finish.

03-19-2013 09:33 AM

One correction esp. regarding my previous Edit note:

This was when I changed the caller to use the more up-to-date mechanism to "call asynchronously" into the subVI using the option 0x80 (fire & forget). The caller still must wait for the clones to finish before it goes idle.

With the classic "Run VI" method you are using, stopping the caller happens immediately. But still, the clones are running. You can check by stopping the caller and trying to close LV. You will have to wait several minutes until all clones are finished and LV disappears. Most likely, you will rather use the Windows Task Manager to kill the LabVIEW.exe process.

Again, this is something which should not happen in a user application (main window goes idle, but process stops several minutes in the future or has to be shut down manually by force).

Norbert

03-20-2013 07:43 AM

Norbert,

The problem you describe is more likely the issue reported in this topic:

http://forums.ni.com/t5/LabVIEW/Leak-bug-dynamic-call-VI-without-FP/m-p/2353588

Your hypothesis about the issue sounds worth a try. It's easy to check with a queue: the caller enqueues each iteration number, and the sub-VI removes one element from the queue. According to your hypothesis, the number of elements on the queue should keep increasing. This isn't the case.
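The idea of that test can be sketched in Python, as an analogy only: threads stand in for the dynamically called sub-VIs, and `run_backlog_test` and `sub_vi` are hypothetical names rather than anything from the demo project.

```python
import queue
import threading


def run_backlog_test(iterations):
    """Enqueue one number per iteration and spawn a task that removes one.

    Returns (largest backlog seen by the caller, elements left at the end).
    """
    pending = queue.Queue()
    workers = []
    max_backlog = 0

    def sub_vi():
        # Each spawned task removes exactly one element from the queue.
        pending.get()

    for iteration in range(iterations):
        pending.put(iteration)
        worker = threading.Thread(target=sub_vi)
        worker.start()
        workers.append(worker)
        # If tasks were piling up, this number would keep growing.
        max_backlog = max(max_backlog, pending.qsize())

    for worker in workers:
        worker.join()
    return max_backlog, pending.qsize()
```

If spawned tasks were queuing up faster than they run, the first returned value would grow with the iteration count; a small, stable backlog indicates that the tasks keep pace with the caller.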

I also don't have the issue of waiting before the program is finished (all sub-VIs done), because there are no waiting sub-VIs when I stop the program.

Another test of your hypothesis is making the VI in the lvlibp reentrant. This gives the same issue: no improvement, it's only slower.

The demo is not an artificial situation. It's extracted from a project that has been running for several years at a customer. Only the speed of this demo is exaggerated; the real project shows the problem after 1 hour, which is not handy for testing. On the other hand, you suppose there are more than 1000 clones running; I don't think that is the case, and it would probably be unusual in common practice.

What is it used for? It's a sort of web server that gets incoming requests for posting data. For each request a task is spawned. A common approach, I think.

Bert

03-26-2013 07:50 AM

The problem is still not solved. Does anyone have a clue?

04-02-2013 09:42 AM

Hello Bert,

Would it be possible to share the source code related to this test with us?

I also don't have LV 2011 on my laptop and my other one (desktop) is just being updated with LV 2012 SP1. (clean reinstall)

This will allow me to do some tests on my side.

Thanks!

Thierry C - CLA, CTA - Senior R&D Engineer (Former Support Engineer) - National Instruments

If someone helped you, let them know. Mark as solved and/or give a kudo. 😉