Delay LabVIEW FPGA, between producing an output using a c9263 and reading it back using a c9239

Solved! 12-13-2016 03:59 PM

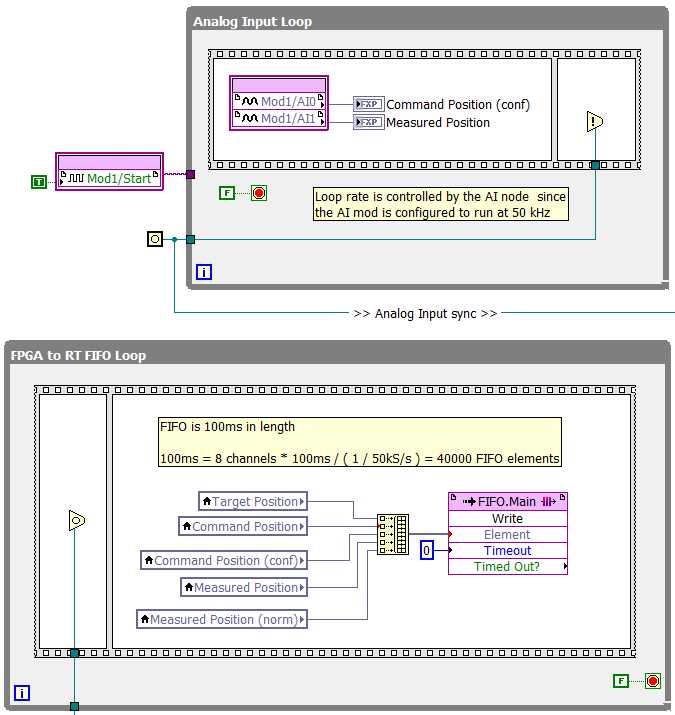

I'm working on an application that requires the output produced by a c9263 to be read back by a c9239 for confirmation. The c9239 is configured to run at 50 kS/s. I expect a small delay between when the output is set and when the confirmation input shows the change, but right now it's about 850 microseconds, which seems far longer than I'd expect. Is this a reasonable delay, or does it sound like there is a code issue? Here is a screenshot of the relevant code for reference. Thanks.

I saw my father do some work on a car once as a kid and I asked him "How did you know how to do that?" He responded "I didn't, I had to figure it out."

12-13-2016 05:23 PM

The c9239 is a sigma-delta digitizer, so there is a phase delay going through it. You can look in the manual for the input delay. By my calculations the input delay is 771 microseconds at 50 kS/s, so your measured delay does not seem outrageous. Not sure what the other 100 us of delay is from.

Cheers,

mcduff

12-13-2016 07:19 PM

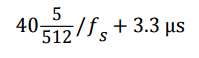

That's what I thought it was at first but the equation the manual gives...

... which I read as 40 * 5 / 512 / 50k + 3.3us worked out to 11.1125 microseconds. Am I not reading that correctly?

The 771 number makes sense as the 850 microseconds was just a quick estimate based off a data plot I was sent.

12-14-2016 10:35 AM

I had the same confusion as you. Here is the explanation. (Note: maybe I looked at a different set of specs, as my numbers were a bit different.) It is 40 5/512 written as a mixed number, that is, it is really 40.00977, not 40 times 5/512. Divide 40.00977 by 50000 and you get about 0.0008, and the unit of that division is seconds, not microseconds. Convert to microseconds and you get about 800 us, which is your answer. Does that make sense?
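For anyone checking the arithmetic, here is a minimal sketch of both readings of the formula as quoted in this thread. The constant and function names are made up for illustration, and the 3.3 us term is taken from the post above rather than re-checked against the manual.

```python
from fractions import Fraction

# Values quoted in the thread; names here are illustrative only.
FS_HZ = 50_000            # c9239 data rate, 50 kS/s
FIXED_OFFSET_S = 3.3e-6   # the "+ 3.3us" term quoted from the manual

def input_delay_us(numerator, fs_hz=FS_HZ, offset_s=FIXED_OFFSET_S):
    """Return numerator / fs + offset, converted from seconds to microseconds."""
    delay_s = float(numerator) / fs_hz + offset_s
    return delay_s * 1e6

# Reading 1: "40 5/512" as a mixed number, i.e. 40 + 5/512 = 40.00977...
mixed_us = input_delay_us(40 + Fraction(5, 512))

# Reading 2: "40 * 5/512" as a product, i.e. 0.390625 (the misreading)
product_us = input_delay_us(40 * Fraction(5, 512))

print(f"mixed number: {mixed_us:.1f} us")    # about 803.5 us
print(f"product:      {product_us:.4f} us")  # about 11.1125 us
```

The mixed-number reading lands near 800 us, which is in line with the roughly 850 us estimated from the plot, while the product reading gives the 11.1125 us figure.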

mcduff

12-14-2016 10:39 AM

Ah I see, that makes perfect sense, thanks.
