Data lost with TCP connection (and big data)

Solved! 09-12-2017 11:00 AM

Hello,

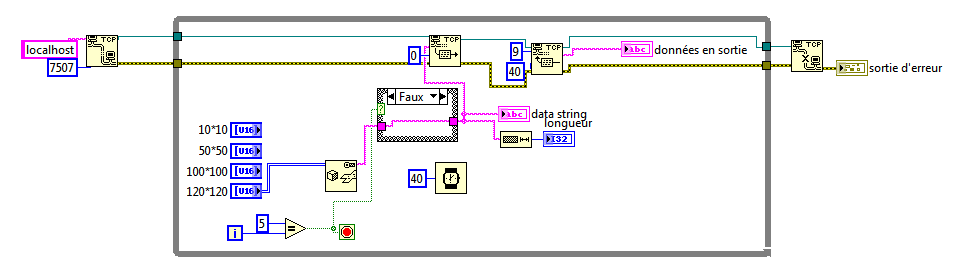

I am working on a project in which I have to transfer data from LabVIEW to Python. Python creates the server and launches the VI, using two threads, and LabVIEW is the client that sends the data.

With the current VI, I can send the data of a 100*100 table correctly. That is to say, each message received in Python contains the entire message: it begins with the size of the message, followed by the message itself.

But as soon as I use a bigger table (120*120, for example), I no longer receive the entire message. It looks as if I have reached the maximum size of the TCP connection's buffer. Is there a maximum size? If so, do you know what it is?

Can anybody help me understand why I am losing data just by changing the size of the table?

Thank you in advance

PS: I attached the Python file, but you don't need to look at it. I think the problem is really in the VI.

09-12-2017 11:44 AM

Don't start a new topic on the same subject. Stick to the old one here.

(Mid-Level minion.)

My support system ensures that I don't look totally incompetent.

Proud to say that I've progressed beyond knowing just enough to be dangerous. I now know enough to know that I have no clue about anything at all.

Humble author of the CLAD Nugget.

09-12-2017 03:29 PM

Billko,

Thanks for pointing out that the question was, in fact, answered earlier today in another post. I'm guessing that there may be a language problem, and that the Original Poster perhaps didn't understand the response suggesting that too much data was being sent in a single packet. A quick Web search suggests that the maximum size for a TCP/IP packet is 65535 bytes, but also recommends using much smaller packets (less than 10 KB).

Bob Schor

09-12-2017 11:34 PM - edited 09-12-2017 11:37 PM

There is no link between packet size and the size of the data you pass to the write primitive, at least none I am aware of. The TCP functions package up and transmit the data in whatever sizes make sense (or more likely, whatever sizes the OS takes).
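To illustrate the point above on the Python side: because TCP delivers a byte stream rather than whole messages, a single `recv()` call may return only part of what the sender wrote, so the reader has to loop until the announced size has arrived. The sketch below is not the Original Poster's code. It assumes the size prefix is a 4-byte big-endian unsigned integer, which may differ from what the VI actually sends, and the names `recv_exact`, `recv_message` and `demo` are made up for this example.

```python
import socket
import struct
import threading


def recv_exact(sock: socket.socket, n: int) -> bytes:
    """Read exactly n bytes, looping because recv() may return fewer."""
    chunks = []
    remaining = n
    while remaining > 0:
        chunk = sock.recv(min(remaining, 65536))
        if not chunk:
            # An empty result means the peer closed the connection.
            raise ConnectionError("socket closed before the full message arrived")
        chunks.append(chunk)
        remaining -= len(chunk)
    return b"".join(chunks)


def recv_message(sock: socket.socket) -> bytes:
    """Read one length-prefixed message.

    Assumed framing (not confirmed by the thread): a 4-byte big-endian
    unsigned length, followed by that many payload bytes.
    """
    (size,) = struct.unpack(">I", recv_exact(sock, 4))
    return recv_exact(sock, size)


def demo(payload_size: int = 120 * 120 * 8) -> bool:
    """Send one large message over a local socket pair and read it back."""
    client, server = socket.socketpair()
    payload = bytes(i % 251 for i in range(payload_size))

    def send() -> None:
        # The sender writes everything in one call; the receiver still
        # has to be prepared to get it in several pieces.
        client.sendall(struct.pack(">I", len(payload)) + payload)
        client.close()

    sender = threading.Thread(target=send)
    sender.start()
    try:
        received = recv_message(server)
    finally:
        sender.join()
        server.close()
    return received == payload


if __name__ == "__main__":
    print("round trip ok:", demo())
```

With a reader like this, the size of the table should not matter, since the loop keeps reading until the whole message is in.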

09-20-2017 01:46 AM

I see that a lot of people have visited this page. The solution is in my other post (flatten the data to a string, then send it in pieces):

https://forums.ni.com/t5/LabVIEW/Send-big-data-via-network-communication/m-p/3687979#M1037030
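For readers who only want the general idea without opening the link, here is a rough sketch of "flatten, then send in pieces", written in Python for illustration only (in this thread the sender is the LabVIEW VI). Everything in it is an assumption: the byte layout in `flatten_table` is hypothetical and is not claimed to match the output of LabVIEW's Flatten To String, and `CHUNK_SIZE`, `send_in_pieces`, `unflatten_table` and `demo` are names invented for the example.

```python
import socket
import struct
import threading

CHUNK_SIZE = 4096  # hypothetical piece size, chosen arbitrarily


def flatten_table(table):
    """Flatten a 2D list of floats into bytes.

    Hypothetical layout: rows and cols as big-endian uint32,
    then every value as a big-endian float64, row by row.
    """
    rows = len(table)
    cols = len(table[0]) if rows else 0
    values = [value for row in table for value in row]
    return struct.pack(f">II{rows * cols}d", rows, cols, *values)


def unflatten_table(data):
    """Inverse of flatten_table."""
    rows, cols = struct.unpack_from(">II", data, 0)
    values = struct.unpack_from(f">{rows * cols}d", data, 8)
    return [list(values[r * cols:(r + 1) * cols]) for r in range(rows)]


def send_in_pieces(sock, data, chunk_size=CHUNK_SIZE):
    """Send a 4-byte length prefix, then the data in fixed-size pieces."""
    sock.sendall(struct.pack(">I", len(data)))
    for start in range(0, len(data), chunk_size):
        sock.sendall(data[start:start + chunk_size])


def demo(rows=120, cols=120):
    """Round-trip a rows x cols table over a local socket pair."""
    table = [[float(r * cols + c) for c in range(cols)] for r in range(rows)]
    sender, receiver = socket.socketpair()

    def send():
        send_in_pieces(sender, flatten_table(table))
        sender.close()

    worker = threading.Thread(target=send)
    worker.start()
    # A buffered file object keeps reading until the requested number
    # of bytes has arrived (or the connection is closed).
    with receiver.makefile("rb") as stream:
        (size,) = struct.unpack(">I", stream.read(4))
        data = stream.read(size)
    worker.join()
    receiver.close()
    return unflatten_table(data) == table


if __name__ == "__main__":
    print("round trip ok:", demo())
```

The piece size only affects how the sender hands data to the operating system; the receiver still relies on the length prefix to know when the message is complete.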