Can typecast create error?

Solved! 01-10-2020 07:53 AM

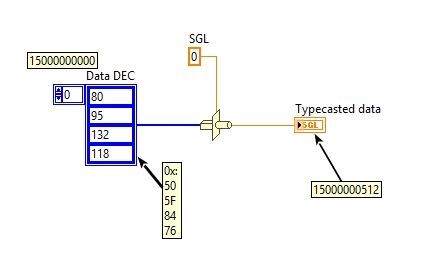

I am retrieving data from a database, consisting of an array of 4 bytes. The expected value from the database is 15000000000.

When I typecast the array to a single, somehow the value I end up with is 15000000512.

I have verified the data in the array by converting the bytes to hex (50 5F 84 76) and running them through the online converter at https://www.scadacore.com/tools/programming-calculators/online-hex-converter/, which works fine and returns the expected value of 1500000000.

Can someone help me understand what goes wrong with the typecast, and how I can convert the data correctly?

Please see picture below:

Best regards

Karsten Jørgensen

Denmark

- Tags:

- typecast

01-10-2020 08:39 AM

01-10-2020 08:40 AM

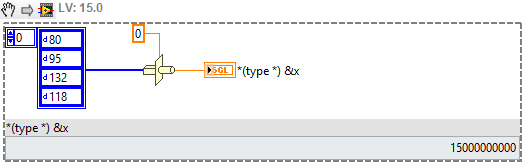

When I make that VI, I get 15000000000 as expected (not 1500000000, but that's probably a typo).

Either you misread the value, or there is some hidden error in your code that can't be spotted from an image.

Post the VI if you want us to have a look.

01-10-2020 08:42 AM

@cbutcher wrote:

I didn't experience the same thing using LabVIEW 2019 32-bit.

My numeric values are U8s. Is that the same for you?

I checked 16- and 32-bit integers, but that gives rubbish.

I used LV18 64-bit.

01-10-2020 08:50 AM - edited 01-10-2020 08:50 AM

While SGLs are 32 bits wide, 8 of those bits go to the exponent (and one to the sign), so once you get above 2^24 (roughly 7 digits), you can no longer represent every integer value. What you are seeing is the closest value that a SGL can represent, not an error. It is a different manifestation of the usual floating-point issues we see around here (large values instead of small).

Use a U32 or I32 instead and you will get the desired value, exactly.
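To make the precision argument concrete, here is a minimal Python sketch (not LabVIEW code) that decodes the four bytes from the question as a big-endian IEEE 754 single, which is assumed here to match what Type Cast does with a U8 array. The variable names are purely illustrative.

```python
import struct

raw = bytes([0x50, 0x5F, 0x84, 0x76])  # the four bytes from the question

# Reinterpret the bytes as a big-endian IEEE 754 single.
(value,) = struct.unpack(">f", raw)
as_int = int(value)

# Split the bit pattern into its exponent and mantissa fields.
bits = int.from_bytes(raw, "big")
exponent = ((bits >> 23) & 0xFF) - 127    # unbiased exponent
mantissa = (bits & 0x7FFFFF) | 0x800000   # 24-bit significand, implicit leading 1 restored
spacing = 2 ** (exponent - 23)            # gap between adjacent singles at this magnitude

print(as_int)                 # 15000000512
print(exponent, spacing)      # 33 1024
print(mantissa * spacing)     # 15000000512
print(15000000000 % spacing)  # 512, so 15000000000 is not a multiple of the spacing
print(bits)                   # 1348437110, the same bytes read as an unsigned 32-bit integer
```

At this magnitude adjacent singles are 1024 apart, and 15000000000 falls between two of them. The last line suggests that an integer type only returns the exact value if the database actually stores the number as an integer: these four bytes are a float bit pattern, and 15000000000 would not fit in 32 bits anyway.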

01-10-2020 08:59 AM - edited 01-10-2020 09:00 AM

[EDIT: Darin.K beat me to it]

------------------------------------------------------

I'm also seeing 15000000512 when using an array of U8's. LV2016.

Actually, I copied the SGL indicator, made it a control, and fiddled with incrementing. Sure enough, it looks like a SGL cannot exactly represent 15000000000, so it's stuck giving you one of the near neighbors it *CAN* represent.

-Kevin P
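The incrementing experiment can be approximated outside LabVIEW. The sketch below is Python, with a hypothetical helper `sgl_from_bits`; it steps the bit pattern 50 5F 84 76 down and up by one to list the neighboring singles, then rounds 15000000000 to single precision.

```python
import struct

def sgl_from_bits(bits: int) -> int:
    """Return the exact integer value of the single with this 32-bit pattern."""
    return int(struct.unpack(">f", bits.to_bytes(4, "big"))[0])

bits = 0x505F8476
below, here, above = (sgl_from_bits(bits + d) for d in (-1, 0, 1))
print(below, here, above)  # 14999999488 15000000512 15000001536

# Storing 15000000000 in a single rounds it to a representable neighbor.
stored = int(struct.unpack(">f", struct.pack(">f", 15000000000.0))[0])
print(stored)  # 15000000512
```

15000000000 sits exactly halfway between 14999999488 and 15000000512, and round-to-nearest-even picks the upper one.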

01-10-2020 09:10 AM

In both 2013 and 2018 I get 15000000000.

A sgl cannot represent all values, no argument there.

But it can represent 15000000000 on my computer. This is pretty well defined in IEEE 754, and should be exactly the same on all computers that use IEEE 754 floating-point numbers.

There must be a difference between your setup and ours.

Can you try the snippet cbutcher posted?

01-10-2020 09:14 AM

The clever folks at NI try to insulate us from these strange effects with a little trick. The default Display Format of a SGL is 6 digits. You need to push that up to see the actual value.

01-10-2020 09:15 AM - edited 01-10-2020 09:16 AM

Never mind. Found the problem.

The default display format for a float is 6 significant digits.

That means only the first six digits (150000) are shown and the remaining digits are always padded with 0, so the value is displayed as 15000000000.

When switching to digits of precision, I get 15000000512.

That is as expected, but it is sloppy of the online calculator, which apparently also uses 6 significant digits.

EDIT: That crossed...
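The display effect can be imitated in Python. This is only a stand-in for LabVIEW's display format, not its implementation, and `show_significant` is a hypothetical helper: it rounds the value to a given number of significant digits and prints the rest as zeros.

```python
def show_significant(x: float, digits: int) -> str:
    """Round x to `digits` significant digits, then print it without an exponent."""
    rounded = float(f"{x:.{digits - 1}e}")
    return f"{rounded:.0f}"

stored = 15000000512.0  # the value the SGL actually holds

print(show_significant(stored, 6))   # 15000000000
print(show_significant(stored, 11))  # 15000000512
```

With six significant digits the trailing 512 is rounded away, which is why the indicator appears to hold the expected value until the precision is raised.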

01-10-2020 09:18 AM
