
Can't log data into multiple column *.csv format

Solved!

08-23-2019 08:26 AM - last edited on 08-23-2019 12:45 PM by pcerda


So here's the deal. In the Beginning, NI created LabVIEW to help engineers get data from hardware and write programs to acquire and analyze those data. The data often came from "instruments" (hardware) that had very accurate clocks and "did the sampling" far more accurately than the PC could (what with Windows multitasking and trying to "be the boss").

At an early stage, NI created the Waveform data type for sampled data. It has two time parameters: t0, the (very precise) TimeStamp at which the first point was acquired, and dt, the (very accurate and precise) sampling interval, i.e. the time between successive samples. Note that with just these two numbers you don't need to store the time at which every point was sampled; it is easy, fast, and (space-)efficient to compute those times "on the fly" (t0, t0+dt, t0+dt+dt, ... t0 + (n-1)*dt).
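In LabVIEW the waveform is a built-in graphical data type, so there is no text code to quote. As a rough illustration only, here is a minimal Python sketch of the same idea, assuming a hypothetical `Waveform` class with `t0`, `dt`, and `y` fields (with `t0` simplified to a plain float in seconds rather than a LabVIEW timestamp). Only t0 and dt are stored; each sample time is computed on demand as t0 + i*dt.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Waveform:
    """Hypothetical Python stand-in for a LabVIEW waveform (not an NI API)."""
    t0: float       # time of the first sample, in seconds (simplified from a LabVIEW timestamp)
    dt: float       # sampling interval, in seconds
    y: List[float]  # the sampled values themselves

    def time_of(self, i: int) -> float:
        # Compute the i-th sample time on the fly instead of storing it.
        # Using t0 + i*dt (rather than adding dt repeatedly) avoids
        # accumulating floating-point rounding error over long records.
        return self.t0 + i * self.dt

    def times(self) -> List[float]:
        # Reconstruct the full time axis: t0, t0+dt, ..., t0+(n-1)*dt
        return [self.time_of(i) for i in range(len(self.y))]
```

For example, `Waveform(t0=10.0, dt=0.5, y=[1.0, 2.0, 3.0, 4.0]).times()` returns `[10.0, 10.5, 11.0, 11.5]`: four samples need only two stored time parameters, however long the record gets.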

Just over a decade ago, NI came up with a much-improved way of programming hardware, DAQmx. About 5 years later, they invented the Dreaded DAQ Assistant (I suspect because DAQmx was too complicated for their Sales Reps to demo how easy it is to collect data with LabVIEW). They also invented the Dynamic Data Wire (don't get me started ...). I recommend not using either of these!

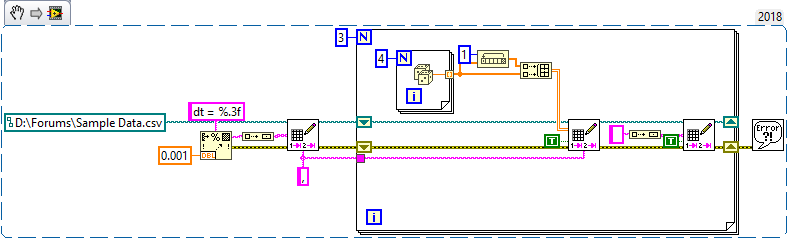

So here are some suggestions:

- Start your Spreadsheet with a "reminder", say a string "dt = 0.001"

- If you have 2 channels of N data points, write a 2D array without transpose, 2 rows, N columns.

- Write a blank row between successive 2D arrays to distinguish one "chunk" of data from another. When processing the data, you should then be able to figure out when to "skip a row" (or when a row is empty, possibly all zeros).
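A minimal Python sketch of the file layout suggested above, using the standard `csv` module (this is not the LabVIEW code attached to the post). The function names `write_chunks` and `read_chunks` are hypothetical, and the sketch assumes each chunk is a list of channels, one row per channel with N values each. The reader treats only truly empty rows as chunk separators; it does not handle the "all zeros" separator case.

```python
import csv
import io
from typing import List, TextIO, Tuple

Chunk = List[List[float]]  # one inner list (one CSV row) per channel


def write_chunks(f: TextIO, dt: float, chunks: List[Chunk]) -> None:
    """Hypothetical writer: a 'dt = ...' reminder row, then each chunk
    as rows (2 channels -> 2 rows of N columns), chunks separated by a blank row."""
    writer = csv.writer(f)
    writer.writerow([f"dt = {dt}"])  # the "reminder" at the top of the file
    for k, chunk in enumerate(chunks):
        if k > 0:
            writer.writerow([])  # blank row marks the start of the next chunk
        for channel in chunk:
            writer.writerow(channel)  # no transpose: one channel per row


def read_chunks(f: TextIO) -> Tuple[float, List[Chunk]]:
    """Hypothetical reader: recover dt and the chunks, skipping blank rows."""
    reader = csv.reader(f)
    header = next(reader)                 # e.g. ["dt = 0.001"]
    dt = float(header[0].split("=")[1])   # float() tolerates the leading space
    chunks: List[Chunk] = []
    current: Chunk = []
    for row in reader:
        if not row:                       # csv.reader yields [] for a blank line
            if current:
                chunks.append(current)
                current = []
            continue
        current.append([float(x) for x in row])
    if current:                           # the last chunk has no trailing blank row
        chunks.append(current)
    return dt, chunks
```

Usage: write into a file (or an `io.StringIO(newline="")`) with `write_chunks(f, 0.001, chunks)`, rewind, and call `read_chunks(f)` to get back `dt` and the list of chunks. Sample times within each chunk can then be rebuilt from dt rather than read from the file.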

Here's some code to try.

Bob Schor

08-23-2019 09:34 PM


Your explanation of t0 and dt is on point! Thanks for that.

I do enjoy your history lesson too. Keep being you Bob. You knights are freaking amazing.
