ASCII to decimal conversion

08-20-2018 12:48 PM

Hello there! I have an SR860 lock-in amplifier, which returns ASCII data packed as a 4-byte single-precision binary block in little-endian format. I am controlling it entirely through VISA, since there is no official driver for this instrument. Is there a way to convert the data to decimal numbers? My VI is set up to write the data to a text file. Although I can change the read buffer to display the data in hexadecimal, the text file is still in ASCII form. I've attached my VI and a text file of the data.

08-20-2018 01:14 PM - edited 08-20-2018 01:15 PM

You are trying to read a data block. That block of data is not ASCII; it is binary data. In the text file I see the common form for a block of data sent over a SCPI protocol. The first character is a #. The second character is how many characters are in the length. The next X characters are the length. From the length value, you know how many bytes to read to get your data. Unfortunately, the data does not look correct to me at all. So I have a feeling there is something else happening, or you did not tell us the right format of the data.
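For readers working outside LabVIEW, the header layout described above can be sketched in a few lines of Python. This is only an illustration: the function name `parse_block_header` and the sample bytes are made up here, and the sketch assumes the header is exactly `#`, one digit-count character, then that many ASCII length digits.

```python
def parse_block_header(raw: bytes) -> tuple:
    """Return (payload_offset, payload_length) for a '#<n><length>' header.

    Hypothetical helper, not taken from any instrument driver.
    """
    if raw[:1] != b"#":
        raise ValueError("block does not start with '#'")
    # Second character: how many length digits follow.
    n_digits = int(raw[1:2].decode("ascii"))
    if n_digits == 0:
        raise ValueError("a zero digit count is not handled in this sketch")
    # Next n_digits characters: payload length in bytes.
    length = int(raw[2:2 + n_digits].decode("ascii"))
    return 2 + n_digits, length


# Made-up reply: header "#15" followed by a 5-byte payload.
reply = b"#15hello"
offset, length = parse_block_header(reply)
payload = reply[offset:offset + length]
print(offset, length, payload)
```

Once the offset and length are known, the payload can be sliced out and interpreted separately, which keeps the framing logic apart from the question of what data type the bytes hold.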

There are only two ways to tell somebody thanks: Kudos and Marked Solutions

Unofficial Forum Rules and Guidelines

"Not that we are sufficient in ourselves to claim anything as coming from us, but our sufficiency is from God" - 2 Corinthians 3:5

08-20-2018 02:16 PM

The instrument manual says that the # piece is in the form #nccccxxxxxxx, where the n specifies the number of digits to follow the block length count, cccc is the n-digit integer size of the binary block to follow, and xxxxxxx is the cccc-byte binary data... packed in the format mentioned above. Should this tell me the type of data it is? And what is the image you included with the answer? Thanks for your help!

08-21-2018 08:36 AM - edited 08-21-2018 09:03 AM

Well, with the additional instructions on the format you provided, I was able to read one data block. It may have been "mis-sized", because there were 362 unread bytes at the end of the file, some of which read "Stanf<10 spaces>ord_Research_Systems,SR860,003279,V1.47<CR><LF>1.000000" (and that was the last of the file). If I counted correctly, there may have been an additional 296 bytes (or 74 Sgls) of data that weren't counted in the 65536 Byte Count at the front of the file.

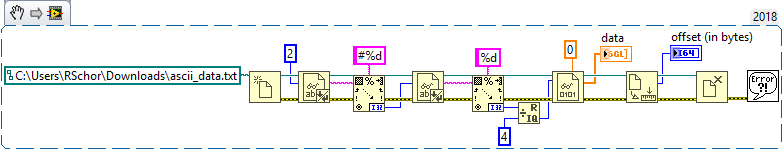

Here's the code I used. It is very similar to crossrulz's, but I use Scan from String and read directly into Sgls (and get reasonable values).

Bob Schor

P.S. -- Oops, I goofed. My Read Binary Data is missing the Little-Endian switch. The data "looked good" when I viewed the numbers, but that was a "formatting trick" because I didn't see the "trailing exponential", which varied in an "unreasonable" way. Now the numbers start off with values around 10^-6, but if you scan the entire array, there are still areas where it varies somewhat wildly.

Do you have any ideas what reasonable Sgl numbers should be in this file? [Oh, I should have mentioned that the way I got an Array of Sgl was to put a Sgl constant for the Type Specifier in the Binary Read and to specify reading multiple bytes. I apologize for not labeling the constant as "Sgl".]
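The byte-order slip described in the P.S. is easy to reproduce in text form. The sketch below uses Python's `struct` module rather than LabVIEW, and the three sample values are invented for illustration (they are not from the attached file). It packs them as little-endian 4-byte singles, then unpacks the same bytes both ways.

```python
import struct

# Invented sample values in the microvolt range; not taken from the thread's file.
values = [1.5e-6, 2.0e-6, -3.25e-6]

# Pack as little-endian 4-byte singles, the format the original post describes.
payload = struct.pack("<3f", *values)

# Same bytes, two interpretations.
little = struct.unpack("<3f", payload)  # matching byte order
big = struct.unpack(">3f", payload)     # byte order swapped by mistake

for sent, le, be in zip(values, little, big):
    print(f"sent {sent:.3e}   little-endian {le:.3e}   big-endian {be:.3e}")
```

With the wrong byte order the exponent bits come from the wrong end of each value, so the magnitudes typically jump around in the "unreasonable" way described above.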

08-21-2018 09:17 AM

@ab7643 wrote:

The instrument manual says that the # piece is in the form #nccccxxxxxxx, where the n specifies the number of digits to follow the block length count, cccc is the n-digit integer size of the binary block to follow, and xxxxxxx is the cccc-byte binary data... packed in the format mentioned above. Should this tell me the type of data it is? And what is the image you included with the answer?

Yes, that is what I described above. No, it does not say what the type of data actually is in the data block.

The image I included is a snippet of the code I quickly put together to read the file you attached. If you were using LabVIEW 2018 (which is the version I made the snippet in) or newer, you could save a copy of that image and drag it onto your block diagram and you magically have the code.

08-21-2018 09:56 AM

Please note the flavor of "write" he was using that could be corrupting the file.

Maybe better to use a straight out write binary.

Ben

08-21-2018 10:25 AM

@Ben wrote:

Please note the flavor of "write" he was using that could be corrupting the file.

Maybe better to use a straight out write binary.

Ben

Right, he has "Convert EOL" enabled. Disable it to avoid accidental conversion when encountering binary data that has the same value as an EOL character.
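To see why one converted byte is so damaging, here is a rough Python simulation. It is an assumption-laden sketch: the thread does not state the exact rule "Convert EOL" applies, so the hypothetical `simulate_eol_conversion` below simply expands a chosen byte (0x0D by default, matching the 0x0D0A to 0x0D repair reported later in the thread) into 0x0D 0x0A. The sample bytes are invented.

```python
import struct


def simulate_eol_conversion(data: bytes, trigger: bytes = b"\r") -> bytes:
    """Expand every `trigger` byte to CR LF.

    Hypothetical stand-in for an EOL-converting text write; the real
    LabVIEW rule may differ.
    """
    return data.replace(trigger, b"\r\n")


# Four little-endian singles; the second one happens to contain a 0x0D byte.
clean = (
    struct.pack("<f", 1.0)
    + bytes([0x0D, 0x00, 0x80, 0x3F])
    + struct.pack("<2f", 2.0, 3.0)
)
corrupted = simulate_eol_conversion(clean)

# Decode as many whole 4-byte values as each buffer holds.
usable = len(corrupted) - len(corrupted) % 4
before = struct.unpack("<4f", clean)
after = struct.unpack(f"<{usable // 4}f", corrupted[:usable])

print(len(clean), len(corrupted))  # 16 17
print(before)
print(after)
```

The single inserted byte shifts every later value off its 4-byte boundary, so everything after the first affected value decodes to nonsense, not just the one value that contained the EOL byte.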

(Mid-Level minion.)

My support system ensures that I don't look totally incompetent.

Proud to say that I've progressed beyond knowing just enough to be dangerous. I now know enough to know that I have no clue about anything at all.

Humble author of the CLAD Nugget.

08-21-2018 01:41 PM

@Ben wrote:

Please note the flavor of "write" he was using that could be corrupting the file.

Very nice catch! And there are a bunch of 0x0D0A in that file. So the value got converted quite a bit.

@Ben wrote: Maybe better to use a straight out write binary.

Just make sure the "prepend array or string size" input is set to FALSE. Or you can just turn off "Convert EOL" on the string write. It works the same either way.

08-21-2018 01:46 PM - edited 08-21-2018 01:49 PM

Using Ultra Edit, I replaced all of the 0x0D0A with 0x0D and got something that actually looks promising.
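The same find-and-replace can be sketched in Python for anyone without a hex editor. The helper name `undo_eol_expansion` and the sample bytes are made up for illustration. Note that this kind of repair is a best guess: a 0x0D 0x0A pair that was genuinely present in the instrument data cannot be told apart from a converted one, so re-acquiring the data with the conversion disabled is the safer route.

```python
def undo_eol_expansion(data: bytes, original: bytes = b"\r") -> bytes:
    """Collapse every CR LF pair back to a single byte.

    `original` defaults to 0x0D, the replacement reported in the thread.
    Hypothetical helper; genuine CR LF pairs in the data are collapsed too.
    """
    return data.replace(b"\r\n", original)


# Made-up damaged fragment: a 0x0D that was expanded to 0x0D 0x0A.
damaged = b"\x00\x00\x80\x3f\r\n\x00\x80\x3f"
repaired = undo_eol_expansion(damaged)
print(len(damaged), len(repaired))  # 9 8
print(repaired.hex())
```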

08-21-2018 01:55 PM

That looks like it was a classic case of "Garbage in Garbage out". Once you toss the trash it works as expected.

Ben