
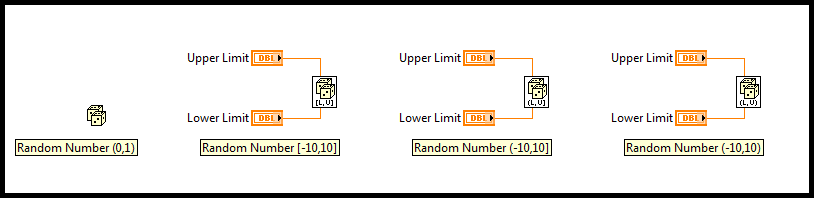

Random number function with option of including/excluding the endpoints

Submitted by moderator1983 on 02-12-2014 10:06 AM


Status: Declined

I have seen the earlier request to improve the Random Number function. Building on that, my wish is for an option to include or exclude each endpoint of the output range, just like the In Range and Coerce function offers.
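To make the requested behavior concrete, here is a rough text-language sketch in Python, since a LabVIEW block diagram cannot be pasted as text. It is only an illustration of the idea, not how LabVIEW or any NI function works: the name `random_in_range`, its parameters, and the 2**53-step grid are all assumptions made for this example.

```python
import random


def random_in_range(lower, upper, include_lower=True, include_upper=False,
                    rng=random):
    """Return a random float between lower and upper.

    Hypothetical helper: include_lower / include_upper decide whether each
    endpoint may be returned, similar in spirit to the endpoint options of
    In Range and Coerce. rng is anything with a randrange() method.
    """
    if not lower < upper:
        raise ValueError("lower must be less than upper")

    steps = 2 ** 53  # assumed grid resolution: 2**53 + 1 candidate points
    while True:
        k = rng.randrange(steps + 1)  # integer in 0..steps, both inclusive
        if k == 0:
            value = lower             # exact lower endpoint
        elif k == steps:
            value = upper             # exact upper endpoint
        else:
            value = lower + (upper - lower) * (k / steps)
            # Guard against floating-point rounding pushing past the bounds.
            value = min(max(value, lower), upper)

        # Reject an endpoint the caller asked to exclude, then draw again.
        if value == lower and not include_lower:
            continue
        if value == upper and not include_upper:
            continue
        return value


if __name__ == "__main__":
    # Closed interval [0, 1]: both endpoints are possible results.
    print(random_in_range(0.0, 1.0, include_lower=True, include_upper=True))
    # Open interval (0, 1): neither endpoint can be returned.
    print(random_in_range(0.0, 1.0, include_lower=False, include_upper=False))
```

Rejecting and redrawing keeps the excluded endpoints out without shifting the rest of the distribution. With a grid this fine, either endpoint comes up only about once in 2**53 draws, so the option matters mostly when code downstream cannot tolerate an exact 0 or 1 (for example, taking a logarithm of the result).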


National Instruments will not be implementing this idea. See the idea discussion thread for more information.