View Ideas...

Labels

-

Analysis & Computation

305 -

Development & API

2 -

Development Tools

1 -

Execution & Performance

1,027 -

Feed management

1 -

HW Connectivity

115 -

Installation & Upgrade

267 -

Networking Communications

183 -

Package creation

1 -

Package distribution

1 -

Third party integration & APIs

290 -

UI & Usability

5,456 -

VeriStand

1

Idea Statuses

- New 3,058

- Under Consideration 4

- In Development 4

- In Beta 0

- Declined 2,640

- Duplicate 714

- Completed 336

- Already Implemented 114

- Archived 0

Turn on suggestions

Auto-suggest helps you quickly narrow down your search results by suggesting possible matches as you type.

Showing results for

Options

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report to a Moderator

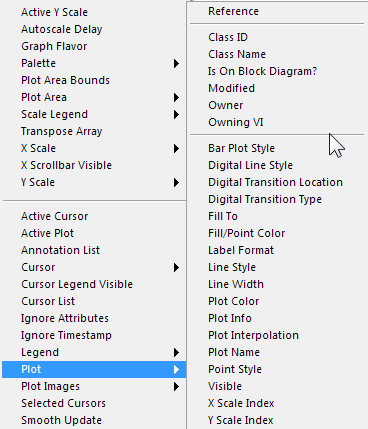

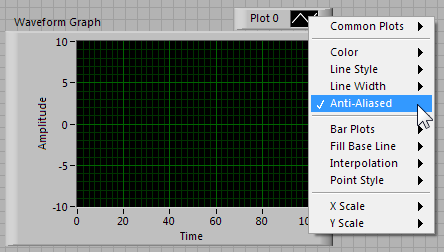

Programmatically set "Anti-Aliased" for graph plots

Submitted by

GregSands

on

06-10-2009

07:25 PM

9 Comments (9 New)

GregSands

on

06-10-2009

07:25 PM

9 Comments (9 New)

Status:

Completed

Available in LabVIEW 2012

Subject says it all.

Labels:

9 Comments

You must be a registered user to add a comment. If you've already registered, sign in. Otherwise, register and sign in.