cRIO Poor Performance - Where have my MIPS gone?

10-16-2014 09:38 PM

I have a cRIO-based system that is used to control a motor for a particular application. The application has been developed and enhanced over the years and is currently using about 50% of the CPU. The RT Controller is a cRIO-9012. I have recently been asked to add a 1 kHz (or more) loop function to the cRIO application. I can only achieve a maximum loop rate of about 200 Hz. When I told the customer this, he asked how fast my controller was, to which I replied 400 MHz. “Where is all the CPU power going?” he asked. He's now thinking of replacing the cRIO Controller with an mbed running C code to get the performance he requires, which is a pity since I'd like to continue developing the application in LabVIEW.

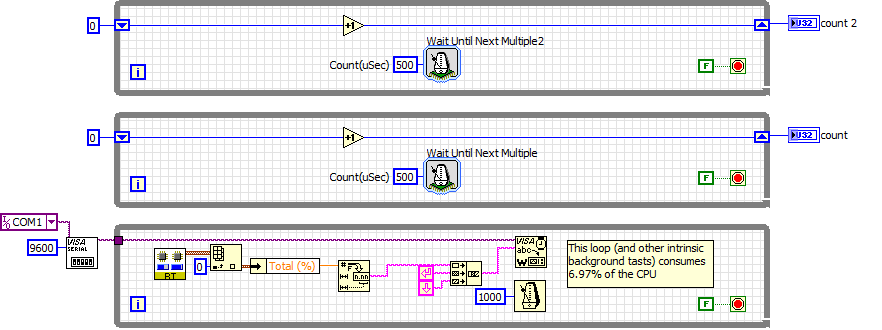

Following on from his question, “where is all the CPU power going?”, I decided to write a simple application to test the cRIO 9012's performance. Below is the code I used to perform the evaluation:

With just the bottom loop running, which reports CPU load over the cRIO Controller's serial port, I have a CPU load of 7.0%. This is the baseline.

I then added the "execution" loops shown above the bottom loop, one at a time, and recorded the CPU load. Here are the results:

1 Loop - 18.3% load (11.3% extra)

2 Loops - 29.4% load

3 Loops - 45.5% load

4 Loops - will not run!
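As a rough cross-check of these figures, the sketch below works out how much CPU each successive loop adds on top of the previous measurement. The load values are the ones reported above; the function and variable names are illustrative only.

```python
# Measured total CPU load (%) from the post, keyed by number of "execution" loops.
BASELINE_LOAD = 7.0  # reporting loop only
measured_load = {1: 18.3, 2: 29.4, 3: 45.5}


def incremental_loads(baseline, loads):
    """Return the extra CPU load (%) contributed by each successive loop."""
    increments = {}
    previous = baseline
    for loop_count in sorted(loads):
        increments[loop_count] = round(loads[loop_count] - previous, 1)
        previous = loads[loop_count]
    return increments


increments = incremental_loads(BASELINE_LOAD, measured_load)
for loop_count, extra in increments.items():
    print(f"loop {loop_count}: +{extra}% CPU")
```

On these numbers the first two loops each add about 11%, while the third adds noticeably more, so the cost per loop does not appear to be strictly constant.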

I have two problems/concerns.

Concern 1

The cRIO 9012 has a 400 MHz processor, which has 760 MIPS of processing power. The rate of the simple loop is 2 kHz and each loop takes about 11% of the CPU power. That is, each loop uses up 83.6 MIPS and each loop iteration uses up 41,800 instruction cycles. Where are the 41,800 instructions going? Even if there was a context switch after each loop iteration, this would account for 150 to 200 instruction cycles. Each loop is only doing an integer increment, timing check, compare and branch. These should only take up about 4 instruction cycles (8 if you want to be generous). If this was programmed in C, you could get bare metal performance that allows a single loop rate of something like 40 MHz or with an RTOS something like 2 MHz. Instead, my maximum loop rate is something like 20 kHz.

Where are the "wasted" 41,600 instructions per loop iteration going? This is only 0.5% efficient!
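The arithmetic in Concern 1 can be reproduced with a few lines of Python. This is only a sketch under the post's own assumptions: the 760 MIPS rating, an 11% load per loop, a 2 kHz loop rate, and roughly 200 instructions for a context switch. The names used are hypothetical.

```python
PROCESSOR_MIPS = 760          # rating quoted in the post for the cRIO-9012
LOOP_RATE_HZ = 2_000          # rate of each test loop
LOAD_PER_LOOP = 0.11          # about 11% CPU per loop, as measured
USEFUL_INSTRUCTIONS = 200     # assumed upper bound: loop body plus a context switch


def instructions_per_iteration(cpu_load_fraction, mips, loop_rate_hz):
    """Instructions consumed by one loop iteration at the given CPU load."""
    return cpu_load_fraction * mips * 1e6 / loop_rate_hz


per_iteration = instructions_per_iteration(LOAD_PER_LOOP, PROCESSOR_MIPS, LOOP_RATE_HZ)
wasted = per_iteration - USEFUL_INSTRUCTIONS
efficiency_percent = 100.0 * USEFUL_INSTRUCTIONS / per_iteration

print(f"instructions per iteration: {per_iteration:,.0f}")
print(f"unaccounted for:            {wasted:,.0f}")
print(f"efficiency:                 {efficiency_percent:.2f}%")
```

The result depends entirely on how well a MIPS rating maps to real instruction throughput, so it should be read as an order-of-magnitude estimate.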

Concern 2

Why does adding the 4th "execution" loop cause the application to halt (or at least not send data over the serial port)?

I like programming on the desktop using LabVIEW and I like programming the FPGA using LabVIEW. The RT Controller is however becoming an embarrassment. Is it really the case that the best additional loop rate I can add to an existing application that already uses 50% of the cRIO Controller's CPU can only be 200 Hz maximum?

10-17-2014 03:35 PM

Hi Vitoi,

Have you considered using timed loops instead of while loops? That should give you faster loop rates.

Applications Engineer

National Instruments

10-17-2014 05:09 PM - edited 10-17-2014 05:10 PM

Hey there vitoi,

The two pieces likely responsible for the behavior you see are the execution context of the "execution" loops and the implementation behind Wait Until Next Multiple.

Execution Context

Every "execution" loop you place on the block diagram will likely execute within the same LV execution system. The Wait Until Next Multiple within each loop is configured with "same as caller" for its preferred execution system, which is what most subVIs are configured for. As such, it will use the same execution system as the top level VI that called it. There is a good possibility that your top level VI is also configured for the default "same as caller", in which case it will end up executing in "standard". Asking the standard execution system to handle Wait Until Next Multiple in 4+ simultaneous 2 kHz loops is asking quite a bit considering how Wait Until Next Multiple is implemented (explained in the next section). I'd recommend moving your independent loops into their own subVIs and then configuring those VIs to run in their own execution systems (execution system specification "other 1" and "other 2").

Wait Until Next Multiple

The Wait Until Next Multiple seems simple but actually has more calling overhead than one might think. It was designed with portability (multiple hardware platforms and clocks), flexibility (multiple counter units), and error handling in mind. It is more concerned with maintaining safe and accurate timing than with the lowest possible latency. As such, all of these considerations come at a cost (execution overhead). Typically this overhead is not noticeable when compared to the execution time of other tasks in the loop, but it is certainly more noticeable when it is executing as a standalone task in a high frequency loop. I would not expect the Wait Until Next Multiple to be the primary source for increased execution time within a loop.
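For readers unfamiliar with the primitive, the basic idea of "wait until the timer reaches the next multiple of a period" can be sketched in Python as below. This is an illustration of the concept only, not NI's implementation: the function names are hypothetical, the strictly-greater rounding rule is an assumption, and a desktop `time.sleep` has none of the determinism of an RT target.

```python
import time


def next_multiple(now_us, period_us):
    """Smallest multiple of period_us strictly greater than now_us (assumed rule)."""
    return (now_us // period_us + 1) * period_us


def monotonic_us():
    """Current monotonic clock value in whole microseconds."""
    return time.monotonic_ns() // 1000


def wait_until_next_multiple(period_us, clock_us=monotonic_us):
    """Sleep until the clock reaches the next multiple of period_us; return that tick."""
    target_us = next_multiple(clock_us(), period_us)
    remaining_us = target_us - clock_us()
    if remaining_us > 0:
        time.sleep(remaining_us / 1_000_000)
    return target_us


def run_loop(iterations, period_us):
    """Mimic the test loop: increment a counter, then wait for the next period boundary."""
    counter = 0
    for _ in range(iterations):
        counter += 1
        wait_until_next_multiple(period_us)
    return counter


print(run_loop(iterations=5, period_us=500))
```

Even in this stripped-down form, every iteration reads the clock twice and makes a sleep call, which hints at why the wait can dominate a loop that otherwise does almost nothing.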

However, if you are concerned with Wait Until Next Multiple overhead, you can use the configured call library function node in my attached VI instead. This is the library called in the Wait Until Next Multiple configuration in your example. It's important to note that this approach assumes you are only using microsecond inputs and are executing on a VxWorks target, and it will not handle any errors thrown by the library it calls (although those would be pretty unlikely to begin with). This solution will have less calling overhead, but it will not be as robust as Wait Until Next Multiple.

10-17-2014 06:19 PM

The thread swapping is most likely where you are running into issues. There is a really nice chart covering the execution systems, timed loops and other processes in an RT system, which also shows how many threads are allowed for each. This chart can be found in the cRIO Developer Guide. I think each execution system-priority combination is allowed 4 threads, so that is likely where your issues are coming from. If you change to Timed Loops, each one has a single thread and runs at a higher priority than High Priority threads.

10-19-2014 10:49 PM

Thanks for all the feedback.

MajorTom,

Changing to timed loops instead of while loops makes the performance worse. For 2 Loops, rather than a CPU load figure of 29.4% (22.4% after removing base load) it shoots up to 78.4% (71.4% after removing base load). That is, it runs about 3 times slower, which takes the "efficiency" down from 0.5% to 0.15% efficient.

TimothyA,

I tried making the "execution" loops subVIs (with Preferred Execution System = other 1, with the top level = other 2) and that solved the four "execution" loops problem. Thanks, one of my concerns is now resolved (I'll mark it as such once the conversation quietens down).

The execution time is still large with the 2 loops taking 29.1% of the CPU, which is the same as before.

I tried using the "reduced" us wait next multiple CLN.vi, but it appears to be in LabVIEW 2014 and I'm using LabVIEW 2013. Any chance of resaving it as LabVIEW 2013?

crossrulz,

Thank you for pointing me to the table in the CompactRIO Developer's Guide. I assume you’re talking about Figure 3.5, Priorities and Execution Systems available in LabVIEW Real-Time. I didn't realise that Execution Systems are limited in the number of threads (it would be nice to get a warning when this limit is hit). This will make interesting reading and experimentation.

All,

I’ve tried various loop rate limiting methods, including Timed Loops and While Loops with RT Wait Until Next Multiple, RT Wait, Wait Until Next Multiple and Wait, and the best performance is from the two RT waits. The worst performance was from the Timed Loops.

In summary, I’ve solved the problem of how to run more parallel loops, but I still get very poor performance, with each loop iteration taking about 41,800 instructions when it “should” take more like 200 instruction cycles. All I want is to be able to run a loop at a minimum of 1 kHz on my cRIO-9012 when I already have an application that takes up about 50% of the CPU. I have the threat of the code being moved to an mbed using C, which I’m trying to resist. Surely a 400 MHz controller can have a 1 kHz loop and not take up more than 10% of the CPU.
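To put the 10% target in context, the sketch below converts a per-iteration instruction count into a CPU share at 1 kHz. It assumes the 760 MIPS rating quoted earlier and that the per-iteration overhead measured in the 2 kHz test stays the same at 1 kHz. It covers only the loop-timing overhead of the test VI, not whatever work a real 1 kHz loop would perform. The names are illustrative.

```python
PROCESSOR_MIPS = 760  # rating quoted earlier in the thread


def cpu_share_percent(instructions_per_iteration, loop_rate_hz, mips=PROCESSOR_MIPS):
    """CPU share (%) consumed by a loop with the given per-iteration cost."""
    return 100.0 * instructions_per_iteration * loop_rate_hz / (mips * 1e6)


TARGET_RATE_HZ = 1_000
measured_share = cpu_share_percent(41_800, TARGET_RATE_HZ)  # overhead seen in the test VI
expected_share = cpu_share_percent(200, TARGET_RATE_HZ)     # the "should take" figure

print(f"measured overhead at 1 kHz: {measured_share:.2f}% CPU")
print(f"expected overhead at 1 kHz: {expected_share:.4f}% CPU")
```

Under these assumptions the measured overhead alone would use roughly half of a 10% budget before any application logic runs in the loop.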

10-20-2014 09:26 AM

Hey vitoi,

Sorry for the delay. I've attached the 2013 version on this post.

I'm curious to see what performance you have for this implementation.

10-21-2014 07:32 PM

Thanks TimothyA, I have trialled the provided call library function and it does improve performance.

With 2 Loops, 500 μs loop rate, using RT Wait Until Next Multiple, as previously reported, the CPU load is 22.4% (after allowing for the base load).

With 2 Loops, 500 μs loop rate, using the provided call library function, the CPU load is 9.75% (after allowing for the base load).

So a 2.3x improvement in performance.
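The same comparison can be expressed per loop iteration. The sketch below takes the two net CPU loads reported above (both measured with 2 loops at a 500 μs period, i.e. 2 kHz) and the 760 MIPS rating from earlier in the thread; the function name is hypothetical.

```python
PROCESSOR_MIPS = 760
LOOP_RATE_HZ = 2_000   # 500 microsecond period
LOOP_COUNT = 2

# Net CPU load (%) for both loops together, after removing the 7.0% base load.
NET_LOAD_RT_WAIT = 22.4    # RT Wait Until Next Multiple
NET_LOAD_CLN = 9.75        # call library function node


def instructions_per_loop_iteration(net_load_percent, loop_count, loop_rate_hz, mips):
    """Average instructions per iteration of one loop, given the combined net load."""
    load_per_loop = net_load_percent / 100.0 / loop_count
    return load_per_loop * mips * 1e6 / loop_rate_hz


rt_wait_cost = instructions_per_loop_iteration(
    NET_LOAD_RT_WAIT, LOOP_COUNT, LOOP_RATE_HZ, PROCESSOR_MIPS)
cln_cost = instructions_per_loop_iteration(
    NET_LOAD_CLN, LOOP_COUNT, LOOP_RATE_HZ, PROCESSOR_MIPS)
speedup = NET_LOAD_RT_WAIT / NET_LOAD_CLN

print(f"RT wait: {rt_wait_cost:,.0f} instructions per iteration")
print(f"CLN:     {cln_cost:,.0f} instructions per iteration")
print(f"speedup: {speedup:.1f}x")
```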

The good news is that the performance is now 2.3x better; the bad news is that it should be possible to run such a simple loop 500x faster (context switching RTOS) or 10,000x faster (bare metal C code). So, I still wonder why each loop iteration takes 18,000 instruction cycles when it should take about 200 (context switching RTOS) or 10 (bare metal C code). Why is it running at about 1% efficiency compared to RTOS C code?

The status so far:

1) I know why five parallel loops will not run and how to fix it. (Thanks TimothyA and crossrulz.)

2) Where are my MIPS going? It takes about 18,000 instruction cycles per loop iteration when it "should" only take about 200. This limits the maximum simple-loop rate to 22 kHz, when the 400 MHz processor should be able to achieve a 4,000 kHz simple-loop rate.

10-21-2014 07:40 PM - edited 10-21-2014 07:42 PM

10-28-2014 11:45 PM

Looks like this topic has quietened down so I thought I'd provide a summary. (Additional information for this puzzle is still welcome.)

The poor cRIO performance was observed in a previous post by myself about 2.5 years ago: https://forums.ni.com/t5/LabVIEW-Embedded/LabVIEW-Embedded-Performance-Testing-Different-Platforms/t...

At that stage, it was identified that the cRIO-9012 was about 20% efficient for a simple application. It would appear that the main culprit is the loop (whether it is a For Loop or a Timed Loop). As observed here, for the simplest of loops, efficiency can be as low as 0.5%.

The cRIO-9012 has a 400 MHz processor that is capable of 760 MIPS. For a 760 MIPS processor, we would expect for the very simple loop in question, to perform as follows:

1) No operating system C code: 76 MHz loop rate (100%)

2) RTOS C code: 3.8 MHz (5%)

3) LabVIEW: 22 kHz (0.03% relative to 1 and 0.6% relative to 2)
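The percentages in this list follow directly from the three loop rates. A short sketch of that calculation is shown below; the rates are the ones stated in the list, and the dictionary keys and function name are illustrative.

```python
# Loop rates (Hz) for the simple test loop, as stated in the summary.
loop_rates_hz = {
    "bare-metal C": 76e6,
    "RTOS C": 3.8e6,
    "LabVIEW RT": 22e3,
}


def relative_percent(rate_hz, reference_hz):
    """Express one loop rate as a percentage of a reference loop rate."""
    return 100.0 * rate_hz / reference_hz


rtos_vs_bare = relative_percent(loop_rates_hz["RTOS C"], loop_rates_hz["bare-metal C"])
lv_vs_bare = relative_percent(loop_rates_hz["LabVIEW RT"], loop_rates_hz["bare-metal C"])
lv_vs_rtos = relative_percent(loop_rates_hz["LabVIEW RT"], loop_rates_hz["RTOS C"])

print(f"RTOS C vs bare-metal C:     {rtos_vs_bare:.1f}%")
print(f"LabVIEW RT vs bare-metal C: {lv_vs_bare:.2f}%")
print(f"LabVIEW RT vs RTOS C:       {lv_vs_rtos:.1f}%")
```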

Given that we are running an RTOS and that there are many benefits in using an RTOS, the fair comparison would be 3 relative to 2.

The question that remains is, why is LabVIEW so inefficient? It's quite sad to think that a very simple loop on a 760 MIPS processor can only run at 22 kHz (50 kHz with the call library wait) when 3.8 MHz should be possible. Fortunately, for more intensive loops the inefficiency is not so severe; however, it appears that we still pay a roughly 5x penalty for running LabVIEW relative to C programming, at least on an embedded platform. NI, is there room for improvement?

12-12-2014 06:00 AM

When you said that changing to timed loops decreased the efficiency, I take it that you removed the "wait until next multiple", considering that the timed loop takes over the timing?