
Survey: LabVIEW Embedded experiences

02-16-2012 03:59 AM


Thanks Wouter,

Much appreciated. There's a wealth of information here.

Firstly, both the LM3S8962 and the LPC1788 have ARM Cortex-M3 cores, so there is no difference there. The LM3S8962 can be clocked at up to 50 MHz, and on the development board in question it runs at 50 MHz. The LPC1788 can be clocked at up to 120 MHz. I'm not sure what the clock rate is on your board, but it should be at or close to 100 MHz, although the performance figures suggest otherwise. Both board types use a 12 MHz crystal, which is multiplied up using a PLL. Perhaps you could contact Embedded Artists to ask how fast the LPC1788 is being clocked.

We now know a few things.

1) For the LM3S8962 board, using parallel looks robs 81% of the CPU processing power, due to LabVIEW/OS overhead.

2) For the LPC1788 board, using parallel looks robs 89% of the CPU processing power, due to LabVIEW/OS overhead.

3) LabVIEW Embedded 2010 is faster than LabVIEW Embedded 2011.

4) National Instruments have only two Tier 1 boards, and these are now over 5 years old.

So, using LabVIEW Embedded and conventional LabVIEW programming (that is, parallel loops) consumes about 80 to 90% of the processing power. Given the significant effect of changing from LabVIEW 2011 to LabVIEW 2010, a lot of this may be due to LabVIEW.

Not a pretty picture for LabVIEW Embedded.

Regards,

Vito

02-16-2012 04:12 AM


Correction: The EK-LM3S8962 Development Board uses a 6 MHz crystal, suggesting the LPC1788 Development Board is running at 100 MHz. But this is not reflected in the processor performance!!!!!!!!!

Also, please change the two occurrences of "looks" to "loops".

02-16-2012 06:24 AM


Second test:

In the first test I had configured the LPC1788 target to use external RAM, so LabVIEW used the external RAM, which is slower.

Here are the results using internal RAM.

LPC1788

test 1: 82.938 ms

test 2: 467.386 ms and 558.201 ms

"LabVIEW for ARM guru and bug destroyer"

02-16-2012 11:41 PM


Hi Wouter,

Thanks for your test results. It has really opened my eyes regarding LabVIEW Embedded.

The biggest question mark at the moment is the core CPU clock speed for your LPC1788 board. I've been thinking about how to find this out, other than toggling a pin and looking at it on an oscilloscope. If you run Test 1 and Test 2 below and report the test times, we can work out the core CPU clock rate. I've also attached the project in LabVIEW 2010 for you.

Regards,

Vito

02-17-2012 03:26 AM


LPC1788

test 1: 75.247 ms

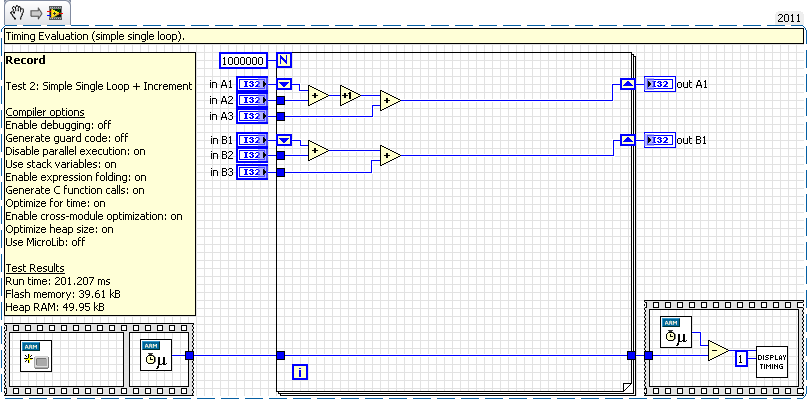

test 2: 83.610 ms

181.086 / 75.247 = 2.40

201.207 / 83.610 = 2.40

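Why 2.40?

Both boards have Cortex-M3 cores with the same MIPS per MHz, so the ratio of the execution times should match the ratio of the clock rates:

120 MHz / 50 MHz = 2.40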
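The ratio check above can be sketched in a few lines of Python. This is only an illustration: it assumes the 181.086 ms and 201.207 ms figures are the same two tests run on the 50 MHz board, and that run time scales inversely with clock rate on identical cores. The names `reference_ms`, `REFERENCE_CLOCK_MHZ` and `implied_clock_mhz` are made up for this example.

```python
# Timings quoted in the post, in milliseconds.
lpc1788_ms = {"test 1": 75.247, "test 2": 83.610}

# Assumption: the numerators in the post are the same tests on the 50 MHz board.
reference_ms = {"test 1": 181.086, "test 2": 201.207}
REFERENCE_CLOCK_MHZ = 50.0  # assumed clock rate of the reference board


def implied_clock_mhz(reference_time_ms, target_time_ms, reference_clock_mhz):
    """Estimate the target clock, assuming run time scales inversely with clock rate."""
    return reference_clock_mhz * reference_time_ms / target_time_ms


estimates = {
    name: implied_clock_mhz(reference_ms[name], lpc1788_ms[name], REFERENCE_CLOCK_MHZ)
    for name in lpc1788_ms
}

for name, mhz in estimates.items():
    ratio = reference_ms[name] / lpc1788_ms[name]
    print(f"{name}: ratio {ratio:.3f}, implied clock {mhz:.1f} MHz")
```

Both tests give an implied clock within about 1 MHz of 120 MHz, which is why the two ratios agree with 120 / 50.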

"LabVIEW for ARM guru and bug destroyer"

02-17-2012 03:53 PM


Well Wouter, your LPC1788 is definitely operating at 120 MHz.

This is shown both by the ratio calculation you did and by the time difference between Test 1 and Test 2. This time difference is the time taken to execute one extra instruction per loop iteration. 83.610 - 75.247 = 8.363 ms for 1,000,000 instructions --> 8.363 ns per instruction --> 119.6 MHz, assuming one instruction per clock cycle.
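The time-difference estimate can be checked with a short Python sketch. Two things here are assumptions rather than facts from the thread: the count of 1,000,000 extra instructions (chosen because it is the count that reproduces the quoted figure of roughly 119.6 MHz) and one clock cycle per instruction. The function name `clock_from_delta_hz` is hypothetical.

```python
TEST1_MS = 75.247  # Test 1 run time from the post
TEST2_MS = 83.610  # Test 2 run time from the post

# Assumption: Test 2 executes this many more instructions than Test 1.
EXTRA_INSTRUCTIONS = 1_000_000


def clock_from_delta_hz(t1_ms, t2_ms, extra_instructions, cycles_per_instruction=1.0):
    """Estimate the core clock from the extra time taken by the extra instructions."""
    delta_s = (t2_ms - t1_ms) / 1000.0
    if delta_s <= 0:
        raise ValueError("the second test must take longer than the first")
    return extra_instructions * cycles_per_instruction / delta_s


clock_hz = clock_from_delta_hz(TEST1_MS, TEST2_MS, EXTRA_INSTRUCTIONS)
time_per_instruction_ns = (TEST2_MS - TEST1_MS) * 1e6 / EXTRA_INSTRUCTIONS

print(f"time per extra instruction: {time_per_instruction_ns:.3f} ns")
print(f"estimated core clock: {clock_hz / 1e6:.1f} MHz")
```

If the extra instruction takes more than one cycle, pass a different `cycles_per_instruction` and the estimate scales accordingly.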

The question begging to be answered is why the LPC1788, running at 120 MHz, took 82.938 ms for the single-loop test and 558.201 ms for the dual-loop test in the earlier, more extensive tests. You should have achieved execution times of 55.890 ms and 299.240 ms. On top of losing 85% of the CPU processing power going from single to dual loop, another 46% is lost going from a 50 MHz to a 120 MHz ARM Cortex-M3 processor.

That's 92% of the available computing resources of an LPC1788 lost when programming in LabVIEW Embedded. A C programmer with an 8-bit PIC could run rings round a LabVIEW Embedded programmer using an ARM Cortex-M3 running at 120 MHz. This is infuriating!
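One way to arrive at the 85%, 46% and 92% figures from the timings quoted above is sketched below in Python. How the losses are combined is an assumption on my part: the sketch treats them as compounding, so the fractions that remain are multiplied. The variable names are made up for this example.

```python
# Timings from the thread, in milliseconds.
SINGLE_LOOP_MS = 82.938          # measured, single loop, LPC1788
DUAL_LOOP_MS = 558.201           # measured, dual loop, LPC1788
EXPECTED_DUAL_LOOP_MS = 299.240  # expected dual-loop time at 120 MHz


def fraction_lost(ideal_ms, actual_ms):
    """Fraction of processing power lost when a run takes actual_ms instead of ideal_ms."""
    return 1.0 - ideal_ms / actual_ms


# Loss going from a single loop to parallel loops.
loss_parallel = fraction_lost(SINGLE_LOOP_MS, DUAL_LOOP_MS)

# Loss relative to what the faster clock should have delivered.
loss_scaling = fraction_lost(EXPECTED_DUAL_LOOP_MS, DUAL_LOOP_MS)

# Assumption: the two losses compound, so the remaining fractions multiply.
loss_combined = 1.0 - (1.0 - loss_parallel) * (1.0 - loss_scaling)

print(f"parallel-loop loss: {loss_parallel:.1%}")
print(f"clock-scaling loss: {loss_scaling:.1%}")
print(f"combined loss:      {loss_combined:.1%}")
```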

My work colleague and I have tried to give LabVIEW Embedded for ARM every opportunity. We've tried everything to prop it up. It’s a pity I can’t leverage my LabVIEW Desktop skills in the embedded world.

NI, is there anything you can do to make LabVIEW Embedded viable? The community awaits...

02-22-2013 05:05 PM


... and waits ...

02-25-2013 03:18 AM


LV for ARM is no longer supported (and hasn't been for 1.5 to 2 years): there will be no new features, only some bug fixes.

"LabVIEW for ARM guru and bug destroyer"

02-25-2013 05:56 PM


Wouter, if LabVIEW for ARM is being phased out, you'll have to look for a new tag line other than "LabVIEW for ARM guru and bug destroyer".

02-26-2013 01:50 AM


haha, LV for ARM is still hot (for me).

At this moment, I think there's enough functionality in LV for ARM, but there are still some bugs in it.

Some of the bugs I've found (with solutions, see the forum) are still not fixed, or have had no answer from NI.

"LabVIEW for ARM guru and bug destroyer"