XNET: Make session react to certain special Frame

05-04-2021 07:31 AM

Hello,

I'm looking for a way to make an output session (preferably single-point) write all of its frames when a certain trigger frame reaches the interface. I know I could do this in software, but software timing is just not reliable enough for my timing requirements. The trigger frame is not an RTR frame; it is a normal data frame.

Is this possible?

Thanks

-A

Projektingenieur

Restbus, Simulations and HiL development

Custom Device Developer

05-04-2021 11:58 AM

I would do this with software timing. You said you don't think it would be good enough, but what kind of response time are you looking for? I'd create the sessions up front so they are ready to go: one for streamed input, and then the single-point output. On the read you can specify a timeout and wait for a single frame to come in. You sit there waiting for that frame, and once it arrives (meaning no timeout) you perform the write. UDS, XCP, KWP2000, and a few other protocols also need a quick response, and this technique has worked for me in the past on Windows and RT.
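The wait-then-write pattern described above can be sketched roughly as follows. This is a minimal illustration, not XNET code: `read_frame` and `write_frames` are hypothetical callables standing in for the input read (with timeout) and the output write, and the demo uses a plain `queue.Queue` as a stand-in for the bus. The trigger ID 0x001 is taken from the "0001" frame mentioned later in the thread.

```python
import queue
import time

TRIGGER_ID = 0x001  # assumption: the "0001" trigger frame from the thread


def wait_for_trigger(read_frame, timeout_s):
    """Block until a frame with TRIGGER_ID arrives or timeout_s elapses.

    read_frame(timeout_s) is a hypothetical callable that returns a
    (can_id, payload) tuple, or None if nothing arrived in time.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        remaining = deadline - time.monotonic()
        if remaining <= 0:
            return False
        frame = read_frame(remaining)
        if frame is None:
            return False  # read timed out, no trigger seen
        if frame[0] == TRIGGER_ID:
            return True  # other IDs are ignored and the wait continues


def respond_to_trigger(read_frame, write_frames, prepared, timeout_s=0.1):
    """Write the already-prepared frames as soon as the trigger is seen."""
    if wait_for_trigger(read_frame, timeout_s):
        write_frames(prepared)
        return True
    return False


if __name__ == "__main__":
    rx = queue.Queue()  # stand-in for the receive side of the interface
    sent = []           # stand-in for the transmit side

    def read_from_queue(timeout_s):
        try:
            return rx.get(timeout=timeout_s)
        except queue.Empty:
            return None

    rx.put((0x123, b"\x00"))   # unrelated frame, skipped
    rx.put((TRIGGER_ID, b""))  # trigger frame
    respond_to_trigger(read_from_queue, sent.extend, [(0x100, b"\x01")])
    print(sent)
```

The frames are prepared before the wait starts, so the only work left after the trigger is the write call itself. The latency of that path still depends on the OS scheduler and the driver, which this sketch does not model.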

Unofficial Forum Rules and Guidelines

Get going with G! - LabVIEW Wiki.

16 Part Blog on Automotive CAN bus. - Hooovahh - LabVIEW Overlord

05-05-2021 01:40 AM

Hello,

Thanks for the reply. I would need the interface to start writing the frames at most 0.5 ms after the trigger frame is written on the bus; more than that is just not acceptable (customer proprietary CAN protocol). Do you think it will be fast enough (PXI 8840QC + Linux RT)? I already tried to implement something like that. It worked, but I had a 1.5-2 ms response time. Maybe my implementation was just not good enough. Also, arbitration is not a problem here: the bus is empty.

05-05-2021 11:01 AM

I would expect the response time, from seeing the frame to the new one going out, to be on the order of 10 ms or less on an RT system, and maybe a bit longer on Windows, but honestly not by much. Are you saying you need a frame to go out within 0.5 ms? 500 microseconds?

If so, I don't think that can be done consistently, even on an embedded microcontroller. Getting the data to be sent and then handing it to the transceiver will likely take that much time on its own. That would mean that even if a hardware solution were found, you wouldn't be able to get under 500 microseconds.
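To put a rough number on the transmission part of that budget, here is a small estimate of how long one frame occupies the bus. The thread does not state the bit rate or frame format, so classical CAN with 11-bit identifiers, 8 data bytes, and 500 kbit/s are all assumptions made only for illustration. The bit counts use the usual figures for a classical CAN data frame: 47 overhead bits including the 3-bit interframe space, plus the worst-case stuff-bit count.

```python
def can_frame_bits(data_bytes, worst_case_stuffing=False):
    """Bits on the wire for a classical CAN data frame with an 11-bit ID.

    Includes the 3-bit interframe space. With worst_case_stuffing=True the
    maximum possible number of stuff bits is added.
    """
    if not 0 <= data_bytes <= 8:
        raise ValueError("classical CAN carries 0 to 8 data bytes")
    bits = 47 + 8 * data_bytes
    if worst_case_stuffing:
        # Stuffing applies to the 34 + 8n bits from SOF through the CRC.
        bits += (34 + 8 * data_bytes - 1) // 4
    return bits


def frame_time_us(data_bytes, bitrate_bps, worst_case_stuffing=False):
    """Time one frame occupies the bus, in microseconds."""
    return can_frame_bits(data_bytes, worst_case_stuffing) * 1e6 / bitrate_bps


if __name__ == "__main__":
    BITRATE = 500_000  # assumption: 500 kbit/s, not stated in the thread
    print(frame_time_us(8, BITRATE))        # no stuff bits
    print(frame_time_us(8, BITRATE, True))  # worst-case stuffing
```

Under those assumptions a single 8-byte frame takes roughly 222 to 270 µs on the wire, so about half of a 500 µs budget would be spent before any software or driver latency is counted.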

05-06-2021 02:48 AM

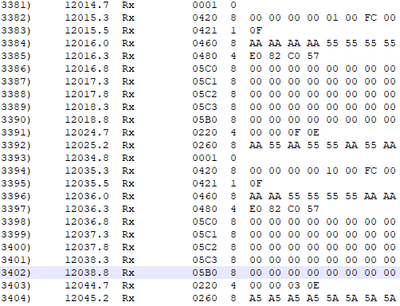

Just to give a bit more context: I am developing a Custom Device that uses XNET to simulate all the CAN sensors. This is a real trace (timestamps in milliseconds).

They have special hardware that reacts extremely fast to the trigger frame (0001), in about 0.5 ms, as you can see in the trace, and I have the pleasure of trying to simulate this. Also, although the trigger comes every 20 ms, the CAN sensors are only allowed to write in a (very) short span of time. I do not need to capture the data exactly after the trigger: I can prepare the frames a bit beforehand and send them to the transceiver (that is the reason I use single-point sessions!). I just need to tell the transceiver to send the data it already has once the trigger arrives.

The trigger comes every ~20 ms, but not exactly. It is more like 19.999 ms, and if I start a cyclic session with XNET, the trigger and the session start drifting apart after a short while.
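The drift can be put into numbers with a quick calculation. The 19.999 ms trigger period and the 20 ms cyclic period come from the description above; treating the full 0.5 ms response window as the allowed misalignment is an assumption for illustration. Periods are kept in integer microseconds to avoid floating-point rounding.

```python
def cycles_until_misaligned(trigger_period_us, session_period_us, window_us):
    """Number of cycles until accumulated drift reaches window_us.

    Returns None if both periods are identical (no drift).
    """
    drift_per_cycle_us = abs(session_period_us - trigger_period_us)
    if drift_per_cycle_us == 0:
        return None
    # Ceiling division on integers.
    return -(-window_us // drift_per_cycle_us)


if __name__ == "__main__":
    TRIGGER_US = 19_999  # trigger period from the thread (19.999 ms)
    SESSION_US = 20_000  # cyclic session period (20 ms)
    WINDOW_US = 500      # assumption: the whole 0.5 ms window is usable

    cycles = cycles_until_misaligned(TRIGGER_US, SESSION_US, WINDOW_US)
    seconds = cycles * TRIGGER_US / 1e6
    print(cycles, seconds)
```

With 1 µs of drift per cycle, the session falls a full 0.5 ms behind the trigger after 500 cycles, which is about 10 seconds. A free-running cyclic session therefore cannot stay aligned without being resynchronized to the trigger.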

I actually have a working solution that lets me send the frames with a 0.2 ms delay very consistently, but I'm struggling with the 500-frame limit and memory issues.
