How to improve my video processing?

11-12-2014 12:32 PM

Hello!

First, I am new to LabVIEW, and sorry for my English...

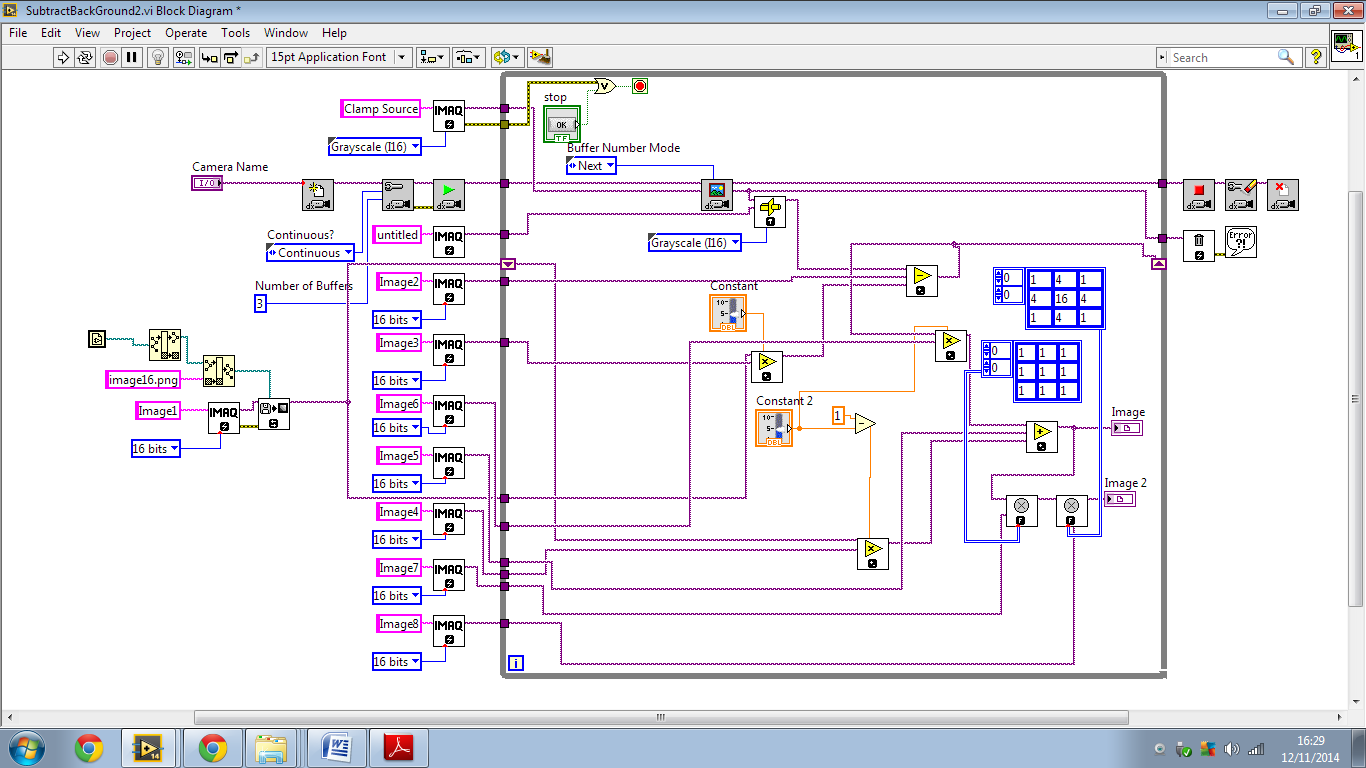

I am working on a project at my university about optical tweezers, so I need to show the end user of my final program a clean, processed image. To do this, I subtract a previously acquired background (image16.png) from the live video and apply filters (for now, just a median filter and a Gaussian filter to smooth the image). When I do this, however, the frame rate of my video drops. Is there a better way to do what I want?

I attached my program below.

The camera is a USB Logitech HD Webcam C270 (CCD).

Thanks!

11-13-2014 05:39 AM

Dear guiarreche,

From a quick look at your project, I noticed that you are running many processes in parallel. This could be compromising both your image quality and your processing speed.

Try reorganizing your project as a state machine with sequential transitions. Alternatively, if you do not want to reorganize all your code, you can disable some parts and measure how much time each part consumes.
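LabVIEW code is graphical, so the idea of measuring each part is sketched here in Python instead. This is only an illustration under stated assumptions: the helper names (`subtract_background`, `median_filter_3x3`, `time_stages`) and the synthetic 64x64 frame are hypothetical and are not taken from the attached program. The point is simply to run the stages one after another and record how long each one takes.

```python
import time
from statistics import median


def subtract_background(frame, background):
    """Pixel-wise subtraction, clamped at zero."""
    return [[max(p - b, 0) for p, b in zip(row, bg_row)]
            for row, bg_row in zip(frame, background)]


def median_filter_3x3(frame):
    """3x3 median filter; border pixels are copied unchanged."""
    height, width = len(frame), len(frame[0])
    out = [row[:] for row in frame]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            window = [frame[y + dy][x + dx]
                      for dy in (-1, 0, 1) for dx in (-1, 0, 1)]
            out[y][x] = median(window)
    return out


def time_stages(frame, stages):
    """Run the stages in order; return the final frame and seconds per stage."""
    timings = {}
    for name, stage in stages:
        start = time.perf_counter()
        frame = stage(frame)
        timings[name] = time.perf_counter() - start
    return frame, timings


# Synthetic data standing in for a camera frame and a stored background.
background = [[10] * 64 for _ in range(64)]
frame = [[(x * y) % 256 for x in range(64)] for y in range(64)]

stages = [
    ("subtract", lambda f: subtract_background(f, background)),
    ("median", median_filter_3x3),
]
result, timings = time_stages(frame, stages)
for name, seconds in timings.items():
    print(f"{name}: {seconds * 1000:.2f} ms")
```

The stage that dominates the printed timings is the first candidate for optimization, for example by using a smaller kernel or by processing only a region of interest.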

gfavaron

11-17-2014 12:08 PM

Hi gfavaron,

Thanks for replying. Do you have an example of a state machine with sequential transitions? I found that the filters consume the most time.

guiarreche

11-18-2014 05:13 AM

Hi guiarreche,

You can check out this explanation: http://www.ni.com/white-paper/2926/en/

or this example: http://www.ni.com/white-paper/7604/en/

You will find more examples by searching ni.com.
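For readers who want a textual picture of the pattern before opening those pages, here is a minimal Python sketch of a state machine with sequential transitions. It is not the code from the linked pages; the state names, the `run` and `smooth` helpers, and the one-dimensional "frames" are all assumptions made for illustration. Each loop iteration executes exactly one state, and every state names the state that follows it.

```python
from enum import Enum, auto


class State(Enum):
    ACQUIRE = auto()
    SUBTRACT = auto()
    FILTER = auto()
    DISPLAY = auto()
    STOP = auto()


def smooth(values):
    """Placeholder filter: moving average over up to 3 neighbours."""
    out = []
    for i in range(len(values)):
        window = values[max(i - 1, 0):i + 2]
        out.append(sum(window) // len(window))
    return out


def run(frames, background):
    """Process frames one at a time, executing one state per loop iteration."""
    source = iter(frames)
    displayed = []
    state, frame = State.ACQUIRE, None
    while state is not State.STOP:
        if state is State.ACQUIRE:
            frame = next(source, None)
            # Stop when no frames are left, otherwise move on.
            state = State.STOP if frame is None else State.SUBTRACT
        elif state is State.SUBTRACT:
            frame = [max(p - b, 0) for p, b in zip(frame, background)]
            state = State.FILTER
        elif state is State.FILTER:
            frame = smooth(frame)
            state = State.DISPLAY
        elif state is State.DISPLAY:
            displayed.append(frame)  # stands in for updating the image display
            state = State.ACQUIRE
    return displayed


print(run([[10, 20, 30], [5, 5, 50]], background=[5, 5, 5]))
```

In LabVIEW the same structure is usually drawn as a While Loop containing a Case Structure, with the next state carried between iterations in a shift register.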

I hope this helps a little.

gfavaron