LabVIEW 2010 FPGA: problem with mean-variance subVI

08-04-2011 11:49 AM

The subVI "Mean, Variance and Standard Deviation" does not seem to work correctly. The mean is calculated correctly, but the standard deviation and variance are wrong, and the results change unexpectedly depending on the representation. Has anyone experienced this problem? Is there a known issue with this function?

Thanks

Andrea

08-04-2011 04:15 PM

Hi Andrea,

I can't find any reports of incorrect behavior with that node. Please attach an example showing the incorrect behavior and we can verify if there's a problem.

Jim

08-05-2011 02:21 AM

Dear Jim,

Thanks for your reply.

Attached is a simple example that shows this apparently strange behavior.

Andrea

08-05-2011 05:14 PM

Hi Andrea,

Thanks for pointing this out. There is indeed an internal overflow problem, which I've reported as high-priority CAR 222056. In the meantime, I've converted your node to a subVI and implemented a fix specific to this particular configuration, along with some comments explaining the changes in case you need to modify it. I'm still testing it and will post something for you on Monday.

Sorry for the inconvenience, and thanks again for bringing this to our attention!

Jim

08-08-2011 02:56 AM

Hi Jim,

I am looking forward to seeing and using your fix!

Many thanks

Andrea

P.S. I suppose the same problem exists in LabVIEW 2011, correct?

08-08-2011 09:58 AM

Hi Andrea,

That's correct, it uses the same code in 2011. FYI, the problem shows up when the variance is relatively small, and a subtraction that is always supposed to give a result greater than or equal to zero produces a negative number due to roundoff error. The fix increases the internal precision to avoid this problem, and will also allow you to customize it for however many bits of output precision you want to keep around for downstream operations.

Jim
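To make the failure mode concrete, here is a small Python model. It is a sketch under stated assumptions, not NI's implementation: it assumes the variance is computed in one pass as mean(x^2) minus mean(x)^2, and that every intermediate is rounded to the same number of fractional bits. The real node's internal word lengths are not given in the thread, and `quantize` and `one_pass_variance` are hypothetical helpers.

```python
from fractions import Fraction


def quantize(v: Fraction, frac_bits: int) -> Fraction:
    """Round v to the nearest multiple of 2**-frac_bits (Python's round(): ties to even).

    Assumption: models a fixed-point intermediate with frac_bits fractional bits.
    """
    scale = 1 << frac_bits
    return Fraction(round(v * scale), scale)


def one_pass_variance(samples, frac_bits: int) -> Fraction:
    """Population variance as mean(x^2) - mean(x)^2, rounding each intermediate."""
    n = len(samples)
    mean = quantize(Fraction(sum(samples), n), frac_bits)
    mean_sq = quantize(Fraction(sum(x * x for x in samples), n), frac_bits)
    # This subtraction should never be negative, but rounding can make it so.
    return mean_sq - quantize(mean * mean, frac_bits)


samples = [101] * 15 + [100]          # 16 samples, small variance relative to the mean
print(one_pass_variance(samples, 0))  # -13
print(one_pass_variance(samples, 8))  # 15/256
```

With 16 samples the mean has at most 4 fractional bits and its square at most 8, so in this model 8 fractional bits reproduce the exact population variance (15/256). With 0 fractional bits, the mean 100.9375 is rounded up to 101 before squaring and the subtraction goes negative, which is the kind of roundoff described above.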

08-08-2011 12:33 PM

Hi Andrea,

Here's a new subVI and test for the configuration in your VI (16 samples, I16 input), saved in LabVIEW 2010 SP1. It's fairly straightforward to adapt it to other configurations, but it does involve reconfiguring several nodes and constants on the diagram. Let me know if this will work for you.

Regards,

Jim
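Because the subVI is tied to 16 samples of I16 input, it helps to know how the accumulator widths grow when adapting it. The following is a back-of-the-envelope count in Python, assuming a straightforward sum and sum-of-squares implementation; the actual internal word lengths of the node and of Jim's subVI are not shown in the thread, and `signed_bits` and `unsigned_bits` are hypothetical helpers.

```python
def signed_bits(lo: int, hi: int) -> int:
    """Smallest two's-complement width w with -2**(w-1) <= lo and hi <= 2**(w-1) - 1."""
    w = 1
    while lo < -(1 << (w - 1)) or hi > (1 << (w - 1)) - 1:
        w += 1
    return w


def unsigned_bits(hi: int) -> int:
    """Smallest unsigned width that can hold every value in [0, hi]."""
    return max(1, hi.bit_length())


N = 16                                    # samples per window, as in Andrea's VI
IN_LO, IN_HI = -(1 << 15), (1 << 15) - 1  # I16 input range

# Sum of N samples grows by log2(N) = 4 bits.
sum_bits = signed_bits(N * IN_LO, N * IN_HI)

# Sum of N squares: the largest square comes from -32768, i.e. 2**30.
max_square = max(IN_LO * IN_LO, IN_HI * IN_HI)
sumsq_bits = unsigned_bits(N * max_square)

print(sum_bits, sumsq_bits)  # 20 35
```

Dividing these sums by 16 only moves the binary point 4 places, so an exact mean needs 4 fractional bits and its square 8. For a different N, rerun the count with that N.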

08-11-2011 09:08 AM

Hi Jim,

Your solution works fine. Thank you very much.

However, I have noticed that:

1) this new version uses 3 DSP48 units, compared with 2 for the built-in version (I had to modify my application to make it fit on the FPGA).

2) the number of samples cannot easily be changed to a value that is not a power of two. What would be an efficient way to implement division by an integer? Do you have an example?

Andrea
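This question is not answered in the thread. One common technique, used by optimizing compilers for division by a constant and not something proposed by NI here, is to multiply by a precomputed, rounded-up reciprocal and then shift right. Below is a Python sketch assuming the divisor N is fixed at compile time and the dividend magnitude is below 2**k; the function names are hypothetical.

```python
def reciprocal_constants(d: int, k: int) -> tuple[int, int]:
    """Return (m, s) such that (x * m) >> s == x // d for every integer 0 <= x < 2**k.

    Uses s = k + ceil(log2(d)) and m = ceil(2**s / d) (round-up reciprocal).
    """
    if d < 1 or k < 0:
        raise ValueError("need d >= 1 and k >= 0")
    l = (d - 1).bit_length()  # ceil(log2(d)); 0 when d == 1
    s = k + l
    m = -(-(1 << s) // d)     # ceiling division of 2**s by d
    return m, s


def divide_unsigned(x: int, m: int, s: int) -> int:
    """One multiply and one right shift: the hardware-friendly form of x // d."""
    return (x * m) >> s


def divide_signed(x: int, m: int, s: int) -> int:
    """Divide the magnitude, then restore the sign (truncates toward zero).

    Requires |x| < 2**k for the k used to build (m, s).
    """
    q = divide_unsigned(abs(x), m, s)
    return -q if x < 0 else q


# Example: mean of 10 I16 samples. |sum| <= 10 * 32768 < 2**19, so k = 19.
m, s = reciprocal_constants(10, 19)
print(m, s)                          # 838861 23
print(divide_signed(-327680, m, s))  # -32768
```

Because N is fixed, m and s can be computed offline and entered as constants, so the FPGA needs only one multiply and a shift. Rounding the reciprocal up, together with this choice of s, makes the result exact over the whole input range, which a truncated reciprocal does not guarantee. Here m has 20 bits, so whether the multiply fits in a single DSP48 depends on the multiplier widths of the target device.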

08-11-2011 09:28 AM - edited 08-11-2011 09:29 AM

> @JLewis wrote:
>
> Hi Andrea,
>
> Here's a new subVI and test for the configuration in your VI (16 samples, I16 input), saved in LabVIEW 2010 SP1. It's fairly straightforward to adapt it to other configurations, but it does involve reconfiguring several nodes and constants on the diagram. Let me know if this will work for you.
>
> Regards,
>
> Jim

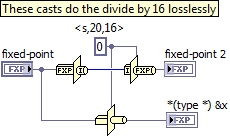

Jim, may I ask why you use two casts to divide by 16 instead of a single Type Cast (see image)?

08-12-2011 09:57 AM

> Jim, may I ask why you use two casts to divide by 16 instead of a single Type Cast (see image)?

Sure. Type Cast works fine on the desktop but is not supported on FPGA targets, because of a number of odd behaviors that don't make much sense in hardware.

Jim
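The casts themselves are only visible in the image, so the following is an assumption about their purpose: in fixed point, dividing by a power of two needs no arithmetic, because the same bits can simply be read with the binary point moved. This Python model contrasts that with an integer right shift; the helper names are hypothetical, and it does not model any specific LabVIEW conversion function.

```python
from fractions import Fraction

FRAC_BITS = 4  # dividing by 16 == moving the binary point 4 places left


def reinterpret_as_fixed(raw: int, frac_bits: int) -> Fraction:
    """Value of the same two's-complement bits read as fixed point with
    frac_bits fractional bits: no arithmetic, the binary point just moves."""
    return Fraction(raw, 1 << frac_bits)


def shift_right(raw: int, frac_bits: int) -> int:
    """Integer-only alternative: arithmetic right shift, which rounds toward
    minus infinity and discards the fractional bits."""
    return raw >> frac_bits


raw_sum = -17  # e.g. an accumulated sum of 16 samples
print(reinterpret_as_fixed(raw_sum, FRAC_BITS))  # -17/16
print(shift_right(raw_sum, FRAC_BITS))           # -2
```

Reading the sum as fixed point keeps the result exact, which matters for the later squaring step in the variance; the plain shift loses the remainder.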