array subset truncating end of 2d array

Solved! 11-04-2015 01:44 PM

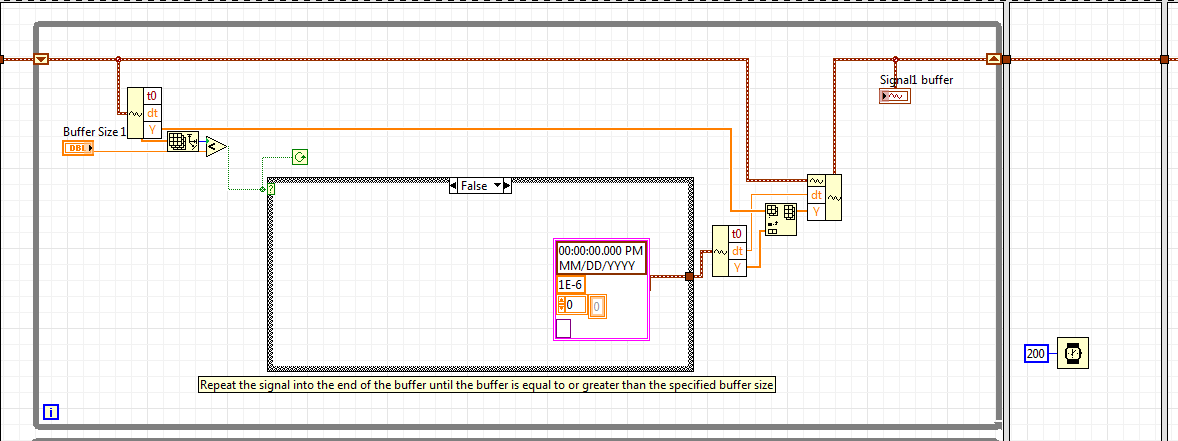

The intention was to make a program that asynchronously generates multiple different signals into a buffer. Then something would consume the buffer: a DAQ output, signal processing, etc. I created a dummy consumer that just takes 1% off the beginning of the buffer. Whenever the buffer gets below the specified size(s), more signal is added to the end.
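As a rough text analog of the behavior described above (the original is a LabVIEW block diagram, so this Python sketch is only an illustration; `make_signal`, `refill`, and `consume` are hypothetical names, and the pulse shape and sizes are invented):

```python
def make_signal(n):
    """Hypothetical generator: one pulse followed by zeros, n samples long."""
    return [1.0] + [0.0] * (n - 1)


def refill(buffer, min_size, chunk):
    """Append chunks to the end until the buffer holds at least min_size samples."""
    while len(buffer) < min_size:
        buffer = buffer + make_signal(chunk)
    return buffer


def consume(buffer, percent=1):
    """Dummy consumer: drop the first `percent` percent of the buffer, keep the rest."""
    start = len(buffer) * percent // 100
    return buffer[start:]


buffer = []
for _ in range(5):
    buffer = refill(buffer, min_size=1000, chunk=300)
    buffer = consume(buffer)
```

In this model the end of the buffer only ever grows in `refill`; `consume` removes samples from the front and nothing else.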

I ran into a problem where the Array Subset function sometimes truncates the end of the subset, so I stripped the program down to the bare minimum of code that still causes the problem. It seems to be related to memory usage or allocation. Maybe I'm doing something that I shouldn't, but it looks like a LabVIEW bug. In the block diagram, I have a note that points to a waveform wire going to a case structure. Just removing this wire makes it function properly, as can be seen from the consistency of the waveform on the front panel.

I'm using LabVIEW 2014 (no SP1).

I'd appreciate any insight.

11-04-2015 03:07 PM - edited 11-04-2015 03:25 PM

You are not taking away anything from the beginning of the array, you are removing the tail starting from a given point.

Maybe you want to wire your value to the length input of "array subset" instead of the index input?

(edit: Sorry, misunderstood the code.)

(What's the point of the flat sequence? Doesn't seem to do anything useful. What determines your loop rates?)

11-04-2015 03:20 PM - edited 11-04-2015 03:37 PM

This program (as posted) doesn't actually do anything useful. It is a stripped-down version of a real program, just to demonstrate the array subset "bug". Everything before the index value of the array subset just goes to the bit bucket. With length unwired, the subset should be the remaining part of the array. The problem is that sometimes the end of the array is truncated as well. Simply deleting that wire as indicated makes it function correctly.

The flat sequence isn't really needed, but it does help demonstrate the problem visually if you add a frame in the middle with a ~200 ms delay. You can see a new waveform added when the buffer falls below 1M samples, then a little bit disappears from the end after the subset is taken 200 ms later.
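The expected behavior can be stated with a Python slice as a stand-in. This is only a sketch of the semantics described in this post; `array_subset` is a hypothetical helper, not a LabVIEW API:

```python
def array_subset(data, index, length=None):
    """Return elements starting at `index`, optionally limited to `length` items."""
    if length is None:
        # Length "unwired": everything from index to the end of the array.
        return data[index:]
    return data[index:index + length]


samples = list(range(10))
rest = array_subset(samples, 3)
```

With length left out, the last element of `rest` should always be the last element of `samples`; the reported problem is that the LabVIEW result sometimes ends earlier than that.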

11-04-2015 03:50 PM - edited 11-04-2015 03:54 PM

You are adding arrays that differ in length, so the shortest one will determine the length of the summation output.

To find the length of the shortest of the arrays, I would use something like the following:
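The image posted here is not reproduced. As a loose Python analog of the same idea (not the posted diagram; `add_truncated` and `shortest_length` are hypothetical names), an element-wise sum that stops at the shorter input looks like this:

```python
def add_truncated(a, b):
    """Element-wise sum; zip stops at the end of the shorter input."""
    return [x + y for x, y in zip(a, b)]


def shortest_length(*arrays):
    """Length of the shortest of the given arrays."""
    return min(len(arr) for arr in arrays)


signal1 = [1.0, 2.0, 3.0, 4.0]
signal2 = [10.0, 20.0]
combined = add_truncated(signal1, signal2)
```

Here `combined` has as many elements as `shortest_length(signal1, signal2)` reports, and the extra samples of the longer input are dropped from the sum only, not from the input itself.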

11-04-2015 04:03 PM

@altenbach wrote:

You are adding arrays that are different in length, so the shortest will determine the output.

That makes sense for the "combined" signal graph, but that signal doesn't go back into the buffer - it's for display only.

What I'm seeing is truncation of the end of the array on Signal2, which can be seen on the Signal2 buffer waveform graph as a change in the spacing of the signal pulses immediately after the buffer initially fills up. Can you confirm that you're able to see the same problem? I had a coworker try it here and it had the same problem. We're both on LV 2014 without SP1.

11-04-2015 04:55 PM - edited 11-04-2015 04:56 PM

Yes, there might be a compiler error, reusing a buffer it should not.

If you insert an "always copy" as e.g. shown in the picture, the problem disappears.
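Why a copy can help, in a loose analogy only: this Python sketch shows two names sharing one buffer, which is an assumption about the kind of failure involved and not a description of what the LabVIEW compiler does internally.

```python
buffer = list(range(10))

alias = buffer            # two names, one underlying list (shared storage)
del alias[-3:]            # in-place truncation through the alias...
shared_len = len(buffer)  # ...also shortens `buffer`

buffer = list(range(10))
independent = list(buffer)  # explicit copy, the role "always copy" plays here
del independent[-3:]        # truncating the copy leaves `buffer` untouched
copied_len = len(buffer)
```

If a buffer is reused where it should not be, an operation on one wire can change what another wire sees; forcing a copy breaks that sharing at the cost of extra memory.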

I am not sure why you are constantly getting and building all these waveform components. If you operate on arrays (and build the waveform only for the graph), the problem seems to disappear too.

11-04-2015 05:05 PM

I suspected that building arrays back into waveforms makes it less efficient, but I had several different subVIs for building all different types of waveforms parametrically, and figured the waveform data type was best. I just wanted some sort of confirmation that it's either a compiler error or that I'm doing something wrong. Even though it's inefficient, I would think it should work.

11-04-2015 06:22 PM - edited 11-05-2015 01:58 PM

As a workaround, use the always copy for now. I'll try to involve somebody from LabVIEW R&D to get the final word.

In any case, it seems pointless to carry along all these t0 (which is always zero!) and dt (which is always the same). Constantly going from waveforms to arrays and back just really clutters the code. If dt differed between the waveforms, you would have a much bigger problem ;).

I understand that your real code is much more complicated and what you show is just the tip of the iceberg lettuce.

Here's a general layout draft implementing some ideas.

- Use "build array" (concatenate mode) instead of "insert into array". It's cleaner.

- Use simpler and easier to read code to find the size of the smallest array

- Use arrays exclusively. You can set dt for all graphs once.

- Use the correct representation for the buffer size controls.

- Don't place unnecessary sequence structures.

- I don't think you really need the local variables, the terminal gets written often enough (saves you extra copies of huge arrays in memory!)

- Not sure what the case structure is for, but I left it in for now.

- Don't conditionally append empty arrays, just wire the array through unchanged instead.

- ...
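A few of these points (arrays only, concatenation, passing the array through unchanged instead of appending an empty one, dt set once) can be sketched in Python. This is not the posted draft; `top_up`, `DT`, and all sizes are hypothetical:

```python
def top_up(buffer, new_samples, min_size):
    """Concatenate new samples only when the buffer is below min_size."""
    if len(buffer) >= min_size:
        return buffer              # pass through unchanged, no empty append
    return buffer + new_samples    # plain concatenation at the tail


DT = 0.001  # hypothetical sample interval, defined once for all graphs

buf1 = top_up([0.0] * 5, [1.0] * 4, min_size=8)   # below limit: grows to 9
buf2 = top_up([0.0] * 8, [1.0] * 4, min_size=8)   # at limit: stays at 8
usable = min(len(buf1), len(buf2))                # size of the smallest array
```

The buffers stay plain arrays throughout; timing information such as `DT` is needed only where the data is displayed.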

11-06-2015 11:46 AM

There are a lot of things wrong with that code (though you did potentially find a compiler bug).

E.g., while this loop is running, read the unwired (constant) terminal "Signal 1" and update "Signal 1 Buffer" with the data in "Signal 1" until the number of data points in "Signal 1 Buffer" gets larger than "Buffer Size 1". At that point, exit the while loop and append a null waveform. WHAT? Yup, go back and look at that again. When you wish to exit the loop, you append an empty waveform. How did "Signal 1" get updated between iterations?

Then you did the same thing twice!

And, after we find a case where both waveforms out of their while loops are probably of a certain size (not that anyone other than the user could change the WFM size), we wait 200 ms? Why? Is there some magic reason to wait for the data to become "Stable-in-Memory" or something? Then do some math on the waveform sizes to (get this) either replace the value on the two graphs with updated values or the original data, and iterate the outer loop. Hold it! The inner loop's conditions are already met by the data on the shift registers!

What did you really want to do?

"Should be" isn't "Is" -Jay

11-06-2015 12:00 PM

One important way of code optimization is "inplaceness". You have a huge buffer with an upper size limit, so it would be worth exploring a solution that can keep that data fully in place.

Currently you are stripping from the head and appending to the tail of huge arrays, requiring constant reallocations and copy operations. These are expensive. (The LabVIEW compiler is very impressive, but I doubt it will be able to operate in place given the current design. Who knows?).
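One common way to keep a bounded buffer in place is a ring buffer: allocate the storage once and move head and count indices instead of moving data. The Python sketch below only illustrates that idea under stated assumptions; `RingBuffer` and its methods are hypothetical, and nothing in this thread confirms how it would map onto LabVIEW's in-place handling:

```python
class RingBuffer:
    """Fixed-capacity FIFO of samples stored in one preallocated list."""

    def __init__(self, capacity):
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self._data = [0.0] * capacity   # allocated once, never resized
        self._capacity = capacity
        self._head = 0                  # index of the oldest sample
        self._count = 0                 # number of valid samples

    def __len__(self):
        return self._count

    def append(self, samples):
        """Write samples at the tail; raises if they do not fit."""
        if len(samples) > self._capacity - self._count:
            raise ValueError("not enough free space in buffer")
        tail = (self._head + self._count) % self._capacity
        for s in samples:
            self._data[tail] = s
            tail = (tail + 1) % self._capacity
        self._count += len(samples)

    def consume(self, n):
        """Remove and return the n oldest samples by advancing the head."""
        n = min(n, self._count)
        out = [self._data[(self._head + i) % self._capacity] for i in range(n)]
        self._head = (self._head + n) % self._capacity
        self._count -= n
        return out


rb = RingBuffer(8)
rb.append([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])
first = rb.consume(4)
rb.append([7.0, 8.0, 9.0, 10.0])   # wraps around the end of the storage
second = rb.consume(6)
```

Consuming from the head and appending at the tail never reallocates the storage; only the two indices change, and the samples still come out in the order they went in.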