Heavy bug in "exponential fit"??

12-16-2012 11:44 AM

I got a lot better performance by setting the parallel loops to 2, even though I have 6 cores. Is the overhead that big, or is something else going on?

/Y

12-16-2012 12:37 PM

@Yamaeda wrote:

I got a lot better performance by setting the parallel loops to 2, even though I have 6 cores. Is the overhead that big, or is something else going on?

How much is "a lot"? Can you give some actual numbers as a function of input size? Also try to force a recompile (Ctrl+Run) before testing.

There is definitely a parallelism overhead. This code is so fast that the overhead could be noticeable for small inputs. (Personally, I think it should not be parallelized because, as Jim said, this code is such a small fraction of the overall fitting procedure that it does not really matter. Sometimes other stuff needs to run in parallel too, so it is not such a good idea to concentrate all available resources in one place. Herbert's idea of changing the loop ordering only makes a big difference if we have a small number of parameters, which is typically the case. I am all for that change.)

All that said, going from 4 to 2 parallel instances on my 4-core Intel, I go from 11 ms to 19 ms (length=1000, npar=100).

It could also have to do with the CPU architecture (e.g. AMD vs. Intel), cache sizes, etc. What processor do you have? I'll do some tests tomorrow on my 16-core rig... 😉

I'll rewrite the benchmark to test for parallel performance...
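As a rough illustration of that kind of measurement (time versus input size and number of parallel instances), here is a minimal Python sketch. It is not the LabVIEW benchmark from this thread: the workload `work`, the helper names, and the sizes are all made up for illustration. Python threads will not speed up this pure-Python workload, so the sketch mainly makes the per-instance overhead visible, which is the effect being discussed for small inputs.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def work(chunk):
    """Stand-in workload (hypothetical): sum of squares over one chunk."""
    return sum(x * x for x in chunk)


def split(data, n_chunks):
    """Split data into n_chunks contiguous pieces of nearly equal size."""
    size, extra = divmod(len(data), n_chunks)
    chunks, start = [], 0
    for i in range(n_chunks):
        end = start + size + (1 if i < extra else 0)
        chunks.append(data[start:end])
        start = end
    return chunks


def best_time_ms(data, n_workers, repeats=5):
    """Return (best wall-clock time in ms over `repeats` runs, computed total)."""
    chunks = split(data, n_workers)
    best = float("inf")
    total = None
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        for _ in range(repeats):
            t0 = time.perf_counter()
            total = sum(pool.map(work, chunks))
            best = min(best, (time.perf_counter() - t0) * 1000.0)
    return best, total


if __name__ == "__main__":
    # Report actual milliseconds for each input size and worker count.
    for length in (1_000, 10_000, 100_000):
        data = list(range(length))
        for n in (1, 2, 4, 6):
            ms, _ = best_time_ms(data, n)
            print(f"length={length:>7} workers={n}: {ms:.3f} ms")
```

Taking the best of several repeats reduces noise from other processes, and reporting milliseconds rather than ratios avoids ambiguity about which direction a factor runs.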

12-16-2012 12:44 PM - edited 12-16-2012 12:46 PM

12-16-2012 01:35 PM

AMD Phenom x6 1090 @ 4GHz

Running with length=10k, npar=100:

Herbert is 3.87 times faster than original.

Rewrite factor vs. parallel setting:

Disabled: 11.1(!)

2: 5.4

3: 3.5(!)

4: 2.6(!)

5: 2(!)

6: 1.8(!)

It seems the array functions are inherently parallel.

/Y

12-16-2012 02:18 PM - edited 12-16-2012 02:23 PM

@Yamaeda wrote:

Rewrite factor vs. parallel setting:

Disabled: 11.1(!)

2: 5.4

3: 3.5(!)

4: 2.6(!)

5: 2(!)

6: 1.8(!)

Sorry, I don't understand. What are the units? What do the exclamation marks mean?

You seem to have a clear inverse relation between the number of cores and the resulting number.

@Yamaeda wrote:

It seems the array functions are inherently parallel.

In what sense? I don't think they should be. For big matrix operations, we can use the MASM toolkit, which e.g. parallelizes matrix operations.

12-16-2012 02:53 PM

The numbers are the speed factors your program shows: the Herbert version is 3.9 times faster than the original code, and your cleanup is 1.8 to 11.1 times faster than the original, depending on the parallel setting. The ! denotes something interesting: with the disabled setting it's actually 3 times faster than Herbert's, and with 6 it's 50% as fast ...

Did that clear up the confusion?

/Y

12-16-2012 02:57 PM - edited 12-16-2012 02:59 PM

The factors in my program are "x times slower than the fastest". Can you show actual times in milliseconds instead?

So your 1.8 is actually 6.2x faster than the 11.1, in agreement with a 6 core chip.
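The arithmetic behind that 6.2x can be sketched in a few lines of Python. The factors are the ones posted above, read as "x times slower than the fastest"; the function name is made up for illustration. Because the posted factors are rounded, the per-instance figures are only approximate.

```python
# Factors as posted, keyed by parallel setting (1 = parallelism disabled).
# Each value means "x times slower than the fastest entry in the benchmark".
factors = {1: 11.1, 2: 5.4, 3: 3.5, 4: 2.6, 5: 2.0, 6: 1.8}


def speedup_vs_disabled(factors):
    """Convert slowdown factors into speedup relative to the disabled setting."""
    disabled = factors[1]
    return {n: disabled / f for n, f in factors.items()}


speedups = speedup_vs_disabled(factors)
for n, s in sorted(speedups.items()):
    # s / n is the speedup per parallel instance; about 1.0 means near-linear scaling.
    print(f"{n} instances: {s:.2f}x faster than disabled ({s / n:.2f} per instance)")
```

Read this way, a smaller factor is better, and the ratio between two factors gives the relative speed of the two settings.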

12-16-2012 05:28 PM

@altenbach wrote:

The factors in my program are "x times slower than the fastest". Can you show actual times in milliseconds instead?

So your 1.8 is actually 6.2x faster than the 11.1, in agreement with a 6 core chip.

Right, but that doesn't explain why your cleaned-up code is 11 times slower than the original without parallelization. That makes no sense to me.

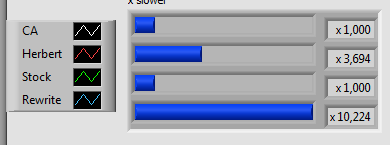

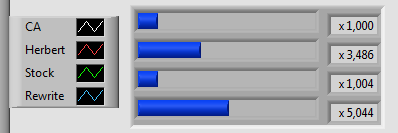

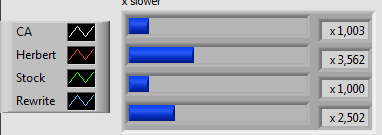

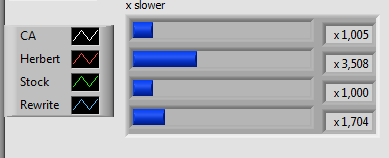

1 parallel/disabled

2 parallel

4 parallel

6 parallel

/Y

12-16-2012 08:31 PM

I see. Yes, that's odd. You would think it should be very similar to Herbert's code if parallelization is set to 1, and it is on my Intel processors.

I've had bad experiences with recent AMD processors, so obviously they react differently to different kinds of code. Do you have any kind of power management enabled?

12-18-2012 02:39 AM

Yes, C1E and SpeedStep are active in the BIOS. Could it be that the code is being tossed between cores or something? I could try changing the processor affinity tonight and see if that changes things.

/Y
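For readers who want to try the same affinity experiment in a scripted benchmark, here is a minimal Python sketch. It is not how affinity would be set for LabVIEW itself, and `run_pinned` is a made-up helper name. It relies on `os.sched_setaffinity`, which the Python standard library only provides on some platforms (notably Linux), so the sketch falls back to an unpinned run elsewhere. On Windows, the "Set affinity" option in Task Manager serves the same purpose.

```python
import os


def run_pinned(func, core=None):
    """Run func() with the process pinned to one core; return (result, core_used).

    If the OS does not expose sched_setaffinity, func() runs unpinned and
    core_used is None.
    """
    if not hasattr(os, "sched_setaffinity"):
        return func(), None

    original = os.sched_getaffinity(0)  # cores currently allowed for this process
    if core is None:
        core = min(original)            # default: lowest-numbered allowed core
    if core not in original:
        raise ValueError(f"core {core} is not in the allowed set {sorted(original)}")

    os.sched_setaffinity(0, {core})     # restrict scheduling to the single core
    try:
        return func(), core
    finally:
        os.sched_setaffinity(0, original)  # always restore the original mask


if __name__ == "__main__":
    result, core = run_pinned(lambda: sum(x * x for x in range(100_000)))
    print(f"result={result}, pinned to core: {core}")
```

Timing the same workload with and without pinning would show whether migration between cores contributes to the slowdown.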