Reducing number of values

Solved! 01-09-2017 10:09 PM

I have a set of experiments with around 864000 values in each set. This amounts to 32 seconds of data (27,000 values per second). I have 12 sets of this data, which together add up to a little over six minutes.

The problem is that 864000 x 192 seconds of data is too much for my current system to handle, and it would take forever for me to arrange it.

What is the best way to reduce this data to just 32 points per set? I want each block of 27,000 values to be represented by the average over the one second in which it was recorded.

01-10-2017 01:27 AM

Hello hmurya,

There are several options in DIAdem for dealing with your data. One is to merge your 12 files into one (DIAdem can handle 2 billion values per column/channel), which should be no problem for most computers.

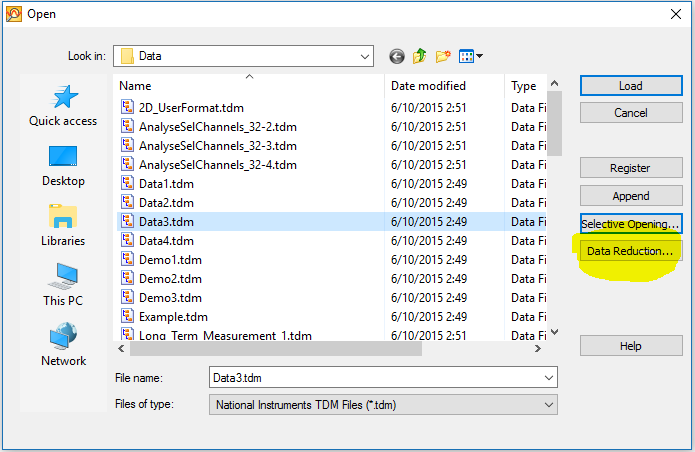

To answer your specific request, you can use the data reduction function when loading data from the DIAdem NAVIGATOR:

- In the DIAdem NAVIGATOR, pick the File > Open menu item

- In the dialog that appears, there is a button on the right labeled "Data Reduction ..."

- In the "Data Reduction" menu, pick your parameters. In your case the number of intervals should be 32, and the reduction mode should be "Mean" to get the average of each second of data

The data reduction will be executed on each channel in the file, including the time channel (if you have one).
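For readers who want to check the idea outside DIAdem, here is a minimal Python sketch of what an interval-mean reduction does conceptually. It is an illustration under stated assumptions, not DIAdem's implementation: the function name `reduce_by_mean` is hypothetical, and the way leftover samples are spread across blocks is a choice made for this example.

```python
def reduce_by_mean(values, intervals):
    """Split `values` into `intervals` contiguous blocks and return each block's mean.

    Hypothetical helper for illustration; DIAdem's own block boundaries may differ.
    """
    n = len(values)
    if intervals <= 0 or intervals > n:
        raise ValueError("intervals must be between 1 and len(values)")
    reduced = []
    for k in range(intervals):
        # Integer arithmetic keeps the blocks contiguous and nearly equal in size.
        start = k * n // intervals
        stop = (k + 1) * n // intervals
        block = values[start:stop]
        reduced.append(sum(block) / len(block))
    return reduced


# Tiny stand-in for a channel: 8 values reduced to 2 intervals.
print(reduce_by_mean([1, 2, 3, 4, 10, 20, 30, 40], 2))  # [2.5, 25.0]
```

With 864000 values and 32 intervals, each block holds 27,000 values, which is one second of data at the rate given in the question.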

Let me know if that helps,

Otmar

01-10-2017 03:15 AM

Thanks a lot. I tried that and it works.

My question is, will this be an authentic representation of my data?

I am trying to correlate the sound pressure data to wear on the material and I feel that an arithmetic mean will obscure my data.

For reference I have attached one part of the tdms file.

01-10-2017 04:58 PM

Hi hmurya,

First of all, the only data you need is that first channel in the TDMS file. You can delete, or better yet avoid loading, all the other data-reduced groups (it looks like SignalExpress data).

When I run an FFT on that first channel, I see frequency content out to about 4000 Hz. If you want to maintain resolution all the way out to 4 kHz, you can resample down to no fewer than 8000 samples per second (from your current rate of about 25600 samples per second). This would still give you a roughly 3x smaller footprint with effectively no loss of signal content. You should not average, though; choose the DIAdem "Resampling" function instead.

If, for example, you only cared about the frequency content out to 2 kHz, you could resample down to 4000 samples per second, for a roughly 6x smaller footprint.
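The arithmetic behind these figures is the Nyquist criterion: the sample rate must be at least twice the highest frequency you want to keep. A small Python sketch of that calculation follows; the function names are hypothetical, and the rates are the approximate ones quoted in this post.

```python
def min_sample_rate(max_frequency_hz):
    """Lowest sample rate (samples/s) that still resolves max_frequency_hz (Nyquist)."""
    return 2 * max_frequency_hz


def reduction_factor(current_rate_hz, max_frequency_hz):
    """How many times smaller the data gets when resampled to the Nyquist minimum."""
    return current_rate_hz / min_sample_rate(max_frequency_hz)


print(min_sample_rate(4000))          # 8000
print(reduction_factor(25600, 4000))  # 3.2
print(reduction_factor(25600, 2000))  # 6.4
```

In practice a resampler also needs some margin above the strict minimum and a low-pass filter to prevent aliasing, so treat these as lower bounds on the sample rate.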

Brad Turpin

DIAdem Product Support Engineer

National Instruments

01-30-2017 10:58 PM

That sounds like something that would help. But my main issue still stands. I have wear data for every other second, and I need to correlate these values to that wear data.

So sampling down to that much would still not give me any proper correlation value.

01-31-2017 01:35 PM

Hi hmurya,

Your initial post stated that you were not able to load 12 such TDMS files containing 32 seconds of data each. Yet I can very quickly load 12 of the TDMS files you sent containing 34 seconds of data and concatenate them into one channel. I can also very quickly graph this 10,444,800 value channel in VIEW.

DataFilePath = "D:\NICS\Discussion Forums\hmurya\Sound_Pressure.tdms"

Call Data.Root.Clear

Call DataFileLoadSel(DataFilePath, "TDMS", "[1]/[1]")

Set Group = Data.Root.ChannelGroups(1)

Set AppChannel = Group.Channels(1)

FOR i = 1 TO 11

Set NewChannel = DataFileLoadSel(DataFilePath, "TDMS", "[1]/[1]")

Call ChnConcat(NewChannel, AppChannel)

Call Group.Channels.Remove(2)

NEXT ' i

It's not clear to me what meaningful metric you would get from averaging 1 second of pressure data that is largely sinusoidal. Would perhaps the 1 second RMS value be pertinent here? What are you measuring the pressure of, exactly? Is this "wear" data you speak of the pressure data you sent or a second data set which I haven't seen yet?
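To illustrate why the mean says little about a largely sinusoidal signal while the RMS does, here is a small Python sketch on a synthetic tone. The 100 Hz frequency and the amplitude of 2.0 are made-up values for illustration and are not taken from the attached file; only the 25600 samples-per-second rate comes from this thread.

```python
import math


def mean(values):
    """Arithmetic mean of a sequence of numbers."""
    return sum(values) / len(values)


def rms(values):
    """Root mean square: square root of the mean of the squared values."""
    return math.sqrt(sum(v * v for v in values) / len(values))


rate = 25600      # samples per second, as quoted earlier in the thread
freq = 100        # hypothetical tone frequency in Hz (assumption)
amplitude = 2.0   # hypothetical amplitude (assumption)

# One second of a pure sine wave containing a whole number of cycles.
one_second = [amplitude * math.sin(2 * math.pi * freq * n / rate) for n in range(rate)]

print(mean(one_second))  # very close to 0: positive and negative halves cancel
print(rms(one_second))   # very close to amplitude / sqrt(2)
```

The mean of the synthetic tone collapses to roughly zero regardless of how loud it is, whereas the RMS scales with the amplitude, which is why it is the more plausible intensity metric here.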

Brad Turpin

DIAdem Product Support Engineer

National Instruments

02-01-2017 03:18 AM

Erm. I will try to make this as clear as possible.

The same experiment was conducted 10 times, and each time there are 12 sets of data. Each set is around 32 seconds long, and each set builds on the previous one. I have uploaded two sound pressure TDMS files to show the progression: 01, 02

For each set I have the following two values:

- wear track width

- volume loss with track width

Which means at the end of the day I will have 12 values, 1 for each set.

So for the two sound files I have attached there are the values:

Enter wear track width (or nonpositive number to stop):0.321

The volume loss with track width 0.321 = 0.368

Enter wear track width (or nonpositive number to stop):0.476

The volume loss with track width 0.476 = 1.200

So what I ultimately need to do is link each TDMS file to its corresponding volume loss.

What I don't understand is how to boil down each TDMS file (864000 values) into a single value that will accurately represent the wear.

I don't know what else I can do with this sound data when I don't have enough values of the wear data.

Apologies if this sounds too dumb, I can't seem to figure out a solution to this.

02-01-2017 07:16 AM

Hi hmurya,

Your application is slowly coming into focus for me. I should have noticed that the name of the TDMS file you posted initially had the word "sound" in it. Have you tried replaying the sound along with the waveform in VIEW?

Is it your contention that the intensity of the recorded sound is related to the wear? If so, then I suggest the metric of the RMS value of the sound signal for each second. If you agree, then I can send you a little VBScript that calculates an RMS value for each elapsed second.

The simplest way to connect the two measured values per data file with the data file is to save them as new file properties. We can do that along with the RMS values so that you can trend those parameters with the DataFinder.

Brad Turpin

DIAdem Product Support Engineer

National Instruments

02-01-2017 07:45 AM

Hi.

Yes that's precisely what I want. The intensity should correspond to the wear.

I'll try to do that in VIEW once I get back to the PC that has the software.

I was thinking of RMS too, but unfortunately could not figure out how to get those RMS values for each second as I am still learning this software.

If it's of any help, I also have acceleration-based TDMS files.

I would really appreciate it if you could share the script.

Thank you

02-01-2017 04:11 PM

Hi hmurya,

Here's a script that calculates the RMS value for each second. It uses the Statistics command with a specific row range for each second and writes the results to a new channel.

DataFilePath = "D:\NICS\Discussion Forums\hmurya\Sound_Pressure.tdms"

Call Data.Root.Clear

Call DataFileLoadSel(DataFilePath, "TDMS", "[1]/[1]")

Set Group = Data.Root.ChannelGroups(1)

Set DatChannel = Group.Channels(1)

Set RmsChannel = Group.Channels.Add("RMS", DataTypeChnFloat64)

FOR n = 1 TO 23

StatSel(n) = "No"

NEXT ' n

StatSel(7) = "Yes" ' Root mean square

StatResChn = FALSE

StatClipCopy = FALSE

StatClipValue = FALSE

iMax = DatChannel.Size

Start = DatChannel.Properties("wf_start_offset").Value

Delta = DatChannel.Properties("wf_increment").Value

Call ChnWfPropSet(RmsChannel, "Time", "s", (Start+1)/2, 1)

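SecVals = CLng(1/Delta) ' Number of values per second, rounded to a whole row count

k = 0 ' Index of the next row in the RMS channel

FOR i = 1 TO iMax Step SecVals

k = k + 1

P1 = i

P2 = i + SecVals - 1 ' Keep P2 numeric so the comparison below is a numeric one

IF P2 > iMax THEN P2 = iMax ' Clip the last block to the end of the channel

RowRange = P1 & "-" & P2

Call StatBlockCalc("Channel", RowRange, DatChannel)

RmsChannel(k) = StatSqrMean

NEXT ' Until end of channel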
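For comparison, the same per-second logic can be sketched in plain Python without DIAdem. This is only an offline cross-check under assumptions: `rms_per_second` is a hypothetical helper, and the values are assumed to be already loaded into a list, since reading the TDMS file is not shown.

```python
import math


def rms_per_second(values, samples_per_second):
    """Return one RMS value per block of `samples_per_second` consecutive values.

    The final block may be shorter than the others; it is still included,
    mirroring the clipping of the last row range in the script above.
    """
    results = []
    for start in range(0, len(values), samples_per_second):
        block = values[start:start + samples_per_second]  # slicing clips at the end
        results.append(math.sqrt(sum(v * v for v in block) / len(block)))
    return results


# Toy data: 4 values per "second", with a shorter final block of 2 values.
print(rms_per_second([3, 3, 3, 3, 4, 4, 4, 4, 5, 5], 4))  # [3.0, 4.0, 5.0]
```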

Brad Turpin

DIAdem Product Support Engineer

National Instruments