Darren's Weekly Nugget 10/19/2009

10-19-2009 02:04 PM

Today I'm going to talk about removing block diagrams from VIs. You can save a VI without its block diagram such that the source code for the VI is no longer included in the .vi file. The manner in which you interactively save a VI without its block diagram has changed over the years:

- LabVIEW 7.x and previous - Choose File > Save with Options > Custom Save > Remove diagrams

- LabVIEW 8.0 and 8.2 - Create a Source Distribution build specification, then go to Source File Settings > Customize VI Settings > Remove Diagram

- LabVIEW 8.5 and later - Create a Source Distribution build specification, go to Source Files and add your VI to the distribution, then go to Source File Settings, uncheck Use default save settings, and check Remove block diagram

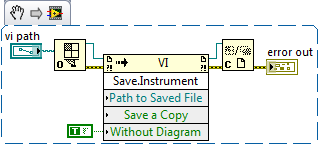

If you're doing this programmatically (i.e. you just want to save all the VIs in a folder without block diagrams), it's pretty easy to do with VI Server:
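Outside of LabVIEW, the batch part of that job (finding every VI in a folder and handing it to a save step) might look roughly like the Python sketch below. This is not the VI Server code itself: `save_without_diagram` is a hypothetical, caller-supplied callback standing in for whatever actually performs the save, and the function names are made up for illustration. Run anything like this on a copy of the folder so the originals keep their diagrams.

```python
from pathlib import Path
from typing import Callable, List


def collect_vi_paths(folder: Path) -> List[Path]:
    """Return every .vi file under `folder` (recursively), sorted for a stable order."""
    return sorted(p for p in folder.rglob("*.vi") if p.is_file())


def process_folder(folder: Path, save_without_diagram: Callable[[Path], None]) -> int:
    """Hand each VI to the save step and return how many files were handled."""
    count = 0
    for vi_path in collect_vi_paths(folder):
        # Hypothetical placeholder for the real save; NOT a LabVIEW API.
        save_without_diagram(vi_path)
        count += 1
    return count
```

Passing `print` as the callback gives a dry run that only lists the VIs that would be touched.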

So why would you want to save a VI without its block diagram? Here are some pros and cons for diagram removal:

Pros

- The source code is no longer present in the VI. This means that nobody, no matter how hard they try, can access the diagram behind the VI...because it's not there.

- The file size on disk is reduced when a VI's block diagram is removed.

- Since the file size on disk is smaller, a VI without a diagram will load faster.

Cons

- The source code is no longer present in the VI. 🙂 As a security measure, this is good. But if you neglect to back up your code, this could be very bad.

- The VI is only loadable in the version of LabVIEW that was used to remove its diagram. We need the diagram in order to recompile a VI, and a recompile is required whenever loading a VI from a different version of LabVIEW.

- The VI is only loadable on the platform (i.e. Windows, Mac, Linux) that was used to remove its diagram. Same reason as above.

- The VI with its diagram removed will become broken if you change the connector pane of any subVI that it calls.

- The VI with its diagram removed will become broken if you change the inplaceness of any of its subVI inputs.

So with all this in mind, removing the diagrams of your VIs is generally discouraged. However, if you have platform-specific, version-specific code that must ship as VIs (as opposed to an EXE), and your end users have no need to see or modify the code, then you would see some file size and performance benefits from removing block diagrams. If this is not your use case, then you're probably better off password-protecting your VIs or building them into an EXE.

10-20-2009 09:03 AM

Darren wrote:...

Cons

- The source code is no longer present in the VI. 🙂 As a security measure, this is good...

Nice nugget, Darren, thanks.

A scripting-based LabVIEW disassembler (one that could recover the block diagram from a VI whose BD was removed) is still in my dreams...

Andrey.

10-21-2009 05:43 AM

10-27-2009 09:46 AM

Darren wrote:

Pros

- Since the file size on disk is smaller, a VI without a diagram will load faster.

As a cross-platform advocate, I strongly agree about the disadvantages of removing a block diagram. Relying on password protection is probably equivalent in 99% of cases. Is that 1% case really enough to outweigh the disadvantages?

But on to the quote above. Since only the code segment is loaded from a VI until the BD is opened, does it really load faster with the BD removed? The VI just looks at a pointer to the code segment of the file and loads that. I believe that the BD is not loaded or accessed unless one of the items in the "Cons" list is true, forcing a recompile.

Has anyone documented the actual increase in VI loading speed from diagram removal? I am a bit skeptical here. Of course, that would really reduce the number of "Pros" items in this nugget.

10-27-2009 11:03 AM

Yes, as a general rule, a VI file on disk will take longer to load if the file is larger, regardless of which part of the file is actually being accessed. A while back I shipped a product with block diagrams removed purely because there was about a 15% load time improvement over the exact same codebase with diagrams retained.

10-27-2009 11:25 AM

Did I read that correctly? 15% load time improvement by simply removing the block diagram?

Compiling (with BD) into an exe gets you the same improvement, right?

10-27-2009 11:30 AM

10-27-2009 11:50 AM

Yes... I could see that when loading hundreds of VIs. And thus the need for the routine you provided at the top. 😉

And yes... Make really sure that the entire project is backed up before doing so. (you're courageous)

10-27-2009 04:43 PM

Ray.R wrote: Yes... I could see that when loading hundreds of VIs. And thus the need for the routine you provided at the top. 😉

I still can't see it. Disks are now random access, so you read the header of the VI, which has a pointer to the start and length of the code, and you load the code into memory. The larger file size should have no effect, even for hundreds of VIs. The load system still has to jump from code segment to code segment for each VI.

Removing the diagram should be just that; it shouldn't put extra linking info into the code or change the code?

If there is that much speed improvement, it means to me that something is wrong! Or I am horribly misunderstanding the concept. It still has to check for changes to subVIs; the only difference is whether the system has to recompile or simply breaks the VI. But in the unbroken case, the speeds should not be radically different.

What conceivably could the system be doing with that 15% extra time?

10-27-2009 07:00 PM

When you load a VI, the operating system first loads the entire VI file into the disk cache. Then, the program is free to randomly access the bits, once they are loaded into memory. If the VI file is smaller, then the step of loading the file into the disk cache is faster.

If your VI has a block diagram, but you never load it, the operating system will still load the entire file into the disk cache. That is the step that gates your performance.

As it turns out, a large portion of the time LabVIEW spends loading VIs is waiting for the file to finish loading into the disk cache, and a comparably short time is spent manipulating those bytes in memory. The single best indicator of file load time is file size. When we try to optimize file load time, we always end up optimizing file size, because very little else would be effective.

Why do operating systems behave this way? Why load an entire file into the cache, instead of waiting until specific bytes are requested? Latency. While hard drives have very good bandwidth, they still have to wrestle with latency.

Bottom line: if you want your VIs to load as fast as possible, make them as small as possible. Get rid of your diagram if you can.
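As a back-of-the-envelope illustration of the argument above, here is a toy model in Python in which load time is a fixed per-file latency plus file size divided by read bandwidth. The model itself and every number in it (latency, bandwidth, file sizes) are assumptions picked for illustration; they are not measurements of LabVIEW or of any real disk, and they are not meant to reproduce the 15% figure quoted earlier in the thread.

```python
def load_time_s(size_bytes: int, latency_s: float, bandwidth_bytes_per_s: float) -> float:
    """Toy model: one latency hit plus a sequential read of the whole file."""
    return latency_s + size_bytes / bandwidth_bytes_per_s


def relative_saving(size_with_bd: int, size_without_bd: int,
                    latency_s: float, bandwidth_bytes_per_s: float) -> float:
    """Fraction of load time saved by the smaller file under the toy model."""
    before = load_time_s(size_with_bd, latency_s, bandwidth_bytes_per_s)
    after = load_time_s(size_without_bd, latency_s, bandwidth_bytes_per_s)
    return (before - after) / before


# Hypothetical numbers: 10 ms latency, 50 MB/s bandwidth,
# and a 400 KB VI that shrinks to 250 KB without its diagram.
saving = relative_saving(400_000, 250_000, 0.010, 50_000_000)
print(f"{saving:.1%} of load time saved in this toy model")
```

In this model the saving comes only from the bytes that no longer have to be read. If the OS fetched just the code segment, the bytes read would be the same with or without the diagram and the saving would drop to zero, which is the case the skeptical posts above had in mind.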